We Need Breadth-First AI Safety Plans

http://mdickens.me/2026/06/01/breadth-first_AI_safety_plans/ Depth-first plans lay out a path from here to aligned superintelligent AI. We need those kinds...

Sentient Welfare Across Three Futures

http://mdickens.me/2026/05/25/three_futures_sentient_welfare/ Three categories of futures, depending on how AI goes: ASI timelines are long. ASI timelines...

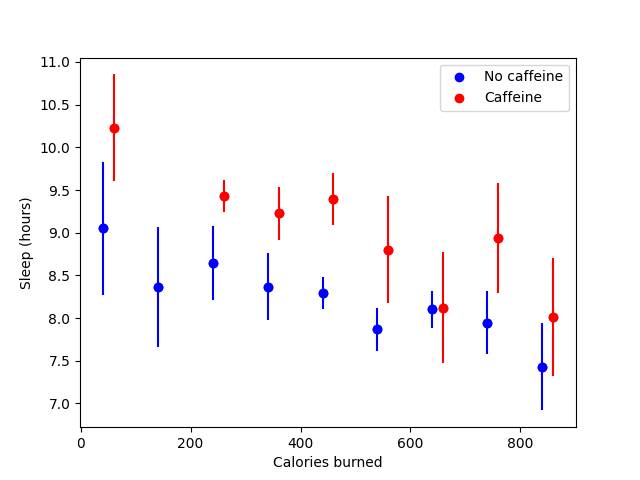

I sleep less when I exercise more

http://mdickens.me/2026/05/18/I_sleep_less_when_I_exercise_more/ They say exercise improves sleep quality. Is that true for me? To test this hypothesis, I...

Donation Timing Under Uncertainty About AI Timelines

http://mdickens.me/2026/05/11/donation_timing_given_AI_timelines/ A few years back, I got a big pile of money from working at a tech startup. I put a lot of...

Thoughts on investing for transformative AI

http://mdickens.me/2026/05/04/investing_for_transformative_ai/ TLDR: I basically don’t. Contents: Ethical concerns, Thoughts on how to avoid...

I'm extremely worried that superintelligent AI will kill everyone

http://mdickens.me/2026/04/27/worried_about_ASI/ I’d guess maybe a 50% chance that we’re all dead within 5–20 years because somebody will build...

I was wrong: concentrated factor portfolios don't have alpha

http://mdickens.me/2026/04/20/I_was_wrong_concentrated_factor_portfolios_don't_have_alpha/ Previously, I wrote about how investors can simulate leverage via...

Can AI make advancements in moral philosophy by writing proofs?

http://mdickens.me/2026/04/13/can_AI_write_moral_philosophy_proofs/ If civilization advances its technological capabilities without advancing its wisdom, we...

Pausing AI Is the Best Answer to Post-Alignment Problems

http://mdickens.me/2026/04/11/pause_for_post-alignment_problems/ Even if we solve the AI alignment problem, we still face post-alignment problems, which are...

By Strong Default, ASI Will End Liberal Democracy

http://mdickens.me/2026/04/06/by_strong_default_ASI_will_end_liberal_democracy/ The existence of liberal democracy—with rule of law, constraints on...

The Future Will Be Weirder Than That

http://mdickens.me/2026/03/29/future_will_be_weirder_than_that/ Many people in the animal welfare community treat AI as a powerful but normal technology, in...

Which is better for sentient beings: an "ethical" AI or a corrigible AI?

http://mdickens.me/2026/03/28/which_is_better_for_animals_value_lock-in_or_corrigibility/ Cross-posted to the EA Forum. An aligned ASI can be “ethical” (it...

An argument for why aligned ASI wouldn't be bad for animals

http://mdickens.me/2026/03/27/resource_constraints_argument_why_aligned_AI_wouldn't_be_bad_for_animals/ In the far future, why would people use up precious...

List of ideas for improving animal welfare in light of transformative AI

http://mdickens.me/2026/03/26/quick_ideas_animal_welfare_in_light_of_ASI/ If transformative AI arrives soon, what interventions might improve animal welfare...

I used to think aligned ASI would be good for all sentient beings; now I don't know what to think

http://mdickens.me/2026/03/25/I_used_to_think_aligned_ASI_would_be_good_for_sentient_beings/ Epistemic status: Speculating with no central thesis. This post...

Cost-effectiveness model for AI alignment-to-animals vs. alignment-in-general

http://mdickens.me/2026/03/24/alignment-to-animals_BOTEC/ Last September, I wrote: There’s a (say) 80% chance that an aligned(-to-humans) AI will be good for...

Which types of AI alignment research are most likely to be good for all sentient beings?

http://mdickens.me/2026/03/23/which_types_of_alignment_research_are_good_for_all_sentient_beings/ AI alignment is typically defined as the task of aligning...

Worlds where we solve AI alignment on purpose don't look like the world we live in

http://mdickens.me/2026/03/20/worlds_where_we_solve_alignment_on_purpose/ (Or: Why I don’t see how the probability of extinction could be less than 25% on...

Value Investing in the Age of AGI

http://mdickens.me/2026/03/11/value_investing_agi/ Most people who write about AI and investing fall into one of two camps: traditional...

The Structural Return Argument Against Value Investing

http://mdickens.me/2026/03/02/structural_return_argument_against_value_investing/ Value investing had a singularly bad run from 2007 to 2020. (And it hasn’t...