Simulating Queueing

I read a great post this week from Marc Brooker: SFQ: Simple, Stateless, Stochastic Fairness. In it, Marc does a great job of explaining a cute little algorithm for isolating different customers from each other in a multitenant system. You should go read that post first.

I was curious to improve my intuition on how it is that this algorithm works so I wrote some simulations to see how it behaves and to observe the steps that Marc lays out actually making an improvement.

Let's describe the experiment setup:

- We have

nclients. - Each of those clients submit their requests to the balancer.

- The balancer either enqueues the message or drops it.

- The queues are a fixed (O(1) in the number of clients) set of channels, which for a given message, the balancer chooses one of to attempt to enqueue in.

- The processor is a single-threaded worker that plucks messages out of the queue in round-robin order.

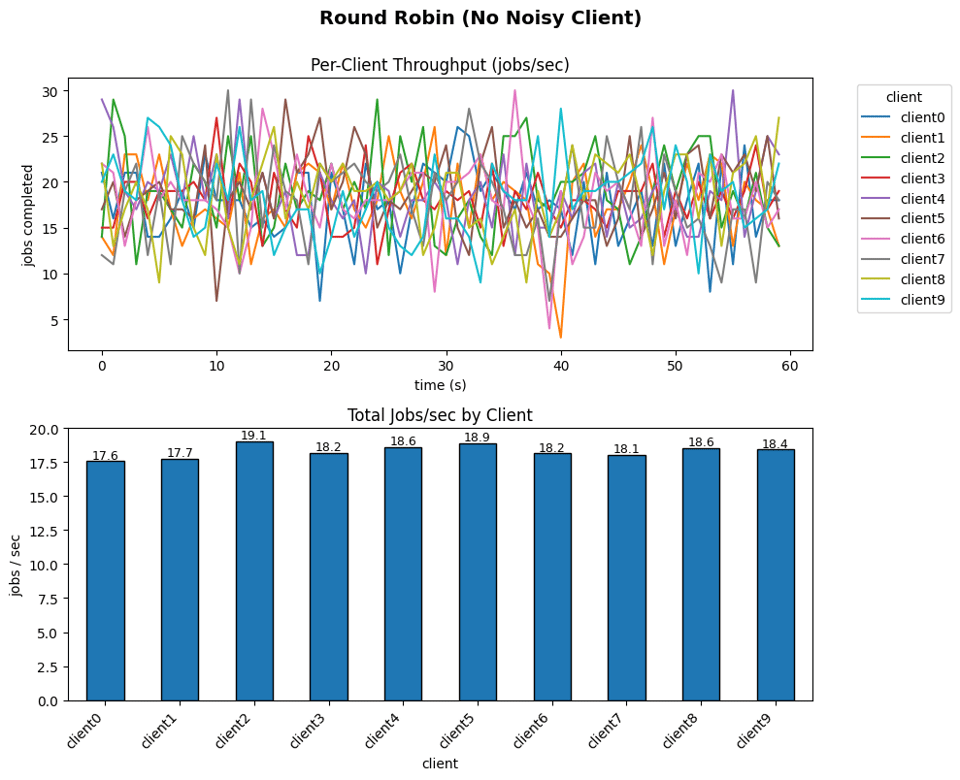

A naive approach to implementing this might just be to round-robin the messages ourselves:

func (b *roundRobinBalancer) submit(v job) bool {

i := b.next.Add(1) - 1

ch := b.outChans[i%uint64(len(b.outChans))]

select {

case ch <- v:

return true

default:

return false

}

}

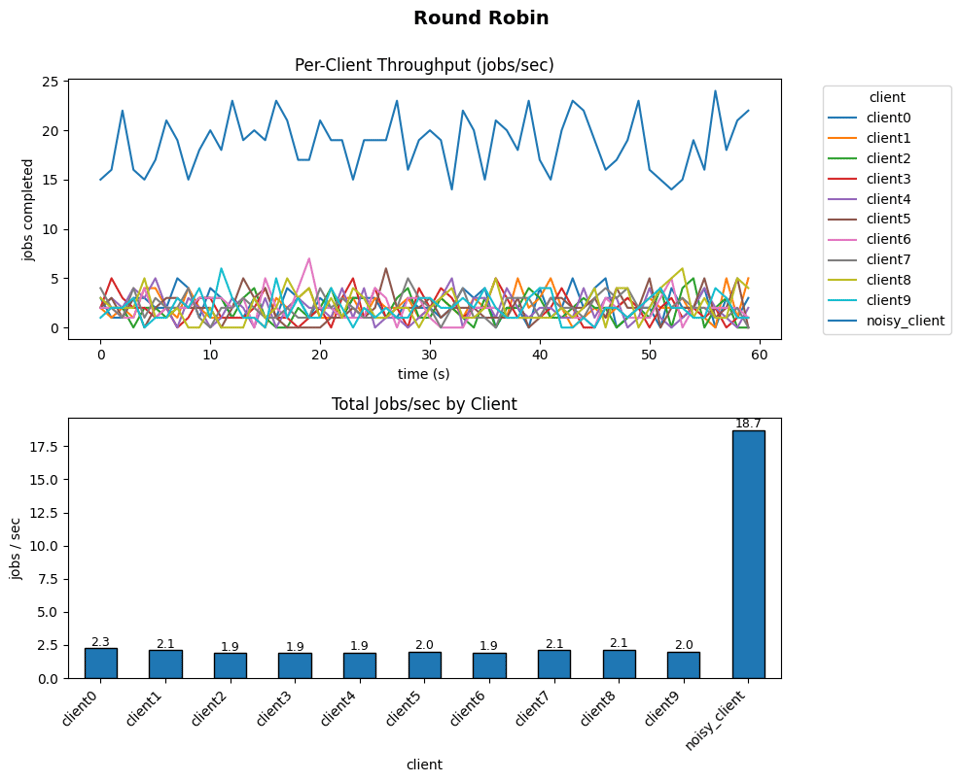

This comes with all of the problems you might expect: a single noisy client monopolizes the time of the processing thread because it drowns out all of the other, well-behaved clients:

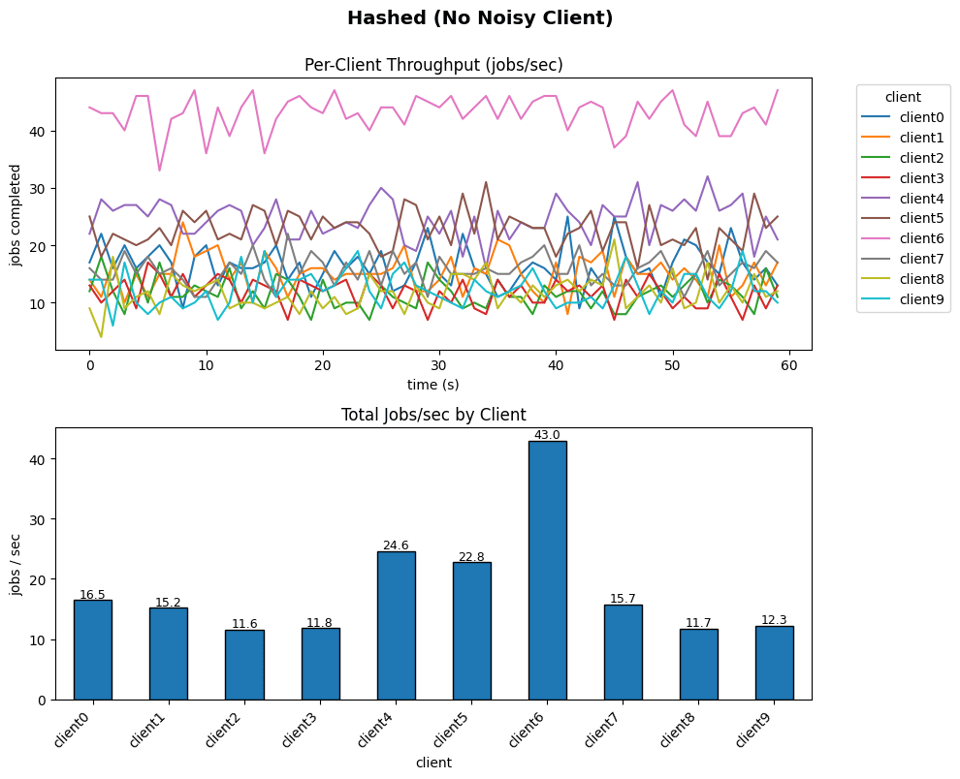

The next step to improve this situation is to restrict each client to a single queue: we hash their ID to decide which channel they'll use:

func (b *hashedBalancer) submit(v job) bool {

h := fnv.New32a()

binary.Write(h, binary.LittleEndian, int32(v.clientId))

i := int(h.Sum32()) % len(b.outChans)

select {

case b.outChans[i] <- v:

return true

default:

return false

}

}

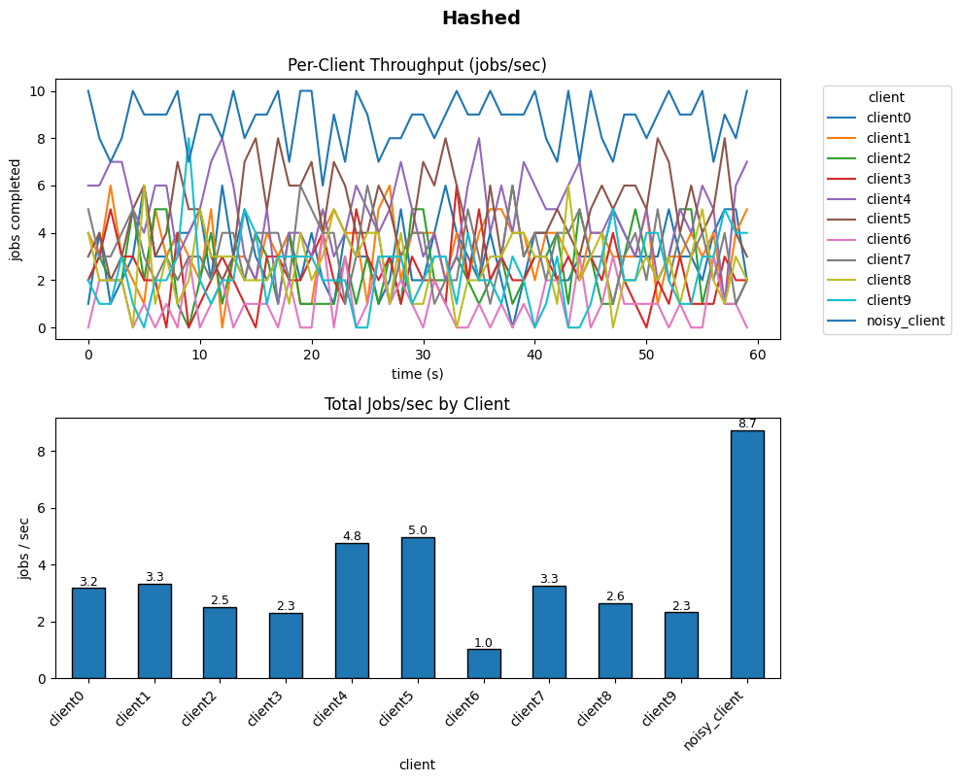

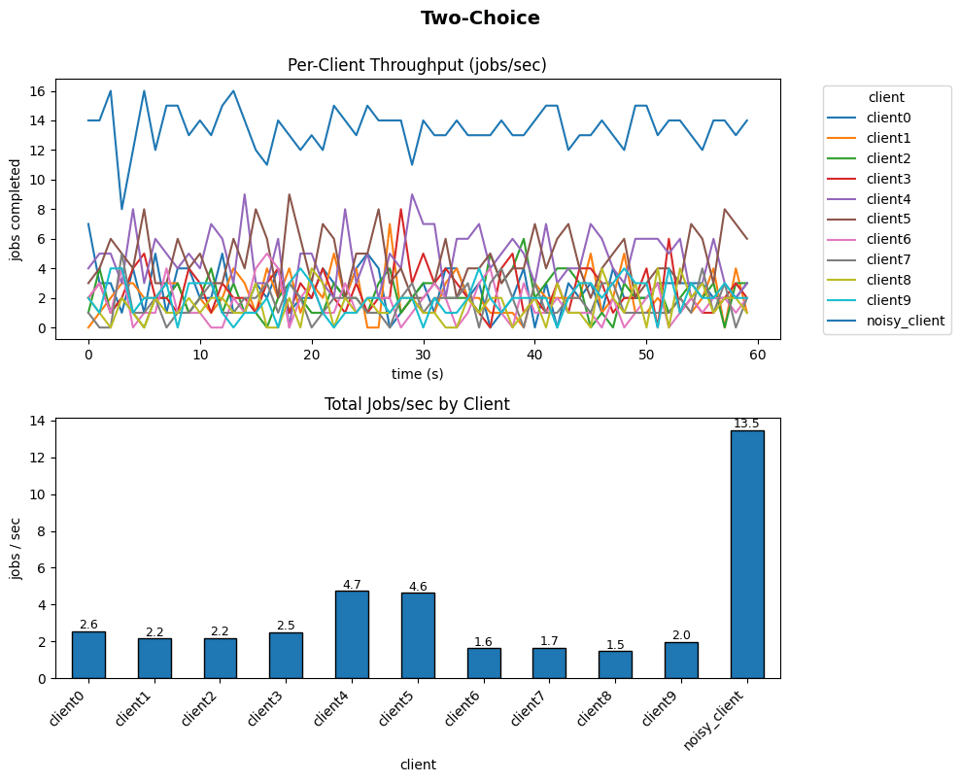

This looks a lot better along some axes; the entire work of the processor is no longer monopolized by the single noisy client, it's shared a bit more by the rest of the clients as well. It's still not great, though. If you were unlucky enough to get sharded onto the same queue as the noisy client, then you have the same problems as before, we can see this in the fact that there's a disparity in the work that gets done by different clients:

Poor client6 in the noisy simulation must have gotten sharded onto the same queue as noisy_client, since it could barely get any work done.

The next step is to give some choice, we pull a "power-of-two choices" trick, which is to assign via hashing two queues to each client, and then have them choose the less-loaded one. This is a well known tool that reduces the expected size of the most full queue, and in our particular situation, gives clients an opportunity to avoid being matched up with the noisy client, they'd need to be matched up twice. And, we could in fact do more than just two, the technique generalizes just fine, but in traditional queueing problems, two is a sweet spot.

func (b *twoChoiceBalancer) submit(v job) bool {

n := len(b.outChans)

var buf [4]byte

binary.LittleEndian.PutUint32(buf[:], uint32(v.clientId))

i := int(xxh3.Hash(buf[:]) % uint64(n))

var j int

for attempt := uint32(1); ; attempt++ {

binary.LittleEndian.PutUint32(buf[:], (attempt<<16)|uint32(v.clientId))

j = int(xxh3.Hash(buf[:]) % uint64(n))

if j != i {

break

}

}

if len(b.outChans[j]) < len(b.outChans[i]) {

i = j

}

select {

case b.outChans[i] <- v:

return true

default:

return false

}

}

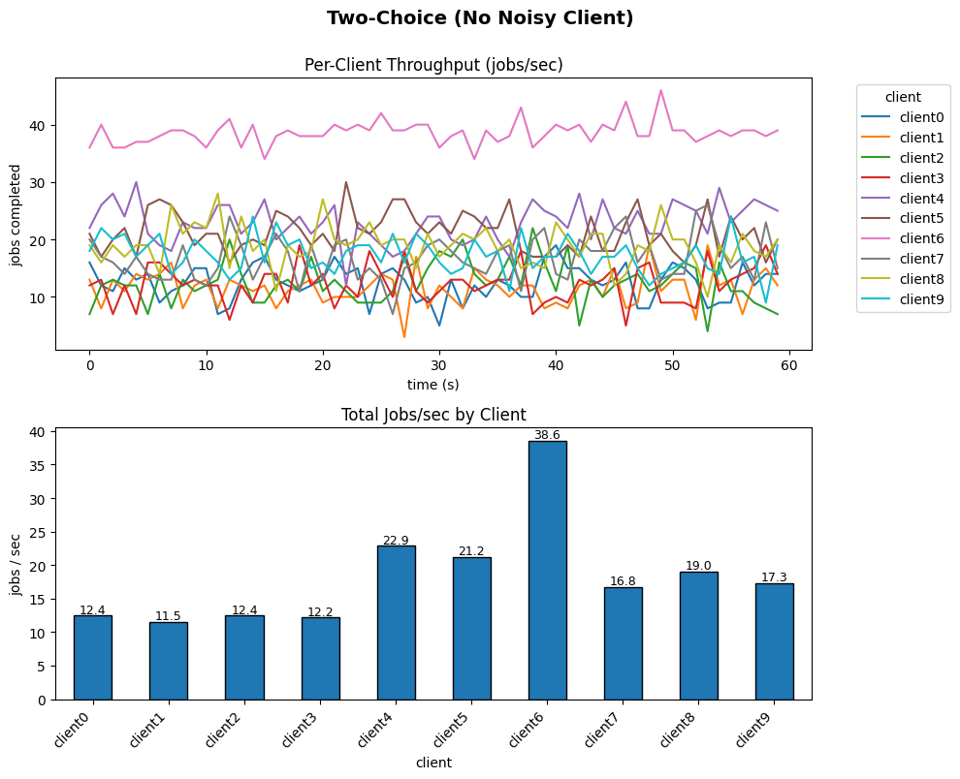

Running this simulation, we see that things are even better than before, but it's still possible for some clients to get much luckier with their assignments than others:

clients 4 and 5 here were likely assigned relatively quieter queues than some of the other clients.

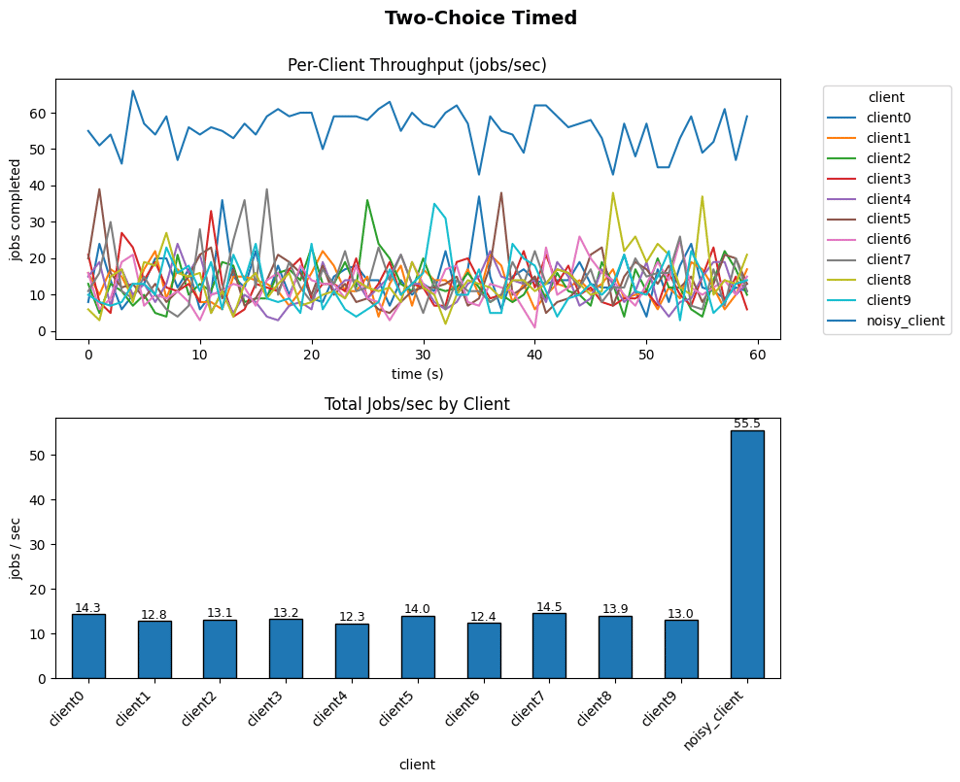

The last part of the trick from Marc's post is to periodically reshuffle the queue assignments. We can simulate this by including a current epoch alongside the other inputs to our hash function. We see this once again smooths out the total throughput for all the clients, and the impact on the addition of the noisy client is not as intense as it is for the other approaches:

func (b *twoChoiceTimedBalancer) submit(v job) bool {

n := len(b.outChans)

sec := int32(now())

var buf [8]byte

binary.LittleEndian.PutUint32(buf[0:4], uint32(sec))

binary.LittleEndian.PutUint32(buf[4:8], uint32(v.clientId))

i := int(xxh3.Hash(buf[:]) % uint64(n))

var j int

for attempt := uint32(1); ; attempt++ {

binary.LittleEndian.PutUint32(buf[4:8], (attempt<<16)|uint32(v.clientId))

j = int(xxh3.Hash(buf[:]) % uint64(n))

if j != i {

break

}

}

if len(b.outChans[j]) < len(b.outChans[i]) {

i = j

}

select {

case b.outChans[i] <- v:

return true

default:

return false

}

}

This is my recommendation that if you do systems-y adjacent stuff it's good fun to learn how to use Jupyter and to come up with a workflow that lets you easily simulate stuff like this. It's a great way to mess around with these kinds of algorithms and learn things and build intuition. I should emphasize that I think the important thing is the building of the simulations; don't settle for someone else's flashy visualizer, even mine! That's a trap! What I am providing you in this newsletter is entertainment, not education!

Add a comment: