Writing a Transpiler, Pt. 1

OCrab: A Rust to OCaml Transpiler for Improving the OCaml Ecosystem.

About a month ago, I started writing a program called OCrab.

This kind of program is known as a translator, a source-to-source compiler, or a transpiler.

The entire purpose of a transpiler is to take code from one language and turn it into code for another language.

In our case, from Rust code to OCaml code.

Why would we want to do this?

Why Rust to OCaml

OCaml is a functional programming language used in certain sectors of industry, mainly in finance.

It is also used quite a bit to build other languages; the original Rust compiler, for example, was written in OCaml.

It's a garbage-collected language that compiles to native code, and its syntax is high-level.

OCaml, potentially (more on this later), lets you do low-level operations safely.

Why?

OCaml values are immutable by default.

It's also incredibly safe because of its type system, which also has type inference (the compiler can work out the types you're using without you writing them down).

A small group of us are working to improve the OCaml ecosystem.

We believe it's a fantastic language to use instead of languages like Python or JavaScript.

The problem is, OCaml doesn't have direct access to operating system resources, such as interacting with hardware, or controlling files and processes.

For a language, there are a few ways to accomplish this.

We could do something similar to Golang, where the language makes system calls (syscalls) directly[^1].

Go runs its concurrent work in goroutines, which are scheduled by the Go runtime.

The runtime is written mostly in Go (with some assembly) and is statically linked into the compiled binary during the linking phase.

Since Go manages its own runtime instead of going through the system's C library, on platforms like Linux it makes syscalls itself.

However, syscalls aren't portable.

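Each operating system and CPU architecture has its own syscall numbers and calling conventions, and some kernels (macOS's, for example) don't promise a stable syscall interface between versions, so that code has to be ported over for every target.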

Instead, most languages choose to use libc, a portable library that wraps the details of making syscalls in C functions that other programs can call.

That's exactly what we're doing.
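To get a feel for what "calling libc" means, here's a minimal Rust sketch that declares libc's getpid function by hand and calls it. This isn't OCrab code. It assumes a Unix-like target, where Rust's standard library already links against libc, and a pre-2024 Rust edition. The libc crate is essentially a huge collection of declarations like this one, plus types and constants.

```rust
use std::os::raw::c_int;

// Declare a C function exported by libc. On Unix-like targets, pid_t is a C int.
// Note: in the Rust 2024 edition this block must be written `unsafe extern "C"`.
extern "C" {
    fn getpid() -> c_int;
}

/// Ask libc for the current process ID; libc performs the actual syscall.
fn pid_via_libc() -> c_int {
    // Calling a foreign function is unsafe because the compiler can't check it.
    unsafe { getpid() }
}
```

The result should match std::process::id(), which gets the same value through Rust's standard library.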

We face another problem, though.

There are many platforms that support libc.

Rust's libc crate alone supports 106 platforms.

That's over 100k lines of code to move over to OCaml.

To do that by hand would be insanely tedious and error-prone.

Not to mention how terrible maintaining that code would be.
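Part of why there's so much code is that the same C definition can differ from platform to platform. Here's a minimal sketch of the pattern, using Rust's cfg attributes to pick a value per operating system. The AF_INET6 values come from the Linux and macOS C headers. The sketch only covers those two targets, so it won't compile elsewhere.

```rust
use std::os::raw::c_int;

// Same constant name, different value per OS: each target needs its own definition.
#[cfg(target_os = "linux")]
pub const AF_INET6: c_int = 10;

#[cfg(target_os = "macos")]
pub const AF_INET6: c_int = 30;

// AF_UNSPEC is 0 on both of these platforms, so this sketch doesn't gate it.
pub const AF_UNSPEC: c_int = 0;
```

Multiply that by thousands of constants, types, and functions across 106 platforms, and the size of the job becomes clear.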

So we're writing OCrab, taking Rust's libc definitions and translating them to an OCaml libc!

How do we do that, though?

Under the hood

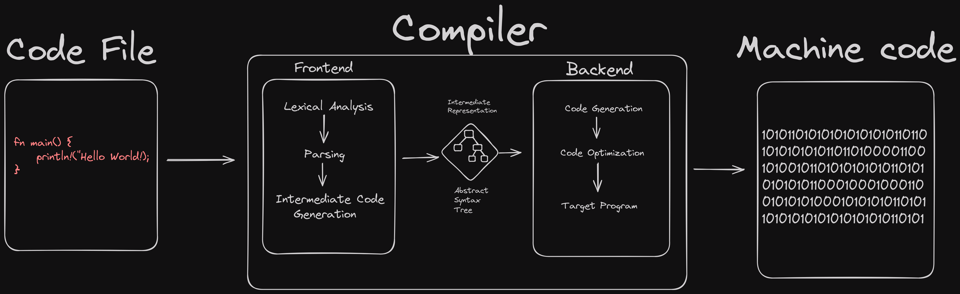

It's important to understand how compilers work.

Compilers are composed of two parts: a frontend and a backend.

The frontend takes care of lexical analysis, parsing, and intermediate code generation.

The backend takes care of code optimization, code generation, and outputting the target program.
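As a rough illustration of the first frontend step, here's a hypothetical hand-written lexer (not syn's) that turns a line like pub const AF_UNSPEC: ::c_int = 0; into a flat list of tokens. Parsing then arranges those tokens into a tree.

```rust
#[derive(Debug, PartialEq)]
enum Token {
    Ident(String), // identifiers and keywords, e.g. `pub`, `AF_UNSPEC`
    Int(u64),      // integer literals, e.g. `0`
    PathSep,       // `::`
    Colon,         // `:`
    Eq,            // `=`
    Semi,          // `;`
}

/// Split source text into tokens, skipping whitespace.
fn lex(src: &str) -> Result<Vec<Token>, String> {
    let chars: Vec<char> = src.chars().collect();
    let mut tokens = Vec::new();
    let mut i = 0;
    while i < chars.len() {
        let c = chars[i];
        if c.is_whitespace() {
            i += 1;
        } else if c.is_ascii_alphabetic() || c == '_' {
            // Consume a whole identifier or keyword.
            let start = i;
            while i < chars.len() && (chars[i].is_ascii_alphanumeric() || chars[i] == '_') {
                i += 1;
            }
            tokens.push(Token::Ident(chars[start..i].iter().collect()));
        } else if c.is_ascii_digit() {
            // Consume a run of digits as one integer literal.
            let start = i;
            while i < chars.len() && chars[i].is_ascii_digit() {
                i += 1;
            }
            let text: String = chars[start..i].iter().collect();
            tokens.push(Token::Int(text.parse().map_err(|e| format!("{}", e))?));
        } else if c == ':' {
            // `::` is a single token; a lone `:` is a colon.
            if i + 1 < chars.len() && chars[i + 1] == ':' {
                tokens.push(Token::PathSep);
                i += 2;
            } else {
                tokens.push(Token::Colon);
                i += 1;
            }
        } else if c == '=' {
            tokens.push(Token::Eq);
            i += 1;
        } else if c == ';' {
            tokens.push(Token::Semi);
            i += 1;
        } else {
            return Err(format!("unexpected character {:?} at {}", c, i));
        }
    }
    Ok(tokens)
}
```

For our constant, this yields nine tokens: three identifiers, a colon, a path separator, c_int, an equals sign, the literal 0, and a semicolon.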

In OCrab, we use a Rust crate called syn, which takes care of the lexical analysis and parsing for us.

Our job is to turn that parsed code into an intermediate representation, a data structure called an abstract syntax tree (AST).

ASTs are tree representations of code that compilers use for all of their analysis and code generation.
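To make "tree representation" concrete, here's a tiny hypothetical AST for integer arithmetic, with two simple analyses over it. It's a sketch for illustration; syn's real types are much richer.

```rust
// A hypothetical, minimal AST (not syn's types). Each node is either
// a leaf (a number) or owns its child nodes.
enum Expr {
    Int(i64),
    Add(Box<Expr>, Box<Expr>),
    Mul(Box<Expr>, Box<Expr>),
}

/// Analysis 1: evaluate the tree bottom-up.
fn eval(e: &Expr) -> i64 {
    match e {
        Expr::Int(n) => *n,
        Expr::Add(l, r) => eval(l) + eval(r),
        Expr::Mul(l, r) => eval(l) * eval(r),
    }
}

/// Analysis 2: count how many layers deep the tree goes.
fn depth(e: &Expr) -> usize {
    match e {
        Expr::Int(_) => 1,
        Expr::Add(l, r) | Expr::Mul(l, r) => 1 + depth(l).max(depth(r)),
    }
}
```

The expression 1 + 2 * 3 becomes Add(Int(1), Mul(Int(2), Int(3))). Operator precedence is captured by the shape of the tree, so eval returns 7 and depth returns 3.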

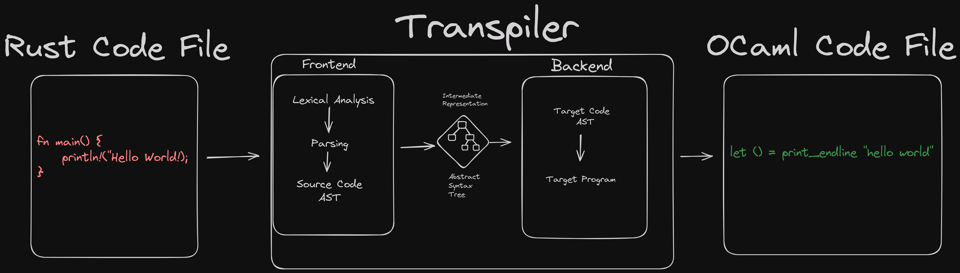

The translation flow for a transpiler looks slightly different, because we're going from one source code file to another.

In essence, we're going from Rust code file -> Rust AST -> OCaml AST -> OCaml code file.
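Here's a minimal sketch of the last two arrows, assuming syn has already handled the first one. The RustConst and OCamlLet types and the lower and emit functions are hypothetical simplifications for illustration, not OCrab's actual code.

```rust
// Hypothetical, heavily simplified node for `pub const NAME: TYPE = VALUE;`.
struct RustConst {
    name: String, // e.g. "AF_UNSPEC"
    ty: String,   // last segment of the type path, e.g. "c_int"
    value: i64,   // the integer literal, e.g. 0
}

// Hypothetical node for the OCaml side: `let name : ty = value`.
struct OCamlLet {
    name: String,
    ty: String,
    value: i64,
}

/// Rust AST -> OCaml AST.
fn lower(c: &RustConst) -> OCamlLet {
    // Assumption for this sketch: C's `int` maps to OCaml's native `int`.
    // (OCaml's `int` is 63 bits on 64-bit systems; a real binding might prefer Int32.)
    let ty = match c.ty.as_str() {
        "c_int" => "int".to_string(),
        other => other.to_string(),
    };
    OCamlLet {
        // OCaml value names must start with a lowercase letter or `_`.
        name: c.name.to_lowercase(),
        ty,
        value: c.value,
    }
}

/// OCaml AST -> OCaml source text.
fn emit(l: &OCamlLet) -> String {
    format!("let {} : {} = {}", l.name, l.ty, l.value)
}
```

Feeding it AF_UNSPEC of type c_int with value 0 produces let af_unspec : int = 0. Keeping a separate OCaml AST in the middle means the printing step doesn't need to know anything about Rust.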

We started writing OCrab in the simplest way possible.

Take in a Rust code file, read the syntax, and output the result.

We made a blank Rust file called empty.rs and fed it to the program, to see what we would get.

```text
&syntax = File {
    shebang: None,
    attrs: [],
    items: [],
}
```

Interesting, it seems we get back a File struct.

I'm guessing this represents our Rust AST.

To confirm my suspicions, I checked the syn library docs.

Sure enough, since syn takes care of our lexical analysis and parsing, it conveniently gives us a struct called syn::File.

This struct represents our whole AST for Rust.

```rust
pub struct File {
    pub shebang: Option<String>,
    pub attrs: Vec<Attribute>,
    pub items: Vec<Item>,
}
```

Curious, I took one of the constant definitions from the Rust libc library to see what the output would be.

All we're doing here is defining a public constant called AF_UNSPEC of type ::c_int and assigning it the value 0.

```rust
pub const AF_UNSPEC: ::c_int = 0;
```

When I ran OCrab again, I was shocked!

```text
&syntax = File { // <-- Layer 0
    shebang: None,
    attrs: [],
    items: [
        Item::Const { // <-- Layer 1
            attrs: [],
            vis: Visibility::Public(
                Pub,
            ),
            const_token: Const,
            ident: Ident(
                AF_UNSPEC,
            ),
            generics: Generics {
                lt_token: None,
                params: [],
                gt_token: None,
                where_clause: None,
            },
            colon_token: Colon,
            ty: Type::Path { // <-- Layer 2
                qself: None,
                path: Path { // <-- Layer 3
                    leading_colon: Some(
                        PathSep,
                    ),
                    segments: [ // <-- Layer 4
                        PathSegment {
                            ident: Ident( // <-- Layer 5
                                c_int,
                            ),
                            arguments: PathArguments::None,
                        },
                    ],
                },
            },
            eq_token: Eq,
            expr: Expr::Lit {
                attrs: [],
                lit: Lit::Int {
                    token: 0,
                },
            },
            semi_token: Semi,
        },
    ],
}
```

That's a huge tree!

One small line of code produced an AST this large.

I quickly came to understand that ASTs are just nested structures within nested structures, many layers deep.
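Here's a hypothetical, trimmed-down mirror of the types in that output, keeping only the fields on the path to the type name. A small function then peels the layers to find each constant's type. These are stand-ins for illustration, not syn's real definitions.

```rust
// Hypothetical mirrors of syn's types; field names follow the debug output above.
struct File { items: Vec<Item> }
enum Item { Const(ItemConst), Other }
struct ItemConst { ident: String, ty: Type }
#[allow(dead_code)]
enum Type { Path(TypePath), Other }
struct TypePath { path: Path }
struct Path { segments: Vec<PathSegment> }
struct PathSegment { ident: String }

/// Peel the layers to collect (constant name, type name) pairs.
fn const_types(file: &File) -> Vec<(String, String)> {
    let mut out = Vec::new();
    for item in &file.items {                                   // Layer 0 -> 1
        if let Item::Const(c) = item {
            if let Type::Path(tp) = &c.ty {                     // Layer 2
                if let Some(seg) = tp.path.segments.last() {    // Layers 3 -> 4
                    out.push((c.ident.clone(), seg.ident.clone())); // Layer 5
                }
            }
        }
    }
    out
}
```

Each if let corresponds to stepping down one or two of the layers marked in the output, which is why even a one-line constant takes several levels of nesting to reach.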

Let's look at that File struct to understand what's going on.

File has only three fields to represent a Rust code file:

- shebang
- attrs
- items

For now, we won't worry about the first two fields.

We're only concerned with items, since it holds everything we need.

```rust
#[non_exhaustive]
pub enum Item {
    Const(ItemConst),
    Enum(ItemEnum),
    ExternCrate(ItemExternCrate),
    Fn(ItemFn),
    ForeignMod(ItemForeignMod),
    Impl(ItemImpl),
    Macro(ItemMacro),
    Mod(ItemMod),
    Static(ItemStatic),
    Struct(ItemStruct),
    Trait(ItemTrait),
    TraitAlias(ItemTraitAlias),
    Type(ItemType),
    Union(ItemUnion),
    Use(ItemUse),
    Verbatim(TokenStream),
}
```

Item has 16 different variants.

Since we defined a constant, syn represented it with the Item::Const variant.

Item::Const wraps an ItemConst struct, which looks like this:

```rust
pub struct ItemConst {
    pub attrs: Vec<Attribute>,
    pub vis: Visibility,
    pub const_token: Const,
    pub ident: Ident,
    pub generics: Generics,
    pub colon_token: Colon,
    pub ty: Box<Type>,
    pub eq_token: Eq,
    pub expr: Box<Expr>,
    pub semi_token: Semi,
}
```

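That means we have to handle every field of ItemConst, even for our simple constant definition.

In total, our one-line definition is split into 8 parts (attrs and generics are empty here):

- pub -> vis
- const -> const_token
- AF_UNSPEC -> ident
- : -> colon_token
- ::c_int -> ty
- = -> eq_token
- 0 -> expr
- ; -> semi_token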

Traversing an AST is similar to peeling back the layers of an onion.

The deeper you go into the AST, the more you have to handle.

Fortunately, Rust makes this manageable with its enums and pattern matching.
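As a taste of what that looks like, here's a hypothetical sketch where a single nested pattern reaches through several layers at once. It uses stand-in types rather than syn's, and it is not OCrab's actual code.

```rust
// Hypothetical, trimmed-down mirrors of a few syn types (not the real API).
#[allow(dead_code)]
enum Lit { Int(i64), Str(String) }
#[allow(dead_code)]
enum Expr { Lit(Lit), Other }
struct ItemConst { ident: String, expr: Expr }
#[allow(dead_code)]
enum Item { Const(ItemConst), Fn(String), Struct(String) }

/// Translate one item into an OCaml binding, if this sketch knows how.
fn translate(item: &Item) -> Option<String> {
    match item {
        // One nested pattern peels Item -> ItemConst -> Expr -> Lit in a single step.
        Item::Const(ItemConst { ident, expr: Expr::Lit(Lit::Int(n)) }) => {
            // Lowercase the name (OCaml values can't start with an uppercase letter)
            // and let OCaml's type inference work out that the value is an int.
            Some(format!("let {} = {}", ident.to_lowercase(), n))
        }
        // Anything else is unsupported here. The real syn::Item is
        // #[non_exhaustive], so a match on it needs a wildcard arm anyway.
        _ => None,
    }
}
```

The wildcard arm is also where a real translator would report or skip items it doesn't support yet.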

For now, I'm going to leave the newsletter here.

In the next issue, we'll go over the code to show how OCrab translates Rust into OCaml code.

Let me know if this newsletter was too short, too long, or just right!

If you have any ideas for future issues, please let me know!

Thanks for reading!

- Glitchbyte