(by zoë)

or: a case for creating technologies that work for human beings rather than simply hyperscaling and refining the terrible shit we already do

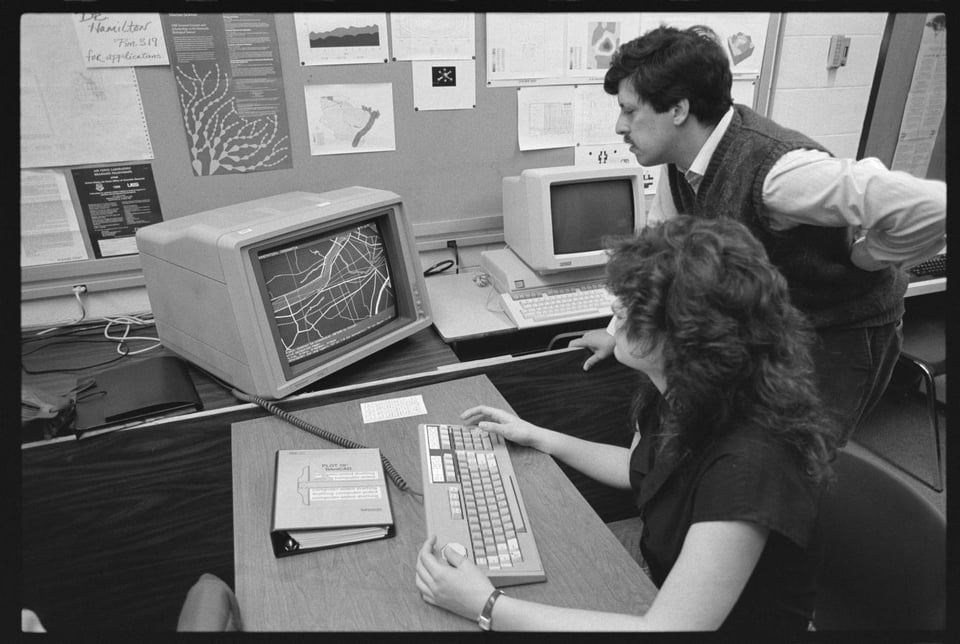

After my uncle's coal mine closed, he got a job working at a rural telecom company, which is how I ended up with an Internet connection in 1996. My mom and I had just moved to a new house and she had him install the dial-up modem in her "home office", where the Intel PC running Windows 3.1 lived. I had already had the computer for a few years before we connected it to the Internet, so I knew how to use it and was interested to see what I would find online. I remember a conversation with my father about what Netscape Navigator was.

One of the first things I remember doing is trying to find information about the Spice Girls. I ended up in a Spice Girls chat room, where I saw my first printed swear word when somebody typed "Spice suck ass" in the chat. Terrified, I closed the window, because I was six years old. I wasn't sure what would happen if someone knew I saw the word "ass" on the computer. Would I get in trouble? Would someone tell the police?

I remember this as the moment when I suddenly, intuitively understood that the Internet was a social space. I was also presented with a choice: do I tell someone what I saw, and that it made me uncomfortable? What would happen? Little girls who said the word "ass" at my school would be made to stay inside for recess. I knew to say "suck ass" was a terrible thing. It also alarmed and concerned me that anyone could say anything so untoward about the pretty women who sang "Wannabe." But I don't think I said anything to anyone. I just have been carrying this memory for almost thirty years, not realizing until more recently how precious it actually is.

You see – thirty years online is a long time. Our boundaries with devices were better back then, and very few of my experiences were then mediated through the Internet. As all elder millennials remember nostalgically, the networked computer used to only exist in its own room or space in our homes. We used to have to make choices about when and how to engage with it, and how to deal with the inherent social complexities of networked communication. Now, much of the space we engage in is fully automated and curated and surveilled and managed. But there is no automation for how to handle difficult, formative social situations or friction. You have to try things, you have to make choices. Sometimes you make a choice to not do something, to disengage from someone or from a task. You make a choice to stop. You make the choice to take a break, to go get a coffee, to send a message to a friend instead. And it is essential that we have the opportunities to make those types of choices – and importantly to create spaces where young people, or anyone who is engaged in a process of self-actualization, can make mistakes, fuck up, get support, and learn what good boundaries look like. Fully automated, mediated spaces deny us all those opportunities.

The process of denying those opportunities began in more specific spaces – for example, military targeting and missile guidance – and its spread was relatively slow. But it feels to me that it hit us all at once as we began to use this mediated space for literally everything and to carry it with us all the time in the form of a smartphone. The transformative nature of this is, I think, more seismic than most people realize. The resulting moral panic about devices, age verification, content, and the need for automations to manage the mediated space is predictable because the forms and functions of these technologies have not been considered holistically as the result of human choices.

It turns out that having good boundaries with the networked computer was completely a fluke of its contemporary limitations. Nobody intended us to have a deeply personal, well-considered, thoughtful relationship with our devices. I'm not saying that trying to maintain these boundaries makes us more virtuous. But it should raise deep, existential concern that our entire lives are wrapped up in the trajectory of this crucible, and that the question of our boundaries with it is presented as mere individual lifestyle choice rather than the consequence of a structural reality.

I say crucible because everything has been fully transmuted by the networked computer and, curiously, its ubiquity has not made it better understood, nor has it made anyone more comfortable with it, really – even and perhaps especially those of us who can't really imagine our lives without it. The transformational nature of new technologies is well-documented throughout history, and people love to make analogies about the Internet and the printing press. But I think this type of pop history really undersells how humans are the ones who create and propagate technologies, that each technology and its implementation are the result of human choices, not simply inertia that could not be stopped. Not that inertia is irrelevant – I have certainly had the experience of actively deciding to do something and having it suddenly feel like it has already gotten away from me, that it was happening to someone else. But I think in the historical sense, humanity tends to dissociate from its structural choices, and we mistake this for inertia. Much like you or I feel outside of our bodies when something traumatic is happening or when we are remembering it, I don't think we have integrated our understanding of our recent history with a material, structural appraisal of how we have implemented and propagated computer technology. The legacy of the computer itself and the networked computer is undeniably soaked in blood. But we choose to elide this at our peril. And it is a choice.

Though that legacy of death and destruction is not unique in human history, we really have excelled at creating new and innovative types of mass violence, including psychological violence, with the networked computer. We get to experience everything that is happening on Earth if we want to, in real time. Surely everyone has thought that they lived in an interesting, historically unique, definitive time – and of course they did, and we do. But the immediate global nature of recording history – its scalability – necessarily arises with telecommunications and the networked computer. Without it, none of this would be possible – and this type of immediacy and automation arose, primarily, out of an arms race.

Charles Babbage discovered that you could do a lot of math really, really quickly with a machine all the way back in 1822. It was a little over 100 years later that the Nazis discovered their own depraved fetish of doing a lot of math really, really quickly – to painstakingly keep records of a genocide (thanks to contracts with IBM to use their mechanical systems). Much of what we now know to be a packet-switching network – the Internet – is owed solely to government military research during the Cold War. This isn't to say that these technologies would not have come to pass in a different timeline, but this is the timeline we have. Military organizations, acting with imperialist and exterminationist goals, invested untold amounts of money in these technologies and ensured they were captured to fulfill their specific needs. They did not grow and develop or "catch on" until they were captured and propagated for those needs. And it happens that those needs were, specifically, in service to capitalist accumulation and anticommunism. (Never forget that Henry Kissinger falsified computer reports of the bombs that were dropped on Cambodia during the Vietnam War – and they were supposed to be unassailable, because they were computer reports.) Anything that we got out of these technologies (fulfillment, education, arts, social relationships) was an unintended consequence.

This is to say nothing of the literal, material cost. The minerals required to make microprocessors and other computer parts all come from somewhere. The parts have to be manufactured, the devices assembled. Did someone hand-dig the cobalt in my phone's battery out of the ground? A child, perhaps? Most of these devices only last a few years before becoming e-waste, ending up in a landfill, lead and cadmium leaching into the water supply, the smell of burning electrical wire and plastic wafting through the air. Cancers grow and babies are stillborn, but these are problems that so-called developed countries expect to see in sub-Saharan Africa, for example. Or, say, Memphis, Tennessee. What the press calls "AI data centers" are massive warehouses of GPUs, doing what computers have always done – execute effective procedures. Except they need to do a lot of them. If someone came up to you and said:

"We created this machine, which can recognize mathematical patterns at scale, to do a nonconsensual pattern recognition experiment on the entire planet. The thing is, people have used computers and written and visual language a lot, and we now have a really big data set that we can train the computer on to kind of have a pretend conversation with you and also make algorithmic guesses as to what is actually true in reality. Most of the time, it doesn't really work, and people don't like it. When people do like it, it tends to drive them to paranoid delusions and suicide, or at least decrease their cognitive functioning. One thing this computer program does really well is create unsettling images and encourage vulnerable people to isolate themselves and commit suicide."

If they said that – you probably wouldn't respond, "I absolutely think that this machine should be running 24/7 and we should devote all of the planet's fragile resources to operating this machine, and would like to help you build giant warehouses that pollute the environment to make sure that it can operate all the time." But the value proposition is something much different: that of efficiency and of freedom from the burden of being alive. To be alive is to experience friction – but automations and mediations reduce that friction, often prior to the point that a human end-user operator has realized that a decision is being made.

Ultimately, the United States is largely to blame. Is it any wonder that the country which birthed the silicon transistor, always willing to perpetuate and enable countless deaths and unending carnage under the banner of its national myths, should disgrace the Earth's gifts in this way? A little over 200 years since Charles Babbage saw the programmatic capabilities of the loom, the US tech industry has quite literally made society insane trying to come up with new extractive use cases for "lots of math really, really quickly." Mathematics is not descriptive or qualitative – its goals are representational. But in the case of the microprocessor and the networked computer, capitalism, in its desperation, has made a value proposition solely out of the scale at which the machine can do math – without any concern for whether or not that scalability results in a public good. More data, more math, is always better, even if that data represents bombs and recorded private phone calls and the bodies of dead or abused children, even if the hardware required to make these calculations is destroying the environment and making people miserable and isolating them from creative processes that enrich their souls.

Any theoretical project to improve our relationship to the networked computer is overwhelming in its scope, because the networked computer was not created or propagated to be a public good, and its capabilities have been effectively captured since its inception by the requirements of imperial, capitalist accumulation. There are real human beings in charge of this accumulation – and their job is to identify frontiers from which they can extract and enrich themselves. The networked computer has provided them with one such frontier. Mass surveillance and data collection, "artificial intelligence" (which is really, again, just a lot of math, really, really fast, and yet not fast enough for them somehow), and the erosion of our sense of safety and security in public space are all about the same thing. They are keeping that frontier open with a rib spreader and every gory thing about us is exposed and for sale.

The networked computer obfuscates from most people that this is happening, because it's a perfect structural representation of an ideology. Its forms and processes are malleable, but wildly codependent (which insulates them from transformative structural changes), and largely invisible to the general public. As Joseph Weizenbaum wrote:

> Our society's growing reliance on computer systems that were initially intended to "help" people make analyses and decisions, but which have long since both surpassed the understanding of their users and become indispensable to them, is a very serious development. It has two important consequences. First, decisions are made with the aid of, and sometimes entirely by, computers whose programs no one any longer knows explicitly or understands. Hence no one can know the criteria or the rules on which such decisions are based. Second, the systems of rules and criteria that are embodied in such computer systems become immune to change, because, in the absence of a detailed understanding of the inner workings of a computer system, any substantial modification of it is very likely to render the whole system inoperative and possibly unrestorable. Such computer systems can therefore only grow. And their growth and the increasing reliance placed on them is accompanied by an increasing legitimation of their "knowledge base."

The networked computer's ability to structurally manage ideology through logical forms – as decided by human operators – has perhaps only become more pronounced since Weizenbaum published his book Computer Power and Human Reason, a staggering defense of human agency, in 1976. This is, in my view, mostly because the forms remain totally and utterly captured by capitalists, imperialists, and exterminationists, and every attempt to take those forms back from them, to change them into something more beneficial to the general public, as they are clearly capable of being, has been met with unholy tantrums and endless wailing. Unable to convince us that their complete capture of these forms is good, they reliably resort to creating moral panics.

Into the crucible go the two things that they have decided are true:

"AI" platforms are a requirement for technological and social advancement; you must use them to avoid being "left behind"; they must be inserted into everything and come what may, this will continue. These systems are irresponsible – in the sense that no one is responsible for them and they simply cannot be controlled. When an LLM tells a teenager how to construct a noose with which to hang himself (and he succeeds), when a graphic designer's bills are overdue because no one wants to pay for her thoughtful work, when an automated tool flags a trans person's SFW selfie as "sexually explicit" on social media – all of these are acceptable consequences for this promise of "advancement."

At the same time, the networked computer is an incredibly dangerous place, and its access must be wholly controlled. Age and identity verification should be required not just to access specific content, but to operate the device. This is to "protect children" from the dangers of the networked computer.

These twin assumptions essentially abdicate any responsibility for the state of public space online – or the "digital commons." This is a natural progression of enclosure, the process of legally "enclosing" land and space away from the public and into private ownership, which began in England in the Middle Ages and has reverberated throughout subsequent history.

For most of humanity's time on Earth, common space was where we interacted with each other. The mediation of space into something that is more often than not privatized (and surveilled and managed) is an incredibly recent development. That this mediation and privatization is not necessarily undertaken with the public good in mind is obvious when you see who benefits and who suffers, or even that suffering is considered an acceptable or even necessary outcome. There is a direct line from enclosure to, say, Gavin Newsom throwing out a homeless person's belongings while destroying an encampment. The same concept applies when a company demands an identity and age verification process to use an operating system on a networked device (and almost all devices today are networked). Without public space and commons where we all depend on each other for the success of the project – whether it's grazing lands, a city block, or a Discord server – we fall out of practice in making real-time decisions about our behaviors and responsibilities to each other. And thus we rely on those who operate these irresponsible and abstracted systems to make those decisions for us. The same systems can also, quite easily, be manipulated to create nonconsensual pornography of minors. They will also present false information, algorithmically surface hate speech, and expose your private data. Nobody has a choice.

From my perspective, this is a disastrous outcome, because it deprives us all of the opportunity to make decisions about our lived reality. Children, who must learn decision-making and risk assessment in order to become responsible adults, need to have these opportunities, and they also need to know that if something bad does happen to them online, they have a place to go and someone to talk to. Age verification systems and "artificial intelligence" are a signpost to them and to everyone that there is no mutual responsibility in these spaces anymore, and in fact mutual responsibility and the commons will be eradicated with prejudice because that would amount to a closing of the frontier. And despite diminishing returns, the frontier must remain open because a new one has not yet been discovered.

"Spice suck ass" was an ignoble beginning to my online life and there were many more such incidents – but also outstanding and beautiful things that happened to me online and made me, I think, into a relatively well-adjusted and empathetic adult. When I was about 12, I made friends with a 21-year-old college student through a forum, and we're still in touch today, over two decades later. There was nothing untoward about our friendship and he treated me like a little sister. I chatted with him about this recently on Bluesky, and he said that things are just different now, and so much worse. I certainly agree. I'm not trying to trade in nostalgia about how much better things were back then. The wheels of total capture by "irresponsible" systems were already in motion, and I certainly dealt with my fair share of cruel behavior online, whether it was from creeps on ICQ, LiveJournal rating communities, or Pittsburgh sportswriters. I had some bad times online, but I also had some very good ones.

Importantly, I also accessed a lot of information about technology, history, art, science, and culture that I might not have otherwise accessed, and talked about those topics with a wide variety of people from different backgrounds. In middle school I made a LiveJournal friend in Moscow; we sent each other packages in the mail with paper ephemera from our hometowns. I still text daily with people I met through sports Twitter, and in fact I married someone I met on Twitter. The digital commons was an important space for me as a kid and remains an important space for me as an adult – and I want to believe in a future where it can continue to be an important space for people of all ages.

The current standard – that what is happening to the digital commons is inevitable, and you will have to show your papers to access the slop hose, and the slop hose is required under the law – demands that we cede our minds and bodies and all the public space we inhabit as fully mediated, extractive frontiers. These spaces, then, are subject to the requirements of extraction, and we lose agency over them completely. Our spaces do not have to have safety or social norms around safety if minors aren't supposed to be there in the first place, because once you're 18 in the United States you no longer have the nominal right to it. But, if you're under 18, "safety" doesn't really mean anything in practice at scale except exclusion and censorship, practices which can be automated, regardless of the effectiveness of that automation.

It feels like an unseen hand places these technologies in our pockets and ensures that the networked computer is a requirement for participation in modern life – to not have one is to be disconnected and disadvantaged. And I do believe that access to a networked computer as a communications medium is important, something that everyone should have access to depending on their choice and need. But that requirement also begets a massive responsibility to the commons to ensure that common space serves the common good. Without common literacy and understanding of what this technological mediation does, and how it is shaped by its administrators' policy and practice, it really resembles nothing but an echoing, screaming void, which has only deepened and widened the longer it has been allowed to operate in these conditions.

The choices about what to put in the crucible were not made by the public commons. As Tara Tarakiyee wrote in their excellent essay "On the Enshittification of Audre Lorde" (linked at the bottom of this piece):

> The enshittification story, at its most powerful, describes a process by which platforms that once served users well came to exploit them. But this framing assumes a prior state of genuine service, a golden age of the open internet, that was for many people never particularly golden. The early internet was structured around the assumptions of its architects: predominantly white, male, Western, educated, and abled. The exclusions weren't mere flaws; they were perhaps, if we're being generous, deeply structural tendencies that were never seriously interrogated.

The time for serious interrogation has long since passed, and the digital commons are in urgent need of community stewardship and care. We can make individual interventions to limit our screen time and our doomscrolling, and we can even make systemic interventions – poorly-thought-out age verification laws prove it can be done. But cudgels like age verification do not address the fact that harmful environments are structurally required by the current forms of the networked computer and its platforms, and their ultimate effect is to increase inequity, isolation, and surveillance. This is, of course, by design, and it is extremely important that we do not buy the value proposition that governments and technology companies present to us as they encroach upon what little commons we have left. They promise frictionless experiences, endless growth, and endless capability, without showing their work and without engaging with us as members of the commons.

What memory we might have of a functional digital commons is coincidental, a moment before the companies in charge of these mediations fully understood what they could gain from enclosure and from colonization. But I see the liberation of the digital commons as an urgent, necessary project that goes hand-in-hand with larger visions for a liberated society – and one that is easy to overlook when you're in the crucible all day, every day. We can create our own mediations, take them offline, and choose different forms.

Reading list:

Computer Power and Human Reason by Joseph Weizenbaum

Palo Alto: A History of California, Capitalism, and the World by Malcolm Harris

"AI got the blame for the Iran school bombing. The truth is far more worrying" by Kevin T Baker

"On The Enshittification of Audre Lorde: 'The Master's Tools' in Tech Discourse" by Tara Tarakiyee

Peer to Peer: The Commons Manifesto by Michel Bauwens, Vasilis Kostakis, and Alex Pazaitis

You just read issue #9 of compatibility issues.