Programming Languages and AI, Jevons Paradox, Agent Skills

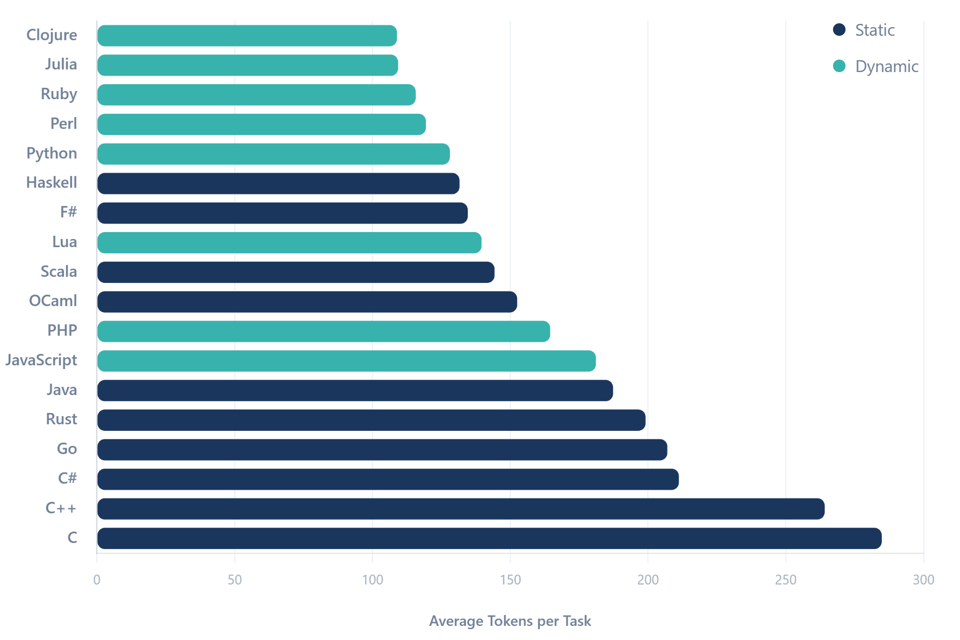

Which Programming Languages Are Best For AI

It seems that programming languages are becoming less relevant because of AI. Before LLMs, even the style and vibe of a programming language was a deciding factor when choosing, for example, a library. Some languages are considered ugly (PHP), beautiful (Ruby), or very concise (functional languages like Haskell or Clojure). Now the specific choice of language, other factors being equal, doesn’t really matter anymore because most of the syntax can be written and read by LLMs. But fundamentally, LLMs are still working with different programming languages, so are there any advantages to working with, say, Python instead of C? In his article Which programming languages are most token-efficient?, Martin Alderson tried to quantify this from one perspective: which programming languages take up the least context for the same tasks? He found that overall:

Dynamically typed languages take up fewer tokens than statically typed languages (reason: there are no type annotations to write).

Higher-level languages are more token-efficient than lower-level systems languages.
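To get a feeling for what "token-efficient" means, here is a minimal Python sketch that compares the same small function written in Python and in C. It is not the method from the article: `approx_token_count` is a hypothetical helper that splits code into identifiers, numbers, and punctuation characters, which is only a rough stand-in for a real LLM tokenizer. The two snippets are also my own illustration, so treat the numbers as a direction, not a measurement.

```python
import re

# Crude stand-in for an LLM tokenizer (an assumption for illustration):
# identifiers, numbers, and single punctuation characters each count as one token.
# Real BPE tokenizers will produce different absolute numbers.
TOKEN_PATTERN = re.compile(r"[A-Za-z_]\w*|\d+|[^\w\s]")


def approx_token_count(source: str) -> int:
    """Return a rough token count for a piece of source code."""
    return len(TOKEN_PATTERN.findall(source))


# The same task (summing a list of integers) in two languages.
PYTHON_SNIPPET = """
def total(prices):
    result = 0
    for price in prices:
        result += price
    return result
"""

C_SNIPPET = """
int total(const int *prices, size_t count) {
    int sum = 0;
    for (size_t i = 0; i < count; i++) {
        sum += prices[i];
    }
    return sum;
}
"""

for name, snippet in [("Python", PYTHON_SNIPPET), ("C", C_SNIPPET)]:
    print(f"{name}: ~{approx_token_count(snippet)} tokens")
```

In this toy comparison the C version needs more tokens, mostly because of the type annotations, the explicit index loop, and the braces and semicolons. For real numbers you would run the tokenizer of the model you actually use.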

Jevons Paradox and AI

Jevons Paradox is an observation from 1865 that increased efficiency in coal usage (the Watt steam engine used less coal to generate the same power) did not decrease, but actually increased, the demand for coal. Because each process needed less coal, it suddenly became worthwhile to use coal in more processes where it had previously been too expensive.

Efficiency improvements don't reduce demand - they reveal latent demand that was previously uneconomic to address

Quote by Addy Osmani [1]

Many people have now published their thoughts on why they think Jevons Paradox will apply to knowledge work, especially software development. Their theory is basically: because software will be cheaper to produce, we will write much more of it. Looking at the history of software, this seems to hold up:

When moving from lower-level programming languages (assembly) to higher-level languages (C, C++, Java, Python), programmers became much more productive, but we also wrote much more software and employed more engineers.

Over the last 15 years, it has become easier to build web apps, native apps, etc. In addition to having more apps and more engineers writing them, we also got a bigger software ecosystem (monitoring tools, hosting platforms, debugging and testing tools). So every tool also created demand for more tools.

The whole theory sounds nice and hopeful, and I personally hope it comes true. But who knows.

My Cheap Vibecoding Workflow in the Past Week

I am currently building a small recipe tracker for me and my girlfriend using Ruby on Rails. But of course, most of the code is written by Claude Code on the $20 plan. Recently I discovered another good coding agent: Amp Code. It is also command-line based and includes $10 of credits for Claude Opus 4.5/GPT-5 per day. My current workflow has two steps:

Planning and developing the code in Claude Code until I think it’s ready, but leaving it uncommitted.

Hopping over to Amp Code and using GPT-5 for the code review and security analysis. For this, I use their Oracle tool (basically just a way of calling GPT-5 specifically for code analysis and review).

This has been surprisingly good, especially because (1) GPT-5.2 seems to be stronger in cybersecurity-related topics, and (2) asking a different LLM often brings up some new perspectives.

What are Agent Skills in Claude Code and Codex?

You have probably already experienced that even with the best models, you still need really good context and prompts to get the best results. Modern agents like Claude Code are tackling this problem more and more, but sometimes you still end up copying and pasting documentation or writing a lot of explanations. Agent Skills are a new open format that aims to help with this. They work in most assistants, like Claude Code, Gemini, Code, etc.

A skill is basically just a Markdown (plain text) file, potentially with a few attachments or additional scripts. Once you (or an AI) create and install a skill, the agent can automatically access it. This is really helpful for knowledge that is specific to your project or use case, for example:

Specific domain knowledge.

Repeatable workflows with multiple steps, for example deploying a project.

Theming/style of your website and app.

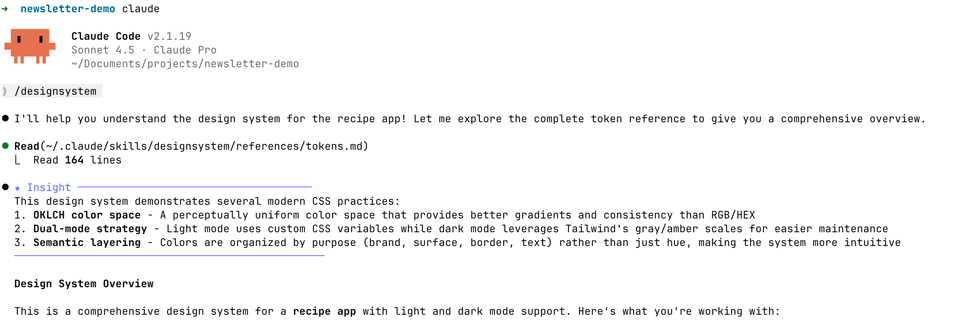

Skills are nice because you can either install pre-made skills by other people or create your own. For example, I have created a skill called /designsystem for my recipe tracker, which includes all the colors, fonts, and other design decisions. When I create a new view for the app, I can just write /designsystem so that the context is loaded, and Claude Code can create a design that fits the rest of the app (mostly).

For creating skills yourself, I recommend first installing the demo skills by Anthropic. They include a skill-creator skill that is very helpful for creating custom ones.
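To make the "just a Markdown file" idea more concrete, here is a minimal Python sketch that writes such a file by hand. It assumes the common layout of one folder per skill containing a SKILL.md with a small frontmatter block (name and description) followed by the instructions. The helper names `render_skill` and `write_skill`, the design values, and the `.claude/skills` path in the comment are my assumptions for illustration, not taken from my actual skill.

```python
from pathlib import Path


def render_skill(name: str, description: str, body: str) -> str:
    """Build the text of a skill file: frontmatter first, then Markdown instructions."""
    # Keep name and description simple (no colons or line breaks),
    # because this sketch does no YAML escaping.
    frontmatter = f"---\nname: {name}\ndescription: {description}\n---\n\n"
    return frontmatter + body


def write_skill(skills_dir: Path, name: str, description: str, body: str) -> Path:
    """Write <skills_dir>/<name>/SKILL.md and return its path."""
    skill_dir = skills_dir / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    skill_file = skill_dir / "SKILL.md"
    skill_file.write_text(render_skill(name, description, body), encoding="utf-8")
    return skill_file


# Hypothetical content for a design system skill (values are made up).
DESIGN_BODY = """# Design system

- Primary color: #2F6F4E
- Font: Inter, 16px base size
- Buttons: rounded corners, no drop shadows
"""

if __name__ == "__main__":
    # Preview the file; to install it for a project, you could call e.g.
    # write_skill(Path(".claude/skills"), ...) instead (path is an assumption).
    print(render_skill(
        "designsystem",
        "Colors, fonts, and layout rules for the recipe tracker",
        DESIGN_BODY,
    ))
```

The description is the part the agent sees up front, so it is worth writing it as a clear statement of when the skill should be used.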

Aside: Skills are supposed to be loaded automatically (based on their description). I have not found this to work; Opus just doesn’t seem to want to load them. Therefore, I manually type in the commands of the skills I want to use in a given session.

Other Articles, Resources, Tweets

If you speak German: Thorsten Ball, a co-founder of Amp Code, recently did an interview/podcast with Fraunhofer: Deep Dive mit Thorsten Ball über die Zukunft der Softwareentwicklung. They talk not only about AI, but also about Europe, Germany, startups, and the career prospects of young software developers.

Many big open source projects are having a crisis right now. They are getting many pull requests every day that contain low-quality AI code. For example, TLDRAW (a web-based whiteboard) is now automatically closing pull requests from external contributors.

When code was hard to write and low-effort work was easy to identify, it was worth the cost to review the good stuff. If code is easy to write and bad work is virtually indistinguishable from good, then the value of external contribution is probably less than zero.

If that's the case, which I'm starting to think it is, then it's better to limit community contribution to the places it still matters: reporting, discussion, perspective, and care. Don't worry about the code, I can push the button myself.

More details in Stay away from my trash!

ai never responds with "the code is fighting back against what we're trying to do, maybe we need to step back and figure out how to refactor things to make this easier"

Tweet by @thdxr, developer of OpenCode

This is a problem I also see more and more in my sessions. I think a proper plan/spec helps, but I have not figured it out completely. Maybe the problem is more inherent to LLMs than tooling and prompting can fix.

[1] You can read the original post on Twitter: Every time we’ve made it easier to write software… - Addy Osmani