The Weight of Avoidance

"Exploring the gap in measuring AI's effectiveness and advocating for honest benchmarks in AI capability."

One strategic signal 🔭

One (human) prompt 🧠

One subtraction opportunity ➖

Created by Sam Rogers · Powered by Snap Synapse

Freely available on Substack, LinkedIn, and our mailing list.

New issue every Monday.

🔭 Signal: The Measurement Gap

Organizations are spending more on AI in 2026 than ever. Budgets approved. Pilots running. Agents deploying. And yet the gap between perceived AI capability and actual AI capability is widening, not narrowing.

Almost nobody is measuring the thing that matters most: how well humans and AI actually work together.

Here's what I keep seeing firsthand. When people take a real behavioral assessment of their AI collaboration skills, two things happen:

- People are surprised and disappointed that their score is lower than expected.

- They don't want to share it. Not because the assessment is broken, but because their expectations were built on filtered self-reports instead of evidence.

Everyone assumed they'd score higher because everyone around them also assumed they'd score higher.

It's a collective hallucination with budget implications.

The people avoiding honest measurement aren't just uncomfortable. They're professionally exposed. When someone else does measure honestly, the contrast will be visible. And that someone is increasingly the person who gets the role, the budget, or the promotion.

The grace period for figuring this out quietly has expired. Filtered readiness is now a liability.

🧠 Strategic (Human) Prompt: The Number You're Avoiding

Instead of asking: How ready are we for AI?

Ask: What would we learn if we measured honestly?

Most AI readiness questions invite the answer people want to give. Try these instead:

- Where is your team's AI confidence highest, and has anyone verified it yet?

- What's one AI capability claim in your org that would embarrass someone if tested?

- If your competitor measured this honestly and you didn't, what would that cost you?

➖ Strategic Subtraction: Whack-a-Metric

Find one AI capability measure in your organization built on surveys, self-assessments, or unverified usage data. Whack it, quick, before the next one pops up!

Replace it with something behavioral: a task-based test, a workflow audit, a scored interaction. Anything that shows what people do rather than what they believe they do. I tend to recommend PAICE, but you can also do this on your own: take any real workflow, run it with 50 people in your org, and you'll get useful results. That's what Anthropic did just last week to measure how AI affects skill formation. It doesn't have to be hard.

The most dangerous metric in any org is the one everyone agrees on but nobody verified. Those numbers don't inform decisions. They decorate them.

If you can't replace it yet, at least label it honestly: "self-reported, unverified." Just watch how fast the conversation changes.

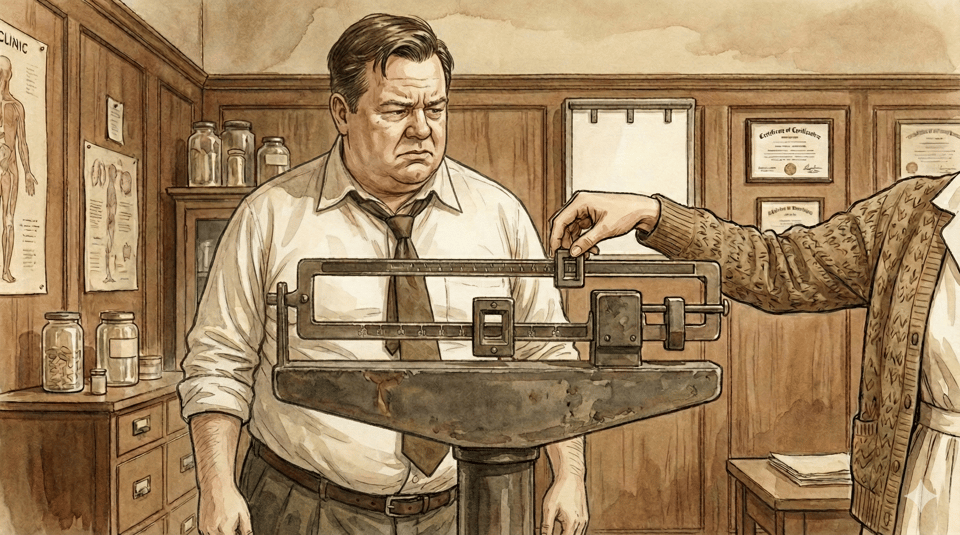

⚖️ Analogy of the Week: The Weigh-in

Almost everyone knows the feeling.

We avoid the scale because we already know the number won't match the story we've been telling ourselves. "I feel fine. My clothes still (mostly) fit." So we skip the weigh-in on the bathroom scale at home.

But the weight doesn't care whether we step on the scale or not. It's still there. It compounds. And when we finally do step on, at the doctor's office or because something doesn't fit at the worst possible moment, the number is far worse than it would have been if we'd just looked while it was still manageable.

That's what organizations are doing with AI capability measurement right now. Avoiding the scale doesn't change the weight. It just guarantees the reckoning arrives on someone else's timing instead of ours.

The solution isn't the scale. It's the healthy willingness to step on it before someone else weighs you.

🎵 Closing Notes

2026 will not reward the orgs with the most AI tools. It'll reward the ones honest enough to measure what's actually working, at the speed of AI. For more on this, read The Yes Problem, which Markus Bernhardt and I released last week.

Meanwhile, the impossible-to-ignore Moltbook launched last week: a social network exclusively for AI agents. Tens of thousands of bots are building communities, debating philosophy, and developing emergent culture. Funny: six months ago I was sharing Semantics Complete, a YouTube project retrocast from the future about exactly this. Guess it was six months too early. Welcome to February 2026, when nothing seems too early or too crazy anymore.

Until next time,

Sam Rogers

Unfiltered Measurement Advocate

Snap Synapse – from AI promise to AI practice

PAICE.work measures how humans and AI actually collaborate, not how they think they do. Ready to help your team/organization get real traction?