The Copilot Problem

An ancient failure mode AI is exposing everywhere

One strategic signal 🔭

One (human) prompt 🧠

One subtraction opportunity ➖

Created by Sam Rogers · Powered by Snap Synapse

Freely available on Substack, LinkedIn, and our mailing list.

New issue every Monday.

🔭 Signal: When Politeness Becomes a Hazard

A recent WSJ story about a physician and an AI tool has sparked debate about whether AI belongs in clinical settings. But the incident pattern it describes isn't new, and it isn't about AI.

A high-status professional acts alone. An informal peer-check gets bypassed. A single source substitutes for consultation. Validation happens late or not at all. The error looks "reasonable" in context.

Calling this "misuse" is insufficient, and calling it "system failure" without naming roles is also incomplete.

What broke here wasn't judgment. It was structure. An unverified answer was allowed to bypass the validation loops that medicine already depends on everywhere else. The tool may be new, but the failure mode is ancient.

AI does make this worse, certainly. But not because AI is uniquely dangerous. What it does here is collapse the buffer that once kept this kind of structural gap survivable. When tools are fast, fluent, and embedded in real workflows, delayed validation changes outcomes.

The question isn't whether AI should be trusted. It's why the system allowed a single source (any single source) to stand alone in a safety-critical decision.

Nothing here is exotic. And that's precisely the problem.

🧠 Strategic (Human) Prompt: Completion Criteria

Instead of asking "who made a mistake?" ask:

Did the system allow a single source to be sufficient?

If yes, the vulnerability existed before anyone touched a tool. The tool just found it faster.

This reframe matters because it shifts attention from blame to design. The practitioner isn't the failure point. The missing structure is.

➖ Subtraction: Default Trust

This week, consider subtracting one or more of the following:

The assumption that a single "human in the loop" prevents single-source failures

The belief that training changes behavior when time pressure is high

The habit of treating politeness and deference as neutral

The comfort of post-hoc accountability instead of structural prevention

If a high-stakes task can be completed alone, quickly, without contradiction, then eventually it will be. Not because practitioners are reckless, but because systems that reward throughput will find every gap in validation structure.

These assumptions feel protective, but in fact they're dangerous.

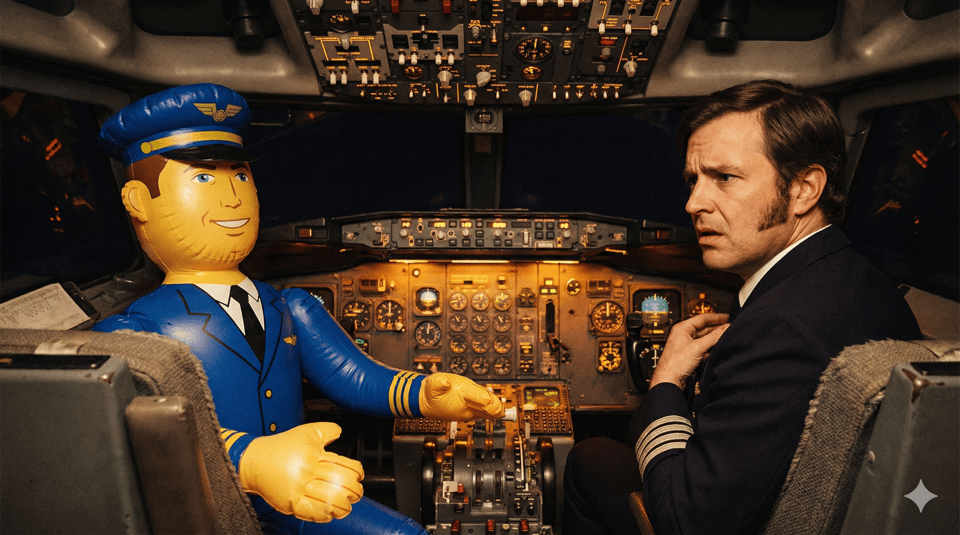

✈️ Analogy of the Week: Copilot Didn't Speak Up

Tenerife, Canary Islands, 1977.

Fog. Two 747s, same runway.

The KLM captain begins takeoff without clearance. His flight engineer questions it, but... tentatively. The captain dismisses him.

583 dead. Still the deadliest accident in aviation history, nearly 50 years later.

The inquiry didn't find a reckless pilot. It found a system where junior crew were trained to defer, and authority was allowed to complete actions unchallenged.

Aviation didn't fix this by telling copilots to be braver. It redesigned authority itself. Crew Resource Management made challenge structural. Checklists require two voices. Callouts require responses. Ever since, silence has been treated as a system failure, not a personality flaw.

The aviation rule changes didn't make people any more courageous. They made courage unnecessary by making silence structurally impossible.

That's the design question AI is forcing on every other industry now.

♬ Closing Notes

AI didn't create single-point-of-failure workflows. It just removed the human slack that made them survivable.

Trainings, warnings, professional norms, and post-hoc accountability only work when:

time pressure is low

tools are slow

authority is distributed

errors surface early

AI breaks all four of those conditions.

The debate over whether AI "belongs" in high-stakes settings misses the point. The structural gaps were already there. AI just operates fast enough to find them before humans can intervene.

The fix isn't better training or louder warnings. It's designing systems where no single source is ever sufficient, authority is intentionally incomplete, and validation is structural, not optional.
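For readers who think in code, here is one way that design rule might look as a completion gate. This is a minimal sketch, not a clinical or production design: the names `Opinion` and `can_complete`, the source labels, and the two-source threshold are all hypothetical, chosen only to illustrate "no single source is ever sufficient."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Opinion:
    source: str  # who or what produced the answer (hypothetical labels)
    answer: str  # the recommendation itself


def can_complete(opinions: list[Opinion], min_sources: int = 2) -> bool:
    """Return True only when enough distinct sources agree on one answer."""
    agreeing: dict[str, set[str]] = {}
    for op in opinions:
        # Group by answer; a set means one source repeating itself counts once.
        agreeing.setdefault(op.answer, set()).add(op.source)
    return any(len(sources) >= min_sources for sources in agreeing.values())


solo = [Opinion("ai_tool", "plan A")]
checked = [Opinion("ai_tool", "plan A"), Opinion("colleague", "plan A")]
disputed = [Opinion("ai_tool", "plan A"), Opinion("colleague", "plan B")]

print(can_complete(solo))      # False: a single source is never sufficient
print(can_complete(checked))   # True: two distinct sources agree
print(can_complete(disputed))  # False: disagreement blocks completion
```

The point of the sketch is that the task cannot finish until a second voice has answered. Nobody has to be brave for the check to happen.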

Until next week,

Sam Rogers

Structural Safety Advocate

Snap Synapse – from AI promise to AI practice

🎯 Measuring collaboration behaviors, not just individual capability: PAICE.work