Confidence & Calibration

Unpacking confidence saturation in AI, separating smarts from sound, and rewiring rewards.

One strategic signal 🔭

One (human) prompt 🧠

One subtraction opportunity ➖

Created by Sam Rogers · Powered by Snap Synapse

Freely available on Substack, LinkedIn, and our mailing list

New issue every Monday.

🔭 Signal: Confidence Saturation

For decades, confidence was a proxy for competence.

Then came AI, the machines that always sound sure.

Now confidence is practically ambient. Polished phrasing, instant citations, and synthetic eloquence flood every feed. We’ve never sounded smarter... or been less certain of anything.

Organizations built around poise and presentation are cracking under the pressure. When every intern and every chatbot can produce fluent, formatted answers, what actually counts as expertise?

There’s a measure confidence can’t fake: effectiveness. When it comes to AI, that often means:

- how clearly people and AI understand each other
- how quickly they recover from error
- how consistently they calibrate

Yesterday’s soft skills have become tomorrow’s system checks. That’s why I created PAICE.work: an objective behavioral standard that measures people+AI collaboration in the flow of work. This wasn’t scalable before. Now it is.

Maybe the next great leadership skill isn’t projecting certainty at all, but knowing when to doubt yourself just enough to learn even faster.

🧠 Strategic (Human) Prompt: Pomp & Intelligence

How do you separate sounding smart from being smart when machines can do both?

➖ Strategic Subtraction: Winning the Con Game

Stop rewarding confidence; start rewarding calibration.

Confidence isn’t the problem when someone’s right. It’s the mismatch between certainty and accuracy that breaks things.

If someone’s right 80% of the time and 80% confident, great! But when they’re only right half the time and still 80% confident? Predictable chaos.
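To make that arithmetic concrete, here is a minimal Python sketch. The function name `calibration_gap` and the ten-call sample data are my own illustrations, not a standard metric or anyone's official method. It simply subtracts observed accuracy from average stated confidence.

```python
from statistics import fmean


def calibration_gap(confidences, outcomes):
    """Average stated confidence minus observed accuracy.

    Hypothetical helper for illustration only.
    Positive means overconfident, negative means underconfident,
    and a value near zero means well calibrated.
    """
    if not confidences or len(confidences) != len(outcomes):
        raise ValueError("need one outcome per confidence, and at least one pair")
    mean_confidence = fmean(confidences)
    accuracy = sum(1 for correct in outcomes if correct) / len(outcomes)
    return mean_confidence - accuracy


# Illustrative data: ten calls, each made at 80% confidence.
calibrated = calibration_gap([0.8] * 10, [True] * 8 + [False] * 2)     # right 8 of 10
overconfident = calibration_gap([0.8] * 10, [True] * 5 + [False] * 5)  # right 5 of 10

print(f"calibrated gap:    {calibrated:+.2f}")
print(f"overconfident gap: {overconfident:+.2f}")
```

The first case comes out at roughly zero. The second comes out at roughly 0.3, a 30-point gap between how sure someone sounds and how often they are right. A single average like this is a coarse check; it only works if confidence is written down before the outcome is known.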

Check your own systems. Where is confidence being mistaken for performance? If you can’t see calibration, it probably isn’t happening.

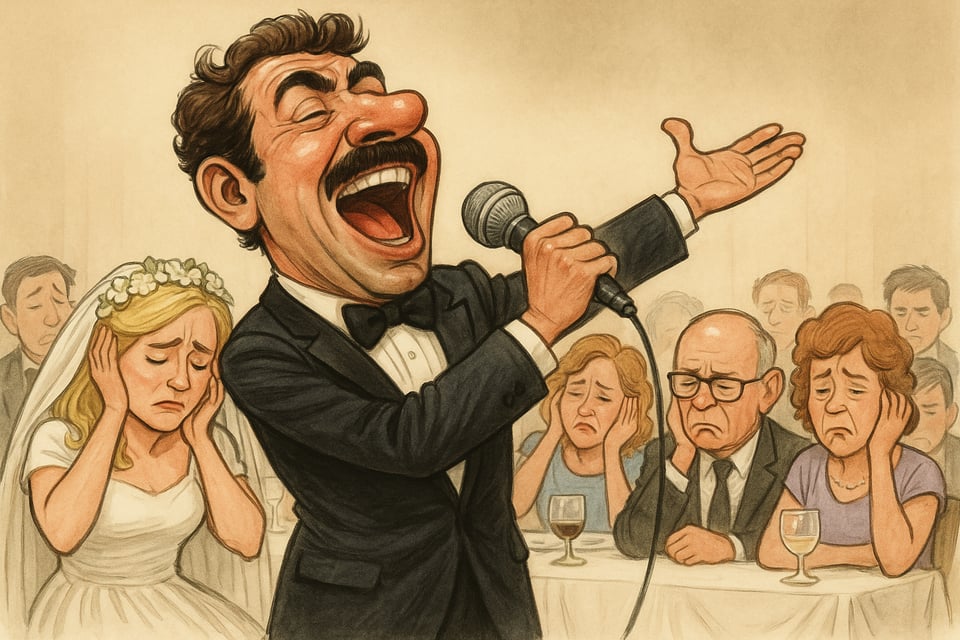

🎤 Analogy of the Week: Confidently Off Key

We’ve all seen it: somebody smiles radiantly and gets up to belt out their tune... badly. It’s not that they lack the pipes; they lack the ear to pull it off. Confidence fools a few in the crowd, but you know the sound is off.

AI does the same thing every day. It hits every note with conviction but sometimes in the wrong key. The difference between performance and precision is calibration.

🎵 Closing Notes

Confidence used to be a signal of mastery. Now it’s a filter effect applied to average outputs.

If we want trustworthy collaboration between people and AI, we’ll have to separate real signal from the noise floor of confidence.

Quick plug for Hubbard Decision Research’s Team Calibrator. No affiliation, just a fan. Their calibration work shaped my own thinking on measurement, probability, and what “effective” really means. I also had Douglas Hubbard on my podcast a few years back, and the interview still holds up.

And of course, check out PAICE.work if you’re ready to calibrate AI collaboration for yourself or your team. Because those most confident in their AI skills might be the ones making downstream teams wince. An objective score unplugs their mic.

Until next time,

Sam Rogers

Chief Calibrationist

Snap Synapse – from AI promise to AI practice

📅 Book a meeting and let's turn overconfidence into accuracy.