Success at the PAICE of Work

Unveiling PAICE, a free tool to measure People+AI Collaboration Effectiveness

One strategic signal 🔭

One (human) prompt 🧠

One subtraction opportunity ➖

Created by Sam Rogers · Powered by Snap Synapse

Freely available on Substack, LinkedIn, and our mailing list.

New issue every Monday.

Yeah, a lot happened in the last week (again), especially over in Anthropic-land, where they announced Skills, Web, MS365 integration, and Haiku 4.5 in the last six days while you weren't looking.

But this issue is all about one thing: our new release!

🔭 Signal: The PAICE Research Preview Is Live

Teams can’t answer the baseline question: How good are we at collaborating with AI?

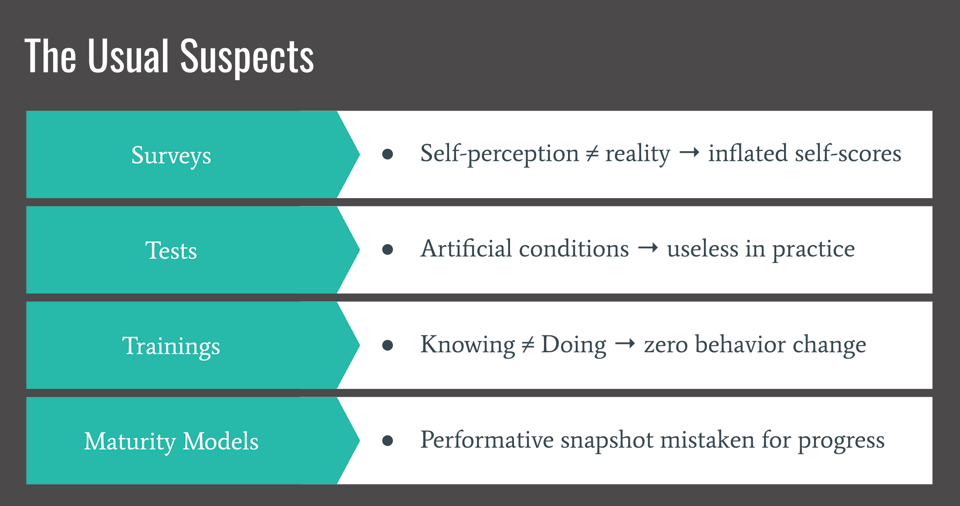

They can track adoption, spend, and pilot counts, but how effectively people and systems actually work together? Not so much. Surveys and knowledge tests make poor proxies for this.

That’s why I built PAICE.

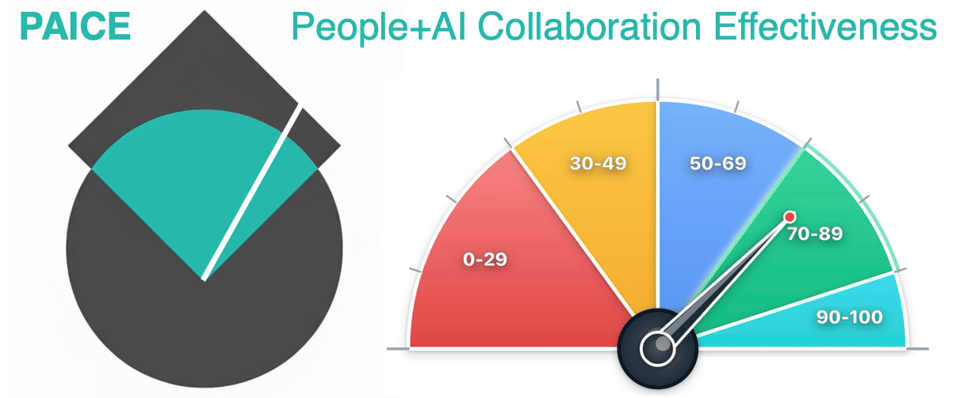

The PAICE framework (People + AI Collaboration Effectiveness) measures how well humans and AI perform together, including trust, skill, and adaptability. Think of it as a credit score for AI collaboration.

The Research Preview is now open at PAICE.work.

This limited release invites early participants to interact with AI via a regular chatbot experience, receive their own PAICE score, and contribute to refining the next phase of AI capability measurement. All for free.

🧠 Strategic (Human) Prompt:

If you could measure collaboration quality, not just output, what would you stop doing first?

How might this change your AI Transformation projects and tool rollouts?

➖ Strategic Subtraction:

Stop pretending that “using AI” equals being capable with AI. Consider putting this reframe in its place:

Most frameworks define “responsible AI” as a compliance exercise.

PAICE defines it as a capability exercise.

Consider how to visibly reduce risk by giving AI power tools only to those who have shown they can handle them safely. PAICE gives you a defensible way to prove who’s safe to scale.

🎸 Analogy of the Week: Your Favorite Band

AI is the rhythm section. The soloist can wail away all day, but without shared timing and an agreed key, it’s just noise.

Great musicians don’t try to control each other; they agree on the basics, then respond and play with everyone else in the band. PAICE scores the rhythm of that response, the interplay between human intention and machine adaptation.

🎵 Closing Notes

Every governance framework, from NIST to ISO/IEC 42001 to California's new SB 53, requires “demonstrated competency.” Yet no standard has existed to show what that means. We all know that how well humans and AI actually work together matters, but we haven't had the measures to match.

PAICE changes that. It’s not another dashboard or vanity metric. It’s measurement infrastructure, the foundation for better adoption, safer deployment, and smarter governance.

Join the Research Preview at PAICE.work, get your score, and help us build the future of measurable AI collaboration.

It's free right now to encourage your participation. Early contributors will help calibrate the baseline for future scoring tiers.

Until next time,

Sam Rogers

PAICE Setter

Snap Synapse – from AI promise to AI practice