From Warning Signs to Working Signals

Exploring how UX can solve AI governance issues, blurring lines of creativity & compliance, & rethinking invisible governance!

One signal 🔭

One prompt 🧠

One subtraction opportunity ➖

Created by Sam Rogers · Powered by Snap Synapse

🔭 Signal: Governance is Now a UX Problem

AI is blowing past the usual internal governance frameworks. Not because the rules are wrong, but because the interface for following them is missing.

Employees are not so much ignoring policies as failing to notice when they are breaking them.

What I’m seeing:

Many orgs try to govern LLM usage like software licensing. But AI tools behave more like colleagues who can code.

You do not just “install” that. You collaborate with it.

And policies written for IT or Legal do not help employees navigate ethical use when the interface is a chatbox and the risk is downstream.

Why it matters:

If the path to good judgment is unclear:

Some will choose known and slow out of sheer fear. Safer, but not necessarily better.

Most will choose fast over safe. Especially under deadline pressure.

🧠 Strategic Human Prompt

How visible are your guardrails to someone just trying to do their job?

Or...

Where is the line between creativity and compliance getting blurrier than you would like?

➖ Suggested Subtraction

Cut one piece of essentially invisible governance.

If it requires memorization, reading a multi-page PDF, or digging through that cruft-laden intranet, then it ain't gonna happen. If the default is “ask Legal first,” that is not governance. It is wishful thinking.

Replace it with just‑in‑time clarity. Even one sentence embedded in the tool’s flow can make the right choice obvious.

Three clear examples:

Expense report policy: “Refer to Finance Handbook section 4.3.2 for approved meal expense guidelines.” ➡ In the expense tool, next to the meal entry field: “Meals must be under $50/day unless pre‑approved.”

Customer data handling: “See the Data Privacy PDF on the intranet.” ➡ In the CRM, when exporting: “You are exporting customer contact info. Only share with approved vendors.”

AI content review: “Check our generative AI policy in the internal wiki.” ➡ In the chat tool, after generating content: “Before sharing externally, run this through our 3‑point fact check.”
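For the builders in the room, here is one rough way the three examples above might look in code. This is a minimal sketch, not a recommended architecture: the tool names, action names, and both functions are hypothetical, invented for illustration. Only the guidance sentences come from the examples themselves.

```python
from typing import Optional

# Hypothetical registry: (tool, action) -> one sentence shown at that moment.
# The keys are made up for this sketch; the messages are the examples above.
INLINE_GUIDANCE = {
    ("expense_tool", "enter_meal"):
        "Meals must be under $50/day unless pre-approved.",
    ("crm", "export_contacts"):
        "You are exporting customer contact info. Only share with approved vendors.",
    ("chat_tool", "content_generated"):
        "Before sharing externally, run this through our 3-point fact check.",
}


def guidance_for(tool: str, action: str) -> Optional[str]:
    """Return the one-line guardrail for this moment, or None if none is defined."""
    return INLINE_GUIDANCE.get((tool, action))


def render_with_guidance(tool: str, action: str, ui_label: str) -> str:
    """Place the guidance (if any) directly under the UI element's label."""
    hint = guidance_for(tool, action)
    if hint is None:
        return ui_label
    return f"{ui_label}\n  Note: {hint}"


if __name__ == "__main__":
    print(render_with_guidance("expense_tool", "enter_meal", "Meal amount"))
```

The point of the lookup is that the rule lives next to the action, keyed by what the person is doing right now, rather than in a document they have to go find.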

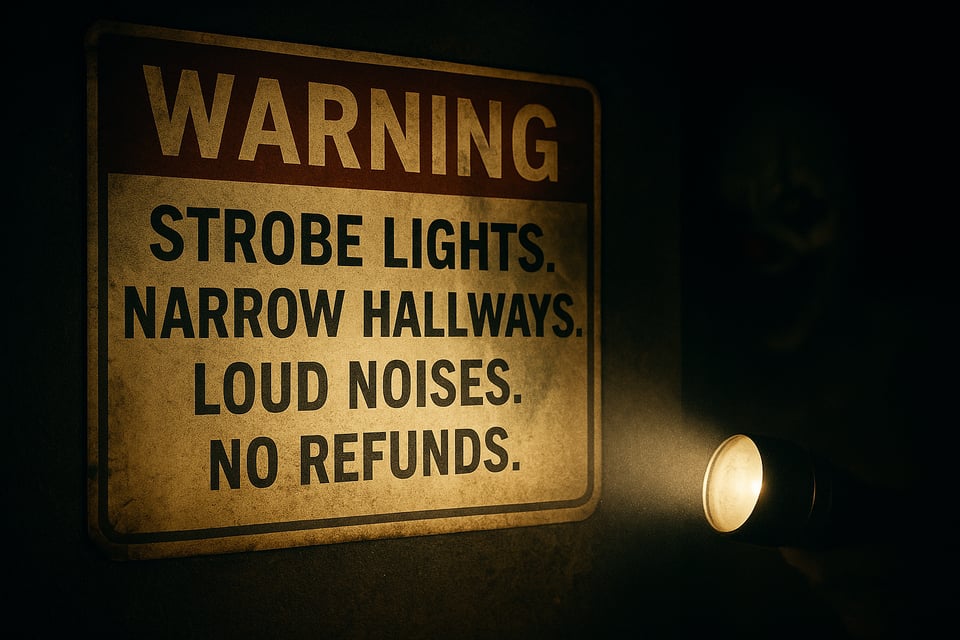

🤡 Analogy of the Week: Funhouse Sign

You're at a carnival funhouse.

Outside the door: “Warning: Strobe lights. Narrow hallways. Loud noises. No refunds.”

You nod, step inside, and...instantly you are plunged into chaos and evil clowns!

That is how most AI governance works.

Technically, you were warned. But once inside, the rules vanish. No clarity when you need it. Only guesswork, “vibes,” and the hope that no scary clowns jump out.

Modern governance is not about static "here's yer sign" placards to cover legal risk. It is about signals that travel with the experience and guide behavior in the moment.

♬ Closing Notes

Why this matters for leaders (and why it is my focus)

Over 25 years of building learning and governance systems, I have seen things go very wrong, and then guided them toward what is both right and sustainable. Today, I help organizations redesign the “UX of compliance” so people can actually follow the rules in the flow of work. This is increasingly important as AI changes the game.

It is the same skill set I bring to transformation roles: translating complexity into actionable, clown-free clarity. Whether you are a client or a collaborator, that is the work.

If you're interested in a Governance UX Audit for your organization, hit reply and let's talk.

Until next time,

Sam Rogers

Guardrail Guide

Snap Synapse – tools and thinking partners to fuel your AI transformation