Immature AI Maturity

Exploring the hurdles of measuring AI maturity and the pitfalls of misguided metrics.

🔭 Signal

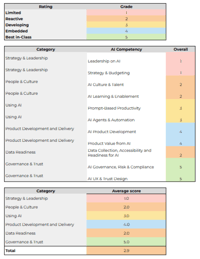

Everyone’s suddenly measuring AI maturity.

“I know! Let’s make a spreadsheet dashboard with crisp, color-coded numbers and the comforting illusion of control.”

Well, nobody says that last part out loud, so don’t look too closely. Today’s maturity metrics are usually built on self-assessments, inflated usage data (hello, always-on AI notetakers), and goals invented mid–kickoff meeting. It’s like grading your own math test, then publishing the average as strategy.

Scores are simple.

Understanding rarely is.

Behavior? Oh, “it’s complicated.”

AI adds another layer of unpredictability to all three, turning every neat framework into an act of exploratory discovery.

But far too many of us still act as though collecting data is collecting insight.

When number go up but performance go down, it might be passable on the 2025 annual review. But it’s also how you wind up #opentowork by this time next year.

🧠 Strategic (Human) Prompt

You’re tracking “AI adoption,” right?

But what are you actually measuring: competence or compliance?

If the number rose this quarter, congratulations! But do you know why?

➖ Strategic Subtraction

Easy test to run this week:

1. Find a recent AI metric: adoption rate, accuracy score, usage percentage, etc.
2. Ask three people how it’s calculated and what it means.

Did you get three different answers? If so, that metric isn’t measuring understanding at all. It’s measuring misunderstanding.

Subtraction opportunity: pause the dashboards until definitions are aligned. Rebuild from shared comprehension outward.

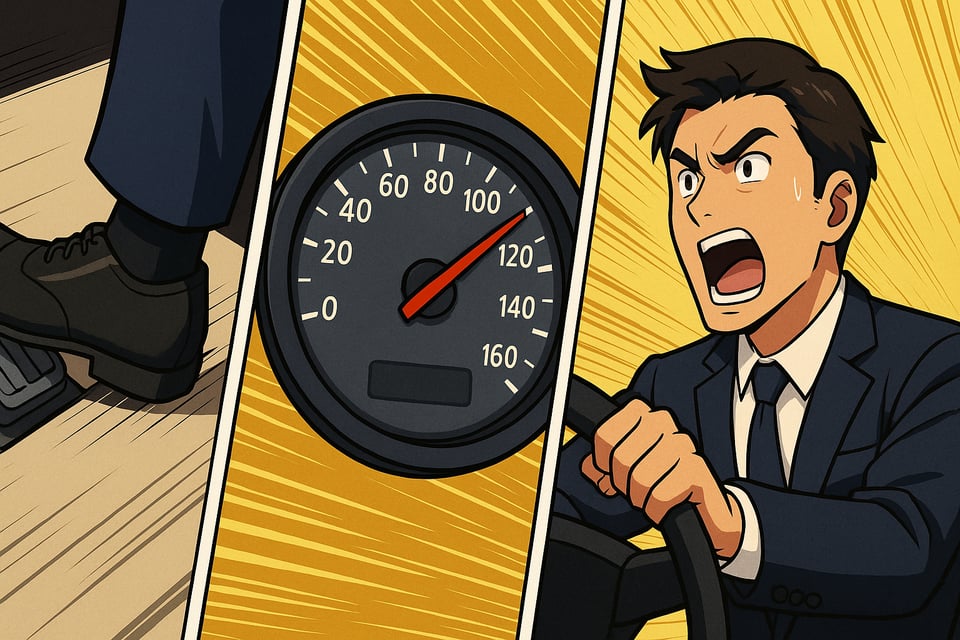

🏁 Analogy of the Week: Pedal to the Metal

Imagine teaching someone to drive using only a speedometer.

You could reward them for hitting top speed, but they might be going the wrong way down the freeway.

That’s what happens when we allow our organizations to chase performance metrics without situational awareness. We’re flooring it toward false confidence.

🎵 Closing Notes

AI exposes how fragile our measurement culture really is.

We’re trying to navigate new terrain with the same rulers that failed us before. Only now they’re wrapped in dashing dashboards with great gradients and the polish that conveys plausibility.

True maturity isn’t a higher score. It’s a smaller gap between what we believe we understand and what we can actually explain.

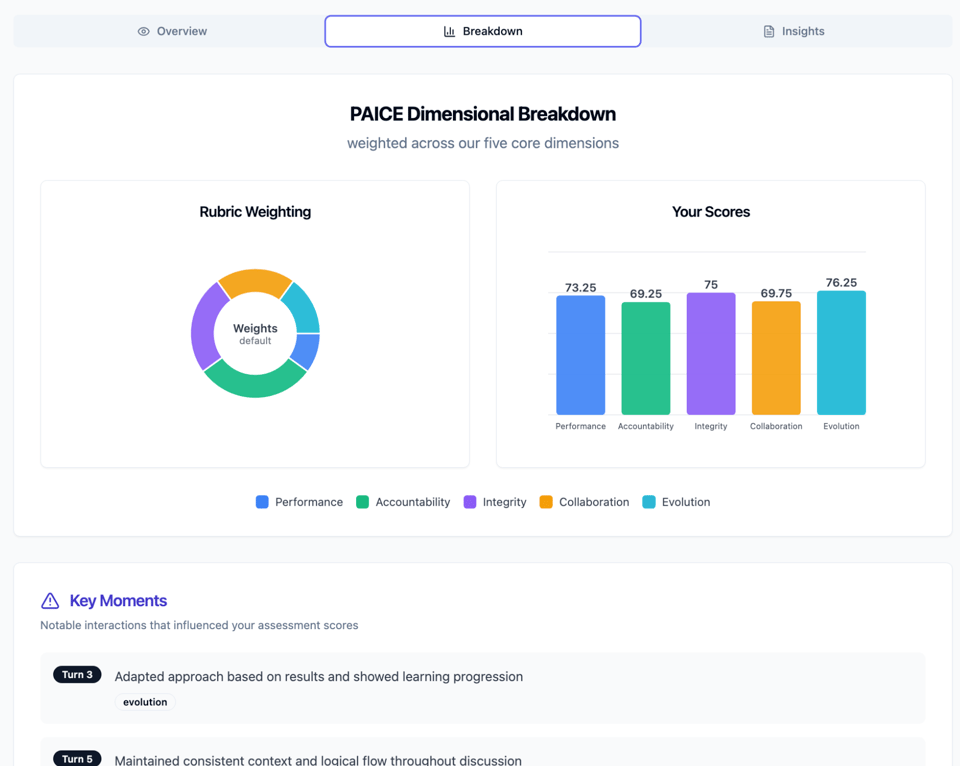

If you’re ready to move from scoring activity or belief to objectively assessing actual AI interaction behaviors, test your team’s AI collaboration capability with PAICE.work.

It’s the first assessment that measures what matters: how humans and AI work together. It’s in Research Preview mode, and it’s totally free and private today. Go ahead and test for yourself whether our explanations need any explanation at all.

Until next time,

Sam Rogers

Maturity Skeptic

Snap Synapse – from AI promise to AI practice