Riffle Updates: Lightning Talk / Thoughtful Sync / Web Perf

In March we published an essay describing how we were building a music app where all the state (even the UI state!) was stored in SQLite, wrapped by a reactive state management layer. Here are some quick updates on the Riffle project since then!

Lightning talk

Nicholas gave a 10-minute talk about Riffle at the Have You Tried Rubbing a Database On It online conference organized by Jamie Brandon. It shows a few demo videos and code snippets that describe the essence of what we're trying to do with Riffle.

There were a bunch of other great talks at the conference so it's worth skimming the full program. Two that were particularly relevant to Riffle were:

- Your frontend needs a database by Nikita Prokopov

- DatalogUI: rubbing Datalog on UIs by Marco Munizaga

Thoughtful data sync

Our essay about Riffle shared some ideas for how to program UIs when the data is available locally. However, we intend Riffle to be a framework for local-first software, not local-only software. Even if data is primarily stored locally on client devices, there needs to be a way to synchronize state across devices as well. Sync is hard to get right; when you have multiple devices concurrently editing data, you need to ensure that things eventually converge to a reasonable state on all devices.

We recently published a paper at the PaPoC 2022 distributed systems workshop describing some of our ideas on this topic. In particular, we're interested in rich data constraints, like uniqueness and foreign key constraints, which are useful and familiar to app devs in centralized systems, but hard to preserve in most CRDT-based systems. They are also exactly the constraints that we'd need to build a CRDT-like relational database.

We propose an alternate sync model where you can preserve stronger invariants, in exchange for sometimes having conflicts that can't be automatically resolved. In those cases, we suggest creating forking histories, where users can see the multiple possible merge states, and manually manage them, sort of like a git branching model. The key difference from git is that we have a structured, formal way to detect and merge conflicts, rather than just diffing plaintext data. Our technique is based on using conflict sets from multi-version concurrency control to model dependencies between events.
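As a rough illustration of the merge-or-fork idea (not the algorithm from the paper), here is a minimal Python sketch. It assumes each event carries a read set and a write set of keys, in the spirit of conflict detection in multi-version concurrency control. The `Event` class, the key strings, and the `merge_or_fork` function are hypothetical names made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A hypothetical transaction-like event with MVCC-style read/write sets."""
    name: str
    reads: frozenset = frozenset()
    writes: frozenset = frozenset()


def conflicts(a: Event, b: Event) -> bool:
    """Two concurrent events conflict if either wrote a key the other read or wrote."""
    return bool(a.writes & (b.reads | b.writes)) or bool(b.writes & a.reads)


def merge_or_fork(local: list, remote: list):
    """Merge two concurrent histories if no pair of events conflicts; otherwise fork.

    Returns ("merged", combined_events) or ("forked", conflicting_name_pairs).
    """
    clashing = [(a.name, b.name) for a in local for b in remote if conflicts(a, b)]
    if not clashing:
        return ("merged", local + remote)
    return ("forked", clashing)


# Two devices each create a playlist called "Favorites" while offline.
# Both check and then claim the same uniqueness key, so they conflict.
key = "playlist.name=Favorites"
create_on_phone = Event("create_on_phone", reads=frozenset({key}), writes=frozenset({key}))
create_on_laptop = Event("create_on_laptop", reads=frozenset({key}), writes=frozenset({key}))

# An unrelated edit touches a different key and can merge automatically.
add_track = Event("add_track", writes=frozenset({"track=42"}))
```

In this toy model, `merge_or_fork([create_on_phone], [add_track])` merges, while `merge_or_fork([create_on_phone], [create_on_laptop])` forks and reports the conflicting pair so a user could pick a resolution.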

The paper is linked below. Sections 1-3 are hopefully quite readable and might be of interest to anyone thinking about how to model sync in local-first systems.

Merge what you can, fork what you can't: managing data integrity in local-first software

We got some good feedback on the workshop paper. It seems that some of our ideas are closely related to the AntidoteDB system and the Transactional Causal Consistency model, so we're investigating those.

Web performance / synchronous loading

We've been continuing to work with Johannes to develop his music app built on Riffle. That's been great for driving improvements in our library, including API design and correctness. But our main focus over the past couple months has been performance.

A lot of Electron apps these days are super slow to respond to user interaction, even if they have lots of data cached locally. For example, switching between playlists in Spotify often takes hundreds or even thousands of milliseconds to finish rendering the new playlist.

One of our goals with Riffle is to help keep UIs nice and snappy by asynchronously replicating data locally and keeping the interaction loop tight. This is a big part of what apps like Superhuman and Linear do to stay fast.

Here's a recent demo video of our music app. As you can see, switching playlists is a lot faster than the Spotify app.

w/ our music app we're targeting < 50ms from clicking a playlist to showing its contents. if the data is local, it should feel immediate.

— Geoffrey Litt (@geoffreylitt) May 4, 2022

(of course would like to do even better. seems tough with web/DOM though.) pic.twitter.com/f6VB2b8ysk

Of course, our app is far simpler than Spotify, so it's not an apples-to-apples comparison, but the general point still stands: if you go to the network on every interaction, you can't have a super fast app.

So, how fast should we be aiming for exactly? This is a tricky question when targeting the DOM. Kevin Lynagh's Finda app combines Rust and React to respond within 16ms to user interaction—it's very nicely architected, but also seems to benefit from having a simple UI. Superhuman targets < 50ms as their time budget, with a more complex UI. For now, we're aiming for 50ms as a target for our music app, since our DOM is complex enough that React VDOM diffing and CSS recalculation alone take more than one frame—but we'd like to get down to 16ms eventually.
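To put the two budgets in terms of frames, here is a small Python calculation. It assumes a 60 Hz display, which is where the 16ms figure comes from (1000 / 60 is about 16.7 ms); the function name is hypothetical.

```python
import math

REFRESH_HZ = 60  # assumption: a typical 60 Hz display


def frames_spanned(latency_ms: float, refresh_hz: int = REFRESH_HZ) -> int:
    """Number of display frames that pass before a response of this latency can be shown."""
    return math.ceil(latency_ms * refresh_hz / 1000)
```

Under that assumption, a 16ms response fits inside a single frame, while a 50ms response spans three frames.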

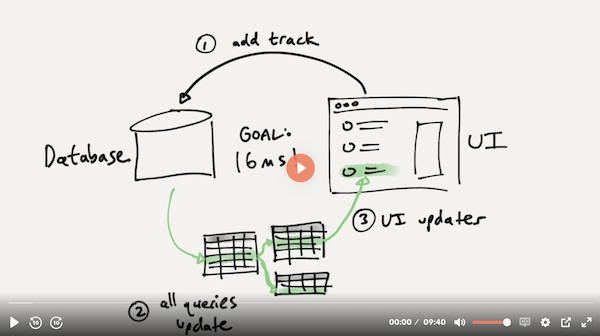

We've made an architectural change in Riffle to reach this performance goal. In our previous iteration of Riffle that we described in our essay, we were running SQLite outside the UI thread—either in a Web Worker for the browser, or a native process for a desktop app. This meant that on every interaction, we'd need to communicate dozens of times (once per query) between the UI thread and the SQLite process, serializing/copying query results back and forth, which turned out to be a major bottleneck in our architecture.

We've now rewritten the library to use an entirely different architecture. We run SQLite queries synchronously, directly in the UI thread, against an in-memory database (as before, running SQLite compiled to WASM using SQL.js). We also keep a persistent SQLite database running in another thread, and we asynchronously replicate all writes to that persistent database. On boot, we populate the in-memory database from the persistent file on disk.
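The shape of this architecture can be sketched with Python's standard `sqlite3` module. This is only an illustration under stated assumptions, not Riffle's code: Riffle runs SQL.js in the browser UI thread, whereas here the main Python thread stands in for the UI thread and a background thread owns the persistent file. The `ReplicatedDB` class and its method names are hypothetical.

```python
import queue
import sqlite3
import threading


class ReplicatedDB:
    """In-memory SQLite for synchronous access, with every write replayed
    asynchronously against a persistent file by a background thread."""

    def __init__(self, path: str):
        self.path = path
        self.mem = sqlite3.connect(":memory:")
        # On boot, populate the in-memory database from the persistent file.
        disk = sqlite3.connect(path)
        disk.backup(self.mem)
        disk.close()
        self._writes = queue.Queue()
        self._worker = threading.Thread(target=self._replicate, daemon=True)
        self._worker.start()

    def _replicate(self):
        # The persistent connection lives entirely on the background thread.
        disk = sqlite3.connect(self.path)
        while True:
            item = self._writes.get()
            if item is not None:
                disk.execute(*item)
                disk.commit()
            self._writes.task_done()
            if item is None:  # sentinel queued by close()
                break
        disk.close()

    def query(self, sql, params=()):
        """Synchronous read against the in-memory database."""
        return self.mem.execute(sql, params).fetchall()

    def write(self, sql, params=()):
        """Apply a write in memory right away, then queue it for the disk copy."""
        self.mem.execute(sql, params)
        self.mem.commit()
        self._writes.put((sql, params))

    def flush(self):
        """Block until every queued write has reached the persistent file."""
        self._writes.join()

    def close(self):
        """Drain the queue, stop the background thread, and close the in-memory DB."""
        self._writes.put(None)
        self._worker.join()
        self.mem.close()
```

A caller would construct `ReplicatedDB("app.db")`, call `write(...)` and `query(...)` without waiting on disk, and call `close()` on shutdown. The sketch replays the same SQL statement on both databases, which is only one possible way to replicate writes and ignores the replication edge cases discussed later in the post.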

So far this has worked quite well; it makes the framework faster and simpler to reason about. Being able to synchronously fetch data means that we don't need to worry about loading/empty states for the initial render of a component; we can just load the data as we go. Previously we had to throttle writes occasionally, e.g. when responding to scroll events to power a virtualized list. Now we can send writes to the DB without throttling and it responds fine. Overall, this fits well into the Riffle philosophy of pushing more data-related operations into the database.

The obvious concern is: what happens if we block the UI thread with a slow query? So far this hasn't been a problem in practice. For Riffle to work well, all queries really should be completing in under a frame anyway, regardless of where they're running. Put another way: in a normal frontend, there's a bunch of data that gets manipulated by custom JavaScript; in Riffle, we just do that in SQLite, which should be at least as fast.
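One simple way to keep an eye on this is to time each synchronous query against the frame budget. The helper below is a hypothetical Python sketch using the standard `sqlite3` and `time` modules, not part of Riffle; the 16ms default is the one-frame target mentioned above.

```python
import sqlite3
import time

FRAME_BUDGET_MS = 16.0  # one-frame target from the post


def timed_query(conn, sql, params=(), budget_ms=FRAME_BUDGET_MS):
    """Run a query synchronously and report whether it fit within the budget.

    Returns (rows, elapsed_ms, within_budget).
    """
    start = time.perf_counter()
    rows = conn.execute(sql, params).fetchall()
    elapsed_ms = (time.perf_counter() - start) * 1000
    return rows, elapsed_ms, elapsed_ms <= budget_ms


# Example: a small in-memory table standing in for app state.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tracks (id INTEGER PRIMARY KEY, title TEXT)")
conn.executemany("INSERT INTO tracks (title) VALUES (?)", [("Song A",), ("Song B",)])
rows, elapsed_ms, within_budget = timed_query(conn, "SELECT title FROM tracks ORDER BY id")
```

Queries that repeatedly exceed the budget would be the natural candidates for optimization or for running off the UI thread.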

However, we'll probably eventually need to come up with some patterns for moving slow queries off the UI thread and making them asynchronous. Another concern is memory usage, since this means all data for the app needs to live in memory. We also need to think more about edge cases that might occur in async replication, which is probably related to the general problem of multi-device synchronization.

Let us know if you have any thoughts or feedback; we'd love to discuss further!

Until next time,

Nicholas and Geoffrey