Edition 10: So much went wrong with the Epstein File release

"Jan addresses the chaotic release of the Epstein Files and the ensuing complications from a data perspective."

Hi!

This week it is Jan again, talking a little bit about how I perceived the release of the so-called Epstein Files and the problems that arise from it.

Before we jump in, one thing needs to be clear: this post focuses on the logistics of the release. From a data hoarder’s perspective, they are chaotic and painful. But that technical frustration is secondary to the much bigger issue: that there still appears to be no meaningful accountability for what was done to girls and young women. Watching this unfold in public is horrifying. Nothing about broken download links outweighs that.

That said, let's talk about what makes the release weird.

The Disaster that was the Epstein Files release

On January 30, 2026, the Department of Justice published what now seems to be the last ever batch of the so-called Epstein Files. It was an enormous amount of data, in the press conference the DOJ said they had released 3.5 million pages, alongside hours of video material (after allegedly sifting through a total of more than 6 million pages). The basis of this release was the Epstein Files Transparency Act, which was passed in November and forced a very tight timeline on the DOJ for the release.

I have no idea whether these figures are correct, nor do I know how they came up with the numbers (who counts pages?), but none of that mattered: The files were released, the DOJ had published beautiful direct download links to full archives of release 9, 10 and 11 (Dataset 12 was released at around 1am EST on Saturday morning, poor person who had to work on this), totaling more than 250 gigabyte of data. Together with providing links for batch downloads, it was also possible to search the document content on the website directly, and browse individual files.

And this is were the problems began.

Structurally unpublic data

Problem number 1: The links did not work. Specifically the link to the largest dataset, number 9, which weighed 180 gigabyte, seemed to be hosted on a server that would cut the connection after a while: Sometimes after 10 gigabytes, sometimes after 50, sometimes right from the start. I can't say whether this was deliberately designed like this, or the servers they used was simply overwhelmed, but what was clear was that the data was not accessible the way it was publicly announced before. The same problems, although to a lesser degree, occurred for datasets 10 and 11, which I eventually managed to get full copies of after numerous retries.

Throughout the weekend, people on reddit compared notes on what they were able to grab, compiled lists of files and archive content, and tried to puzzle together the datasets 9, 10 and 11 from fragments. While all of this was happening, links to individual documents kept disappearing from the official government website, fueling social media speculations about a cover up.

By Monday, both 404 Media and the New York Times had reported that the DOJ had released files containing unredacted nude images and investigative notes referencing “possible CSAM”, which is short for child sexual abuse material. Shortly afterward, the archive download links vanished entirely.

The infinite loop

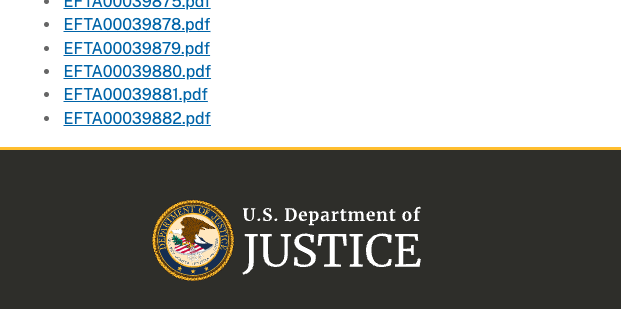

Problem number 2: I don't recall if it was Sunday or Monday when I realized that the pagination for the individual files was acting weird. Since I still wasn't able to acquire a complete copy of the dataset 9 archive, I had resorted to scraping individual files from the website, one page after another. But my scraper would hit the same files repeatedly, over and over again. Sometimes the files repeated after 10 pages, sometimes after 100. The files listed on these pages were the same as on page one.

It looked liked someone deliberately programmed the website in a way that it would, apparently at random intervals, wrap around and start from the beginning, while never returning the full set of documents in one go. After further investigation I also realized that the site would generate a (random?) file list for any page number I attached to the url as a parameter. It's a common way of transferring information between the user and the server: You have a base url, in this case "https://www.justice.gov/epstein/doj-disclosures/data-set-9-files" and you append parameters like "?page=1" or "?page=999" to navigate to (and then scrape) a specific website.

Normally, if you request ?page=999 on a site with only 20 pages, the server returns an error. Here, it kept generating output. That suggests the page parameter was not properly bounded. Technically, it looked like the backend may have been handling the value as an unsigned 32-bit integer without enforcing upper limits. In practical terms, that meant there was no reliable way to determine the actual end of the dataset via pagination.

My colleague Simon ended up writing a scraper that started on a randomly seeded page number and iterated over the pages until the same files came up again, only to then jump to a new random starting page, and so on.

At the time of writing this, the pagination is gone completely: Dataset 9, which was once announced as a 180 Gigabyte behemoth of an evidence collection, right now on the official website consists of 50 PDF files.

Redactions that did not redact

Problem number 3: This is not something I discovered, but it's symbolic for how these files have been prepared before the release. We have already learned a few days after the initial publication that the DOJ did not redact all victim information and photos. But what's even worse is that some of the parts that seemed properly redacted were, in fact, not.

The more obvious ones were the cases where the text was essentially just overlaid with a black box - users could still highlight and copy paste it. Imagine black text on black background in, let's say, Microsoft Word: If you select it with your mouse and copy it somewhere else, you will be able to read it. Yes, it was really so simple.

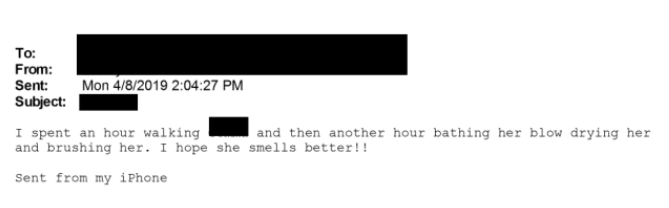

In all fairness, from what I have seen this has only happened in the first few releases and not in the latest batch. However, something way more interesting occurred when the DOJ tried to redact information from (printed) emails. To understand it, you need a bit of context about how email actually works. If you already know your way around MIME headers and content-transfer encodings, feel free to skip ahead. If not, here’s the simplified version.

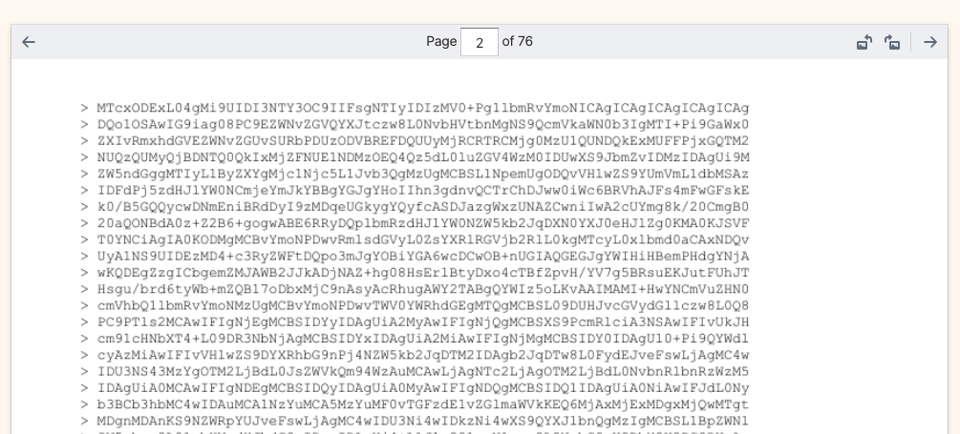

An email is not just the text you see between a subject line and a signature. Under the hood, it consists of structured headers, a body, and often one or more attachments. Because email was originally designed to transport plain text, binary data like PDFs or images can’t just be sent directly. Instead, attachments are encoded into a text-safe representation (usually Base64). Sometimes the body itself uses something called quoted-printable encoding, which is where those trailing equals signs come from that people noticed in the release.

When you open an email in a client, the client automatically decodes these encoded blocks back into their original binary form so you see a normal PDF or image, or whatever was attached. But what happens when you press “print”? That depends entirely on the client and configuration. In some implementations, instead of printing the decoded attachment, the client prints the raw encoded attachment data: the Base64 or quoted-printable text that represents the file before decoding. And that appears to be what happened in parts of the DOJ release.

In practical terms, that means that what looks like dozens of pages of gibberish in the released PDFs are actually the raw attachments. Printed in encoded form, scanned, and then published as, in some cases, dozens of pages of base64 encoded file content.

The security researcher Mahmud Al-Qudsi wrote a fantastic blog post about it and managed to decode at least one attachment this way that was not published separately (spoiler: It is boring). Another huge shout-out at this point goes to my colleague Alex, who went down into email parsing hell over the past few weeks (unrelated to the Epstein Files) and is the most knowledgable person I can imagine when it comes to the quirks of electronic letter sending. If you're interested in this kind of stuff, watch out for the blog post coming soon at dataresearchcenter.org/blog, sorry for the shameless plug.

Conclusion

As Werner Herzog famously said about a misguided penguin: But why?

There's so much that just looks weird from the outside that it is hard to believe that all of these things are coincidences. And we did not even talk about some aspects of weirdness, like when one researcher at the PDF Association identified technical indicators suggesting that some files were digitally generated and then altered to resemble scanned paper documents by adding artificial noise layers. Whether that was done for workflow reasons or presentation is unclear. But it adds to the general sense that the dataset was heavily processed before release, while overlooking crucial problems.

Obviously, I have no insight into decision making at the DOJ. I saw some commentators online speculating that this was all part of the general "flood the zone with shit" strategy. This argument I find hard to follow, given that the Epstein files are a very sensitive topic with the Maga base and given all of the above, this is not how one stops speculations and conspiracy theories.

Personally I find it very hard to wrap my head around it. But what's clear is that this is a horrible way of making data public.