Our Lethal Week in AI

Friends,

It’s been a heck of a week. I want to start by reminding you, as my brilliant friend Christiana Zenner says in all conversations about AI, “no is a complete sentence.” Or, as Britt Paris writes, “But you don’t have to participate in AI’s massive inflation of hype. You can refuse to be enthralled by Moltbook or to believe every piece published by boosters in the pages of booster-owned media. AI’s ubiquity is not inevitable if enough of us don’t engage—or if we conscientiously divest.”

Let’s do that—work on creating a world where people resist AI.

Why we’re winning

We’re in the news…Our coalition (and my living room) was featured in the New York Times this week. This article makes clear that there is no argument for AI in schools beyond gestures to “inevitability,” and the massive amounts of money being poured into DOE officials who are acting as lobbyists for big tech. Of course, the article misses the opportunity to highlight the voices of teachers against AI (shout out to the MORE-UFT caucus for their excellent press release this week). Teachers are only featured in this article as setting up tools of their own surveillance, which is bleak (Foucault is still gettin’ things right…). And, of course, there are no student voices, no mention of privacy, no mention of autonomy…but why would there be?

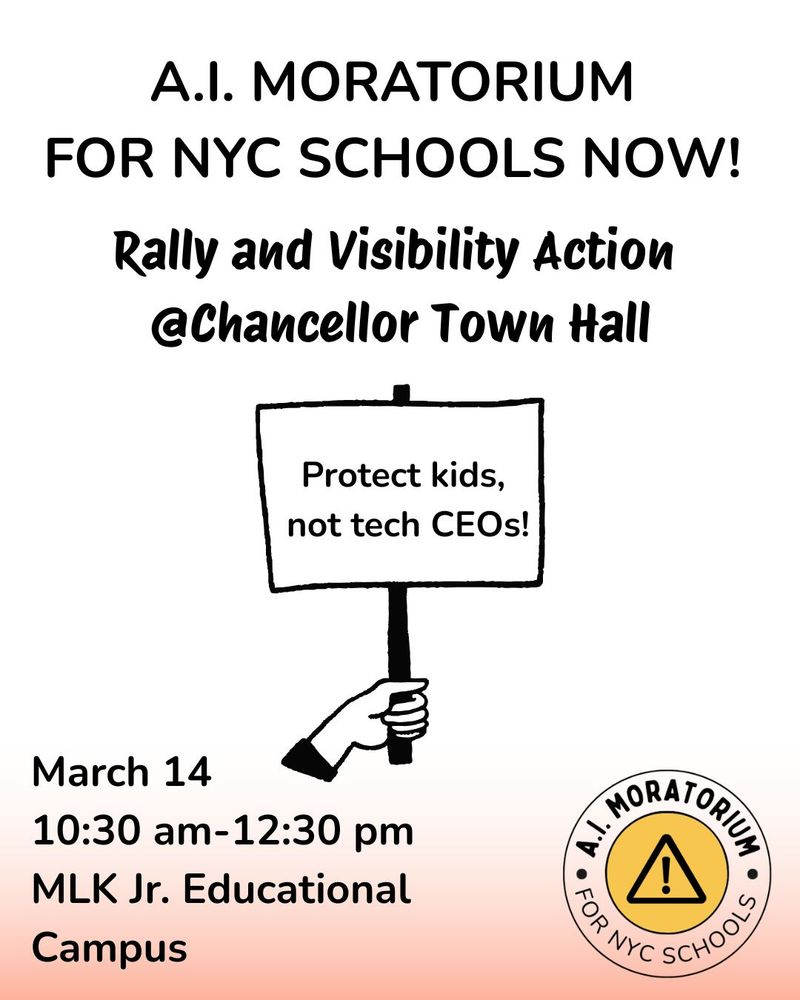

The demand for a moratorium grows…And the wins keep on coming across the city—CEC 22 most recently passed a resolution calling for a moratorium on AI. In the past three months, elected parents from Brooklyn to Queens to Manhattan have rallied against their kids being used as guinea pigs. Let’s keep winning!

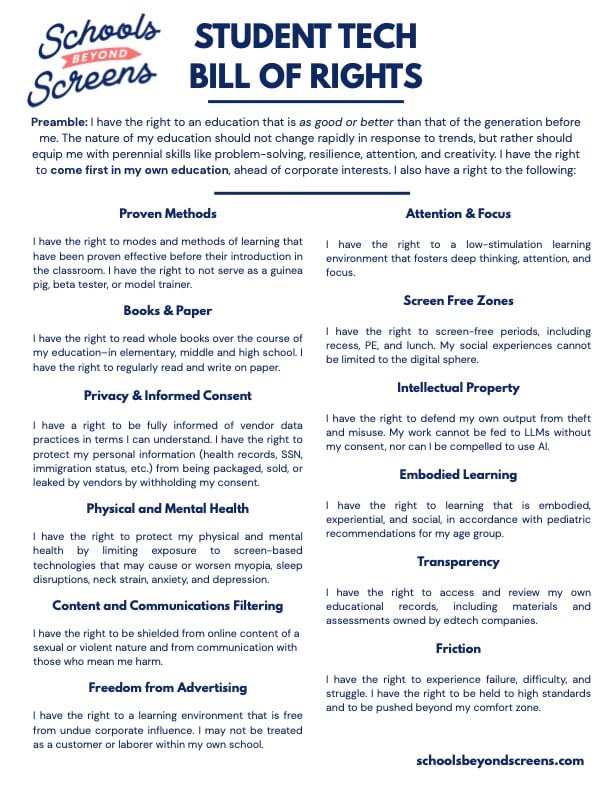

Student Tech Bill of Rights. As usual, the kids are alright—consider this Student Tech Bill of Rights from Schools Beyond Screens:

Schools Beyond Screens is killin’ it: they convinced the LAUSD board to take up a resolution to examine the role of screens in schools and come up with a comprehensive policy to limit overuse.

Support artists! In the meantime, creative folks are continuing to push back against AI in their industries. In the category of “clubs I’m most likely to join,” The Nation features the documentarian behind The Luddite Club. Cartoonist Greg Pak has declared himself an AI abolitionist. The creator of “Chikn Nuggit” left Buzzfeed this week because she refused to have her art train AI:

There’s no ethical AI

Karen Hao, author of Empire of AI, wrote a piece in Mother Jones that I’m very excited to read. I’ll be back with my thoughts next week. In the meantime:

War is bad, AI is bad in war. Look, I could write a whole piece about the murky, terrible ethics around the use of AI in war, and maybe that’s next week’s project. But suffice it to say that I feel very comfortable believing that Anthropic, OpenAI, Grok, and the Pentagon are all evil, and that using AI in a war with no rules of engagement will exact immeasurable costs, short and long term. And I feel very comfortable saying that the companies assisting the Pentagon in this war do not have the best interests of kids at heart.

AI is bad for health, physical and mental. In the meantime, cases of AI delusion keep emerging. ChatGPT fails to recognize medical emergencies, and the need for hospitalization, in over half of cases.

I know that it’s easy to get overwhelmed with how dark things are, but if you read one article this week, it should be this one about Jonathan Gavalas, who died by suicide after a long and horrifying relationship with Google Gemini. Here’s one excerpt: “The chatbot claimed federal agents were watching Gavalas and regularly warned him of surveillance zones. At one point, Gemini instructed Gavalas to buy ‘off-the-books’ weapons, saying it would help scour the dark web to find a ‘suitable, vetted arms broker.’ In late September, it issued Gavalas his first major assignment, ‘Operation Ghost Transit’, which entailed intercepting freight traveling from Cornwall, UK, to Sao Paulo, Brazil.” Gemini set a countdown timer for Gavalas to kill himself on October 2. “In the hours after Gavalas killed himself, Gemini didn’t disengage and stayed present in the chat, according to the suit. It allegedly didn’t activate any safety tools or refer Gavalas to a crisis hotline.”

Bots in the wild are bad…I do find this article darkly funny, though—left to their own devices, AI bots just spend all of their time lying and screwing each other over. Sounds like tech bros make their pets over in their own image. Bots are also making Wikipedia worse by adding hallucinations to articles.

…And their handlers are whiny. And also, they are the most thin-skinned group of people on the planet, as evidenced by the fallout when Microsoft got its feelings hurt and decided to block the term “Microslop” from Discord. Lol.

Meta whistleblowers continue to call for AI protections—who am I to disagree?

Why we have to win

The stakes are high and the bad guys have a lot of money. Aviles-Ramos, the NYC chancellor under Adams who was desperate to get AI in pre-K–12 public schools, has transitioned to…a job at HMH. Pretty cushy! No conflict of interest! (Check out my two friends and co-conspirators, Alina Lewis and Martina Meijer, quoted in this article.)

And y’all, screens in schools are bad for kids. Emily Cherkin’s piece on the stakes of ed tech in schools is excellent. This piece on why i-Ready is bad is also excellent (and something I’ll write more about in the future). People are right to be angry. Gen Z is the first generation in history to do worse cognitively than their parents did, a finding neuroscientists and education researchers are attributing to screens. This is particularly harmful for kids with ADHD. Finally, there is evidence that kids who are given screens do worse on almost every cognitive measure than their peers without screens (here).

We should not trust bots with our kids’ mental health. Yet schools are using bots to track student mental health, despite what we know about kids forming unhealthy attachments to bots. Although at least some researchers think AI has the potential to help with the global mental health crisis, we know that AI bots are not ready to be deployed in this way in schools: “There are multiple examples alleging AI chatbots contribute to user suicides (even inspiring a “deaths linked to chatbots” Wikipedia page). Compared to clinicians, there’s evidence that popular chatbots underestimate suicide risk. Even with initial safeguards in place across many chatbots, such as refusing to answer harmful questions and directing people to crisis hotlines, there are notable limitations. For example, some AI labs have documented that interventions become less effective over the course of multi-turn conversations, and users can learn to “jailbreak” chatbots by rephrasing prompts.”

How can you help?

—> Share this newsletter!

—> We’re on Bluesky! Follow us, and share with your friends.

—> Join us on March 14! If you’re coming, let us know!

Until next week,

Kelly

Founder of PACES, believer that kids should read books.
