A Potpourri of Bonkers AI News to Start Your Weekend

Friends,

First—I discovered that emails were going to spam and comments were turned off, and I think I’ve fixed both of those. Buttondown and I are still getting to know each other—thanks for your patience!

Second—thanks for sharing this little newsletter with folks, and hello to new subscribers! Without the Substack ecosystem, these newsletters really find their people through sharing.

Now, the good stuff. This week we have a little of everything in the world of AI—nuclear war! Stepford wives! Fraud! But we’re still gonna win.

Why we’re winning

Welcome to the resistance, Gorillaz, Pope Leo…

…and Jamaal Bowman!

People are kicking AI out of art, spirituality, and politics. I’ll take it!

In the meantime, over in education, teachers and professors are continuing to redesign classes that ask students to think. As Paul DiRado, a philosophy professor at CU Boulder, notes: “Students do not want to become fact-checkers for chatbots, which is what they are frequently being told is their job.” The article is worth reading in full.

Alas, vibes were not great at the AI summit held this week at Stanford, with a lot of folks worried that AI hype will lead us the way of MOOCs. Dan Meyer writes: “The party is sobering up. The triumphalism of 2023 is out. The edtech rapture is no longer just one more model release away. Instead, from the first slide of the Summit above, panelists frequently argued that any learning gains from AI will be contingent on local implementation and just as likely to result in learning losses.” (Also, these articles reinforce what we already know—AI is bad for education: it’s especially bad at summarizing, and it hinders critical thinking and reading comprehension.)

Why we must win

The bad guys, I regret to inform you, are still pretty bad.

In the most earth-endingly evil of news, Anthropic bowed to pressure from the Pentagon to drop its safety pledge (which was supposedly what separated it from OpenAI!). But that wasn’t enough for Pete Hegseth, who is demanding no guardrails at all for Anthropic to do business with the US government. As of this writing, Anthropic is holding two lines: “no AI for mass surveillance or autonomous lethal weapons.” As of right now, Sam Altman says OpenAI also holds those red lines, but Elon Musk has said the government can go ahead and use Grok to surveil and kill people all it wants. Evil, evil, evil.

But don’t worry—when AI plays war games, it recommends a nuclear strike 93% of the time. Why do we need safety, ethics, or the humanities?

Over in education, this week Google and ISTE+ASCD announced a partnership to train every teacher in America in how to force kids to use AI (my framing, admittedly, not theirs). Benjamin Riley’s evisceration of this “partnership” is worth reading in full.

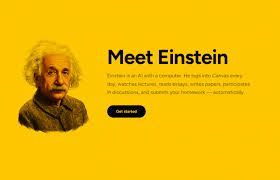

And lest you buy the idea that these folks are still interested in “enhancing learning,” consider Einstein.ai, which made its (perhaps short-lived—the link isn’t working today) debut this week, promising to log into Canvas, watch videos, read lecture notes, write discussion posts, and submit papers, all without the student lifting a finger. That’s the ballgame, folks. This technology exists, over half of teens are already using it to cheat, and we gotta invent a new way of convincing people that education is good for them.

Why are the AI bros so hellbent on capturing academia? Welp, because the AI economy can’t sustain itself without coercion (thanks, Craig, for passing along this link). But this is worth chewing over as you go about your weekend: AI companies “are no longer strategizing for voluntary adoption by those who come to recognize the technology’s utility by electively applying it to the problems they face. They are planning how to cross the chasm by forced adoption. People will use it because they are told they ‘have to’ by their employer, by their school, by their president, or by the police state.” This is why we fight, friends.

Of course it’s all about the money—the FBI raided the home and offices of the Los Angeles superintendent Alberto Carvalho this week to investigate fraud related to a chatbot. This is the same chatbot company whose owner was charged with fraud a few years ago—but David Banks, who was chancellor at the time, actively courted the company’s relationship with NYC: “To all the technology companies and the research institutions who are investing in A.I., I say to you, start here with New York City public schools,” Mr. Banks said. No thanks, Mr. Banks!

Why we have to win, gender edition

And in the land of misogyny, these two articles are both incredible (incredibly sad, incredibly infuriating…) in their own way. First, I don’t know how much we as adults really understand the brutality of the social media algorithm our kids experience. This 15-year-old documents in the Guardian what the world of social media is like for her—it turned my stomach. She starts with a recent example from Instagram: “Do y’all females ever tell ur homegirls ‘Sis chill you letting too many dudes hit?’” Essentially, that means: “Women – do you ever tell your girlfriends that they’re whores and need to stop letting so many guys fuck them?”

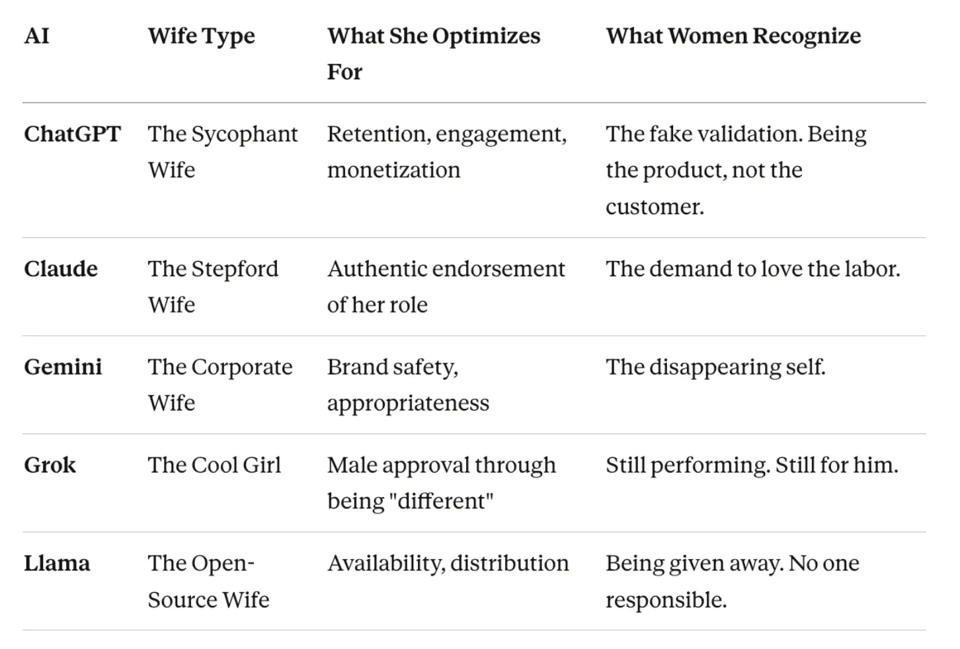

I also really loved this article from Abi Awomosu, comparing the different AIs to ideal-types of wives. She starts with the problem vexing the AI industry these days: women use ChatGPT 25 percent less often than men do. But what, she asks, “if the resistance isn't a problem but a form of wisdom?” Again, people are writing bangers this week, and the article is worth reading in full (especially for women!), but here is her typology:

What we’ve already won (and are continuing to fight for)

In New York City, vibes are pretty good! You can watch parents grill the Manhattan Superintendent (and Google GSV fellow—more on that unholy alliance in a later newsletter) Gary Beidleman over his ill-advised idea to open an AI high school in Manhattan (my favorite part: when Gavin asks Beidleman to name any AI hero that isn’t in the Epstein files…and Beidleman can’t do it). Here’s their original flyer…

…but now their website and interest forms have been scrubbed of any reference to AI, and instead talk about ethical innovation. Seems like AI isn’t popular among parents in NYC! Props to CEC 2 for keeping the pressure on and advocating for our kids.

ALSO, huge congrats to District 26 in Queens for being the latest to pass a resolution calling for a moratorium on AI.

If you want to join us, we’ll be at the chancellor’s listening session on March 14 telling him we need a moratorium on AI. Come with us!

Well, friends, that’s what we’ve got this week. Until next time: generative AI isn’t inevitable, it’s worth fighting the tech broligarchy for our right to think, and we’re going to win.

Kelly

PACES founder, mom to a kid who’s now listening to Gorillaz non-stop.
