Team Newsletter: From Tool Training to Process Thinking, A Spiral Model for AI in Higher Education

This month’s team newsletter highlights work led by researchers Jesús Alfonso López, Carlos Peláez, Andrés Solano, and Paola Andrea Castillo Beltrán at Universidad Autónoma de Occidente (Cali, Colombia).

Through their research on AI integration in higher education, the team has developed a structured, repeatable model that helps educators move beyond tool familiarity toward designing meaningful, AI-mediated learning experiences.

This work reflects a core idea we emphasize across the MLSysBook community: building AI capability is not about tools alone, but about systems, process, and pedagogy.

Every university is training faculty on AI. Almost none of them have a repeatable process for turning that training into lasting curricular change. A research group in Colombia built one.

At our university, AI training for faculty started the way it starts almost everywhere. We organized workshops on ChatGPT, Gemini, and NotebookLM. Professors attended. They learned how to write prompts, explored a few interfaces, and left with new bookmarks. Then the semester resumed and very little changed in how courses were actually designed or taught.

The training was not bad. It was incomplete. It gave educators familiarity with tools but no systematic way to think about when, why, or how to integrate those tools into their teaching practice. The tool became the destination rather than a means to a pedagogical end.

Our research group recognized this gap early, and it pushed us to rethink the entire approach. As part of an ongoing educational research project, we have pivoted from teaching faculty about tools to giving them a repeatable process for AI integration. The question we are trying to answer is not "which AI tool should I use?" but rather "how do I design a learning experience where AI plays a meaningful role?"

The Spiral Model

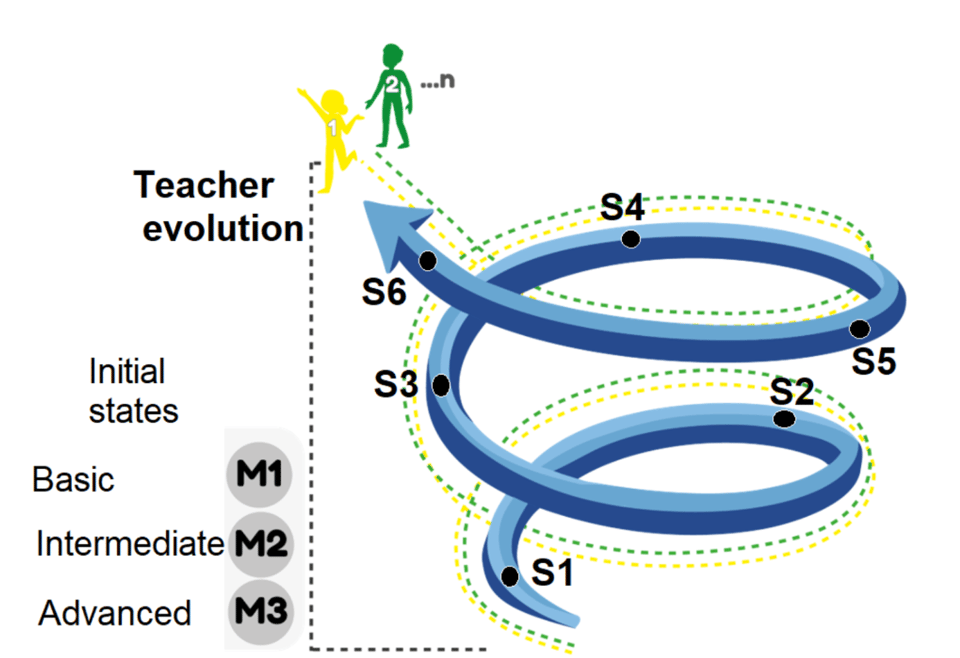

The core idea behind our model is that AI integration is not a one-time event. It is an iterative design practice. We designed the model as a spiral that professors move through repeatedly, deepening their skill and expanding their ambition with each cycle. To support this journey, we have also developed specialized AI assistants that guide faculty through each phase, so the process itself is AI-mediated.

Faculty enter the spiral at one of three levels depending on their experience: Basic (M1), Intermediate (M2), or Advanced (M3). From there, six stages unfold.

S1: Self-Diagnosis. The journey begins with an internal audit. The professor engages in an individual, objective, and confidential exercise to assess their current level of experience and knowledge regarding AI-mediated technologies. This is not merely a technical checklist; it is a reflection on how they currently apply these tools within their specific academic activities. By establishing this baseline, the teacher can define a personalized formative trajectory. Most faculty training assumes a uniform starting level. Ours does not. A professor who has been experimenting with AI for two years and one who has never touched it enter at different points and follow different trajectories. This alone eliminates a significant source of frustration and disengagement.

S2: Exploration of AI-Based Technologies. Once the baseline is established, the educator enters the exploration phase. Guided by the results of their self-diagnosis, the professor familiarizes themselves with tools, platforms, and applications that hold educational potential. The focus extends beyond "how to click" to a deeper understanding of functionality, benefits, limitations, and ethical implications. By examining academic use cases, teachers broaden their vision of what is possible, ultimately selecting the technologies that align most closely with their pedagogical objectives and unique classroom context.

S3: Design of the AI-Mediated Learning Experience. This is the heart of the pedagogical shift, and the stage that most tool-first training skips entirely. The professor plans a concrete learning experience where AI plays an active and meaningful role. Rather than superficial use, the educator defines clear learning objectives and selects AI technologies that directly support the competencies to be developed. This phase involves designing interactive activities, digital resources, rubrics, and feedback mechanisms. The goal is to ensure that the integration is pedagogically justified, student-centered, and tailored to the cohort's specific needs. We insist that design precede tool selection, not the other way around.

S4: Readiness and Preparation. Before entering the classroom, the experience must be "staged." This phase involves a triad of considerations: technical (configuring tools, ensuring access, and checking connectivity), pedagogical (planning the nature of accompaniment and feedback), and emotional (building teacher confidence and student motivation). The professor also prepares the monitoring mechanisms needed to observe how the experience unfolds in real time. This preparation bridges the distance between a good plan on paper and what actually happens in a room full of students.

S5: Deployment and Academic Management. At this stage, the plan is put into action. The professor guides students through their interaction with AI tools, documenting the process as it happens. Management goes beyond the classroom walls; it involves connecting the experience to the broader curriculum and to the student's formative path. We encourage collaborative work among peers during this phase, promoting the progressive integration of AI into broader academic management tasks such as planning and learning tracking. The professor's role shifts here to that of a facilitator and an ethnographer of their own practice.

S6: Teacher-Student Evaluation. The final stage of the cycle is a comprehensive evaluation from both perspectives. Educators and students jointly analyze achievement indicators, perceived usability, and the actual impact on learning. By identifying challenges and lessons learned, this critical reflection provides the data necessary to adjust the model for future iterations. This is what closes the loop: a single classroom activity becomes a source of institutional knowledge that feeds back into the spiral, making the next cycle more effective. Over time, a department or university accumulates real evidence about what works, for which students, and under what conditions.

Figure 1. Process model for the appropriation of artificial intelligence in teacher training at higher education institutions. Faculty enter at their assessed maturity level (M1, M2, or M3) and cycle through six stages, with each iteration building on the last.
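For readers who think in code, the structure described above can be sketched as a small data model. This is only an illustration, not part of the research itself: the class name `SpiralJourney`, its fields, and the `advance` method are hypothetical, and the sketch assumes that finishing S6 simply starts a new cycle at S1. How the entry level (M1, M2, or M3) shapes the content of each stage is not modeled here.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Maturity(Enum):
    """The three entry levels named in the model."""
    M1 = "Basic"
    M2 = "Intermediate"
    M3 = "Advanced"


# The six stages, in the order the model describes them.
STAGES = (
    "S1: Self-Diagnosis",
    "S2: Exploration of AI-Based Technologies",
    "S3: Design of the AI-Mediated Learning Experience",
    "S4: Readiness and Preparation",
    "S5: Deployment and Academic Management",
    "S6: Teacher-Student Evaluation",
)


@dataclass
class SpiralJourney:
    """Hypothetical record of one professor's passes through the spiral."""
    entry_level: Maturity
    cycle: int = 1          # which pass through the six stages this is
    stage_index: int = 0    # position within STAGES
    lessons: List[str] = field(default_factory=list)  # notes kept across cycles

    @property
    def stage(self) -> str:
        return STAGES[self.stage_index]

    def advance(self, lesson: Optional[str] = None) -> None:
        """Record an optional lesson, then move to the next stage.

        Assumption: after S6 the journey wraps to S1 of the next cycle.
        """
        if lesson:
            self.lessons.append(f"cycle {self.cycle}, {self.stage}: {lesson}")
        if self.stage_index == len(STAGES) - 1:
            self.cycle += 1
            self.stage_index = 0
        else:
            self.stage_index += 1


# Usage: one full pass through the six stages returns the professor to S1,
# now in the second cycle.
journey = SpiralJourney(entry_level=Maturity.M1)
for _ in range(len(STAGES)):
    journey.advance()
print(journey.cycle, journey.stage)  # prints: 2 S1: Self-Diagnosis
```

The point of the sketch is the wrap-around in `advance`: the evaluation stage does not end the process, it feeds the next cycle, and the `lessons` list stands in for the evidence that accumulates along the way.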

Why We Think This Matters Beyond Our University

Most conversations about AI in education stay at the level of "which tools should we use?" That question has a short shelf life. Tools change every few months. What does not change is the need for a structured way to think about integration.

Our spiral model reframes the problem. Instead of asking professors to keep up with an endless stream of new platforms, it gives them a repeatable process: assess where you are, explore what is available, design around learning objectives, prepare your environment, deploy and document, then evaluate and improve. That sequence works whether the tool is ChatGPT, something that does not exist yet, or something built in-house.

We believe this approach is transferable. You do not need to adopt the full model to benefit from it. Even pulling out one principle, like starting every integration effort with an honest self-diagnosis, or insisting that design precede tool selection, can shift how an institution approaches AI in the classroom.

By adopting this kind of systematic process, university faculty move away from being passive users of technology and become architects of AI-enhanced learning environments. That is the shift we are trying to enable: AI serving education, rather than education serving the tool.

We welcome collaboration and feedback from educators and institutions working on similar challenges. You can reach us through Universidad Autónoma de Occidente.

Further Reading

OECD Digital Education Outlook 2026: Exploring Effective Uses of Generative AI in Education

UNESCO: What You Need to Know About the New AI Competency Frameworks for Students and Teachers

UNESCO: Generation AI, Navigating the Opportunities and Risks of AI in Education

EU: Empowering Learners for the Age of AI, Draft AI Literacy Framework and Stakeholder Consultations