The Roll Up #001

2026-04-15

Read on the web: https://rollup.hackidle.com/issues/2026-04-14-issue-001/

My Projects This Week

- prowler. Extending FedRAMP 20x KSI coverage to Kubernetes and Microsoft 365 on top of the Low baseline work from PR #9198, wiring NIST 800-53 control mappings into the KSI framework files I'm touching, bumping the framework files to FRMR v0.9.42-beta, and fixing the dashboard modules for the new indicator IDs. Official PR coming soon. Demo below.

Demo: FedRAMP 20x KSI coverage for Kubernetes and M365 landing in Prowler

- scf-api. Public launch of a static JSON API serving the Secure Controls Framework's 1,468 controls, 33 families, and 249 framework crosswalks, with automated updates from official SCF releases. All the content is the SCF's; I'm just making it agent-friendly. Built in the shape of Vercel Academy's Agent-Friendly APIs guide: llms.txt, a markdown docs endpoint, machine-readable at every layer. This is the data backbone behind the next phase of myctrl.tools research.

- myctrl.tools. Grok support + UX work. Added Grok as a provider. Tightened left-side navigation and mobile scrolling. Added the OWASP Smart Contract Top 10 as a risk list. Notes on controls can now be bucketed by project or assessment, so you keep separate threads per engagement. Demo below.

Demo: Early look at the gap-assessment feature I'm building inside myctrl.tools

- fedramp-browser. Moved into hackIDLE this week (was fedramp-tui). A TUI for the official FedRAMP docs corpus. Renamed so it doesn't get confused with anything official from FedRAMP. Just an experimental Go CLI project. Demo below.

Demo: Navigating the FedRAMP docs corpus in the fedramp-browser TUI

- grclanker. Also moved into hackIDLE this week. An experiment that started as me dictating specs for some of the top services on the FedRAMP Marketplace into Wispr Flow between sets at the gym. Early look below.

grclanker: Terminal-first UI for the GRC-spec experiment

- fedramp-docs-mcp. Older MCP server, still maintained. Pairs with the fedramp-browser rename this week. Exposes the FedRAMP docs through the Model Context Protocol so Claude and other LLM clients can answer FedRAMP questions from the source of truth instead of relying on model memory. I've wired in some opinionated elements too. More coming. Demo below.

Demo: Claude answering FedRAMP questions from the source of truth via fedramp-docs-mcp

- rollup.hackidle.com. The site you're on. Astro + Buttondown + satori-rendered OG cards + a new original track every Tuesday. Source at hackIDLE/rollup.

- scoop-bucket + homebrew-tap. Package distribution for the tools I'll start shipping under the hackIDLE name.

- nist-cmvp-api. Scaffolding for a cryptographic-module-validation lookup tool that's been on my list for months (it's part of myctrl.tools now).

Research Notes

AI Assistance Reduces Persistence and Hurts Independent Performance

AI · RESEARCH · Grace Liu, Brian Christian, Tsvetomira Dumbalska, Michiel A. Bakker, Rachit Dubey

Rollup. Ten minutes of AI assistance during practice was enough to lower unassisted performance once the AI was taken away. How you use it matters more than whether you use it. Answer mode tanks, hint mode holds.

- 1,222 participants across fraction problems and reading comprehension. Participants were randomly assigned to AI-assisted or control conditions. Everyone tested without AI.

- AI-assisted group solved fewer problems on the test and quit more often on the ones they did attempt.

- 61% said they used the AI for direct answers. That cohort showed the steepest decline in both performance and persistence.

- The hints-only subgroup did not show the same regression pattern. That's a more important finding than the headline.

The juice. My read: value and learning live in the friction. When you dissolve that friction with AI and then take the AI away, the skill it was standing in for gets less practice. If you're using LLMs and coding agents to solve problems faster, you need to be reaching for higher-friction work somewhere else to keep growing. If you're just using AI to do the same things you already do, you may be trading away some of the practice that keeps those skills sharp. Use AI to extend yourself. Learn something new. Push into directions you wouldn't have gone.

"Machines of Loving Grace"

AI · ESSAY · Dario Amodei, Anthropic

Rollup. Dario's claim: powerful AI compresses 50 to 100 years of biology into 5 to 10. The constraint isn't compute. It's the speed of the physical world and biological complexity.

- "Country of geniuses in a datacenter" is Dario's frame for powerful AI, operating across biology, programming, math, and engineering.

- Speculative targets: reliable prevention of nearly all natural infectious disease, 95%+ cancer mortality reduction, CRISPR-descendant cures for most genetic disease.

- Dario sketches lifespan doubling to roughly 150 years. Existing drugs already extend max lifespan in rats by 25 to 50%.

- 10x the rate of major biological breakthroughs, bottlenecked by experiment latency and serial dependence, not by thinking speed.

The juice. An old piece I'd somehow never read. I come from a biophysics background, and didn't know Dario did too.

I did mitochondrial research at the University of Maryland over a decade ago. Lately I've been mapping where AI can compress medical research timelines the way Dario describes here. That's why I started bioidle.dev as a separate research track.

Open source died in March. It just doesn't know it yet.

SECURITY · SUPPLY CHAIN · Dan Lorenc, Chainguard

Rollup. Five supply-chain attacks in twelve days in March. Lorenc argues the default mitigation stack is weaker than people want to admit against compromised distribution paths.

- Axios and LiteLLM were part of the same March supply-chain wave, with stolen credentials and social engineering in the chain.

- Axios' scale made the blast radius ugly. The malicious versions were available briefly, but Axios is measured in tens of millions of weekly downloads.

- Pinning and scanning are not enough by themselves. Lorenc's point is that those controls assume too much about the upstream distribution path.

- Rebuild from reviewed source is Lorenc's only remaining trust model. Or pull from a rebuilt-from-source root like Chainguard's catalog.

The juice. Lorenc isn't saying open source is broken. He's saying the consumption model is. Scan-and-pin is a fine defense against a single compromised artifact; it's not a defense against a compromised maintainer account or poisoned distribution, which is what March actually was. If you're writing an SBOM requirement this quarter, decide whether "pinned hash" means "pinned to a maintainer-signed commit I reviewed" or "pinned to whatever CI grabbed last week." Those are different control states.
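A minimal sketch of why those two meanings of "pinned hash" are different control states. The artifact bytes and helper names here are hypothetical, made up for illustration; the only real API used is Python's standard hashlib and hmac. A pin proves the bytes match whatever was hashed when the pin was recorded, nothing more.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the artifact bytes as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def matches_pin(artifact: bytes, pinned_sha256: str) -> bool:
    """True if the artifact hashes to the pinned digest (constant-time compare)."""
    return hmac.compare_digest(sha256_hex(artifact), pinned_sha256.lower())


# Hypothetical artifact contents, for illustration only.
reviewed = b"module.exports = () => 'ok';\n"
tampered = reviewed + b"require('./postinstall-payload');\n"

# Control state 1: the pin was recorded from source someone actually reviewed.
pin_from_review = sha256_hex(reviewed)

# Control state 2: the pin was recorded from whatever CI pulled last week,
# which in this scenario was already the poisoned build.
pin_from_ci = sha256_hex(tampered)

print(matches_pin(reviewed, pin_from_review))  # reviewed bytes pass
print(matches_pin(tampered, pin_from_review))  # poisoned bytes are rejected
print(matches_pin(tampered, pin_from_ci))      # poisoned bytes pass their own pin
```

The third check is the uncomfortable one: the hash comparison works exactly as designed and still blesses the compromised artifact, because the trust decision happened when the pin was recorded, not when it was checked.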

GitHub Commit Autopsy

SECURITY · FORENSICS · ramimac, High Signal Security

Rollup. A commit impersonating Vercel CEO Guillermo Rauch appeared in actions/checkout without ever being merged, thanks to cross-fork object sharing and GitHub's trust-by-display model.

- Cross-fork object sharing means any commit in a fork is reachable from the upstream repo's object graph, even if never proposed as a PR. The surface is much larger than "what got reviewed."

- Client-side author metadata is the exploit surface. An attacker can spoof git config identity so GitHub renders the commit with Rauch's avatar and profile.

- Timezone manipulation is a separate tell. The page points to xz-utils as a distinct example of clock-hopping metadata, not as evidence tied to the Vercel imposter commit.

- Mitigation is local. Enable vigilant mode plus GPG/SSH verification on pull, because the server-side UI can still mislead you.

The juice. Rami (ramimac) puts out high-signal security research, consistently. Got to catch his Shai-Hulud talk at Unprompted earlier this year and the bar's only gone up. This piece is a clean reference for what malicious commit metadata actually looks like in the wild, and I want it handy for future repo-provenance work.
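To make "client-side author metadata" concrete, here is a small sketch that parses the header block of a raw commit object, the text you get from `git cat-file commit <sha>`. The sample commit is invented for illustration (the name, email, and message are hypothetical), and the parser is a simplification: it keeps only the last value for repeated headers such as multiple parents.

```python
def parse_commit_headers(raw: str) -> dict[str, str]:
    """Parse the header block of a raw git commit object into a dict.

    Headers end at the first blank line. Continuation lines (used by
    multi-line headers such as gpgsig) start with a single space.
    """
    headers: dict[str, str] = {}
    last_key = None
    for line in raw.split("\n"):
        if line == "":
            break  # blank line separates headers from the commit message
        if line.startswith(" ") and last_key is not None:
            headers[last_key] += "\n" + line[1:]
            continue
        key, _, value = line.partition(" ")
        headers[key] = value
        last_key = key
    return headers


# Hypothetical unsigned commit: the author line is plain text the committer typed.
SAMPLE = (
    "tree 4b825dc642cb6eb9a060e54bf8d69288fbee4904\n"
    "author Some Maintainer <maintainer@example.com> 1700000000 +0000\n"
    "committer Some Maintainer <maintainer@example.com> 1700000000 +0000\n"
    "\n"
    "Totally legitimate change\n"
)

headers = parse_commit_headers(SAMPLE)
print(headers["author"])
print("has signature header" if "gpgsig" in headers else "unsigned")
```

Nothing in the author or committer lines is authenticated; they are strings. A gpgsig header being present is also not proof on its own, since the signature still has to be verified against a key you trust (for example with `git verify-commit`).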

Seeing like an agent: how we design tools in Claude Code

AI · CRAFT · Thariq Shihipar, Anthropic

Rollup. Tool design for agents is an art, not a science. The tools your model needs depend on what it can already do, and those abilities keep changing under you.

- Three attempts at elicitation before the AskUserQuestion tool won. Editing ExitPlanTool confused Claude (plan and questions competing). Modifying output format wasn't guaranteed (format drift). A dedicated tool that blocks the loop until the user answers was what stuck.

- TodoWrite → Task Tool is the honest admission. Todos needed 5-turn system reminders early on. As models improved, reminders became limits and subagent coordination broke, so Tasks replaced Todos with dependencies and cross-agent sharing.

- Grep replaced RAG for codebase search. RAG was fast but fragile and required indexing. Giving Claude the Grep tool let it build its own context, and it turns out to be better at that than at working from pre-chewed chunks.

- ~20 tools in Claude Code and the bar for adding a 21st is high. The Claude Code Guide subagent is the progressive-disclosure answer to "we need to teach Claude about itself without adding more tools."

The juice. The part I keep coming back to: the scaffold you build for one model generation quietly becomes a cage for the next. Thariq's TodoWrite-to-Task story is the cleanest version of that I've seen written down. Before you ship any new tool, ask whether it's something the current model needs or something older models needed. Different answer.
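One way to picture the difference between a flat todo list and tasks with dependencies and cross-agent sharing is the sketch below. This is a hypothetical data structure for illustration, not Claude Code's actual Task tool schema; every field and function name is an assumption.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    """Hypothetical task record; not the real Claude Code schema."""
    id: str
    description: str
    depends_on: list[str] = field(default_factory=list)
    done: bool = False
    owner: Optional[str] = None  # which agent has claimed it, if any


def ready_tasks(tasks: dict[str, Task]) -> list[str]:
    """IDs of unclaimed, unfinished tasks whose dependencies are all done."""
    return sorted(
        t.id
        for t in tasks.values()
        if not t.done
        and t.owner is None
        and all(tasks[dep].done for dep in t.depends_on)
    )


tasks = {
    "schema": Task("schema", "Define the data schema"),
    "api": Task("api", "Build the API", depends_on=["schema"]),
    "docs": Task("docs", "Write the docs", depends_on=["schema"]),
    "tests": Task("tests", "Write API tests", depends_on=["api"]),
}

print(ready_tasks(tasks))      # only work with no open dependencies
tasks["schema"].done = True
tasks["api"].owner = "subagent-1"  # claimed work is hidden from other agents
print(ready_tasks(tasks))
```

A flat todo list can't express "don't start this yet" or "someone else has this", which is roughly the coordination gap the post describes once subagents enter the picture.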

Vercel Sandboxes push agent microVM infra

INFRA · AGENTS · Vercel

Rollup. Vercel's bet on agent-exec infra: Firecracker microVMs, Active CPU pricing, and sandboxing tied into the broader Fluid Compute stack. Same week Cloudflare is shipping Sandboxes GA and Mesh. Two different visions of where agent code should actually run.

- Firecracker microVMs under the hood. Vercel describes sandbox startup in milliseconds.

- Guillermo Rauch and Malte Ubl are claiming the top ComputeSDK benchmark result. I'm treating that as Vercel's benchmark claim, not an independent measurement.

- Unified Fluid Compute stack across Sandbox, Builds, and Functions. A perf win in one shows up across the others.

- Persistent sandboxes are in beta, according to Ubl, with programmable firewall controls on the roadmap.

- Billed on active CPU time, not I/O wait. Vercel claims up to 95% lower cost on bursty or I/O-bound workloads.

The juice. Personal bias up front: I still treat hosted sandboxes as a design tradeoff, not automatic isolation. The perf and DX wins from Cloudflare and Vercel are real, but I would test either one against the actual job before putting sensitive agent workloads there. Cloudflare's bet is Workers-native: persistent isolated environments with Mesh tying agents into private networks. Vercel's bet is Firecracker microVMs on a unified compute stack. Different answers to where agent code should run.
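The arithmetic behind a claim like "up to 95% lower cost" is easy to sanity-check. The sketch below assumes the same per-CPU-second rate under both billing models, which is a simplification and not something Vercel states; the rate and workload numbers are made up for illustration.

```python
def wall_clock_cost(wall_seconds: float, rate_per_second: float) -> float:
    """Cost when every second the sandbox is alive is billed."""
    return wall_seconds * rate_per_second


def active_cpu_cost(wall_seconds: float, cpu_utilization: float,
                    rate_per_second: float) -> float:
    """Cost when only the fraction of time spent on-CPU is billed."""
    return wall_seconds * cpu_utilization * rate_per_second


def savings_fraction(cpu_utilization: float) -> float:
    """Relative saving of active-CPU billing versus wall-clock billing."""
    return 1.0 - cpu_utilization


# Hypothetical agent job: 10 minutes alive, mostly waiting on model and network I/O.
wall = 600.0
utilization = 0.05   # on-CPU 5% of the time (assumed)
rate = 0.0001        # made-up price per CPU-second

print(f"wall-clock: {wall_clock_cost(wall, rate):.4f}")
print(f"active CPU: {active_cpu_cost(wall, utilization, rate):.4f}")
print(f"saving:     {savings_fraction(utilization):.0%}")
```

Under these assumptions the saving is just one minus utilization, so the 95% figure corresponds to a workload that sits idle about 95% of the time. A CPU-bound job would see close to nothing, which is why the claim is scoped to bursty or I/O-bound work.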

Building a CLI for all of Cloudflare

INFRA · DEV · Matt Taylor, Dimitri Mitropoulos, Dan Carter, Cloudflare

Rollup. Cloudflare is rebuilding Wrangler toward a single cf command for its broader API surface: ~3,000 HTTP API operations across 100+ products. The interesting piece isn't the CLI. It's Local Explorer.

- TypeScript schema generator is what Cloudflare built internally instead of leaning on OpenAPI, because OpenAPI couldn't represent the full CLI plus config plus binding surface. OpenAPI export comes out the other side.

- Local Explorer (open beta April 13) mirrors KV, R2, D1, Durable Objects, and Workflows as a local HTTP API at /cdn-cgi/explorer/api under wrangler or the Vite plugin.

- Agent-friendly pitch. Point a coding agent at that URL and it gets an OpenAPI spec against your local Cloudflare resources, so deterministic local testing finally works.

- npx cf or npm install -g cf installs the Technical Preview. Feedback via the Cloudflare Developers Discord.

The juice. Local Explorer is the actually-useful piece for anyone building on Cloudflare. Most agent-dev stories break at "now reach the local dev resources deterministically," and this closes that loop. The typed-schema-over-OpenAPI call is also worth watching for anyone shipping agent-consumable APIs.
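What "an agent gets an OpenAPI spec" buys you in practice is an enumerable list of operations. The sketch below walks a standard OpenAPI 3 document and lists its operations. It assumes the spec is ordinary OpenAPI JSON already loaded into a dict; the sample paths and operation IDs are hypothetical and are not Local Explorer's real routes.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def list_operations(spec: dict) -> list[tuple[str, str, str]]:
    """Return sorted (METHOD, path, operationId) tuples from an OpenAPI 3 dict."""
    operations = []
    for path, path_item in spec.get("paths", {}).items():
        for method, operation in path_item.items():
            # Path items can also hold non-operation keys like "parameters".
            if method in HTTP_METHODS:
                operations.append(
                    (method.upper(), path, operation.get("operationId", ""))
                )
    return sorted(operations)


# Hypothetical spec fragment, for illustration only.
sample_spec = {
    "openapi": "3.0.0",
    "paths": {
        "/kv/{namespace}/keys": {
            "parameters": [{"name": "namespace", "in": "path", "required": True}],
            "get": {"operationId": "listKeys"},
        },
        "/kv/{namespace}/values/{key}": {
            "get": {"operationId": "getValue"},
            "put": {"operationId": "putValue"},
        },
    },
}

for method, path, op_id in list_operations(sample_spec):
    print(f"{method:6} {path}  ({op_id})")
```

An agent with that list can plan calls against local resources without guessing at routes, which is the deterministic part.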

The Folder Is the Agent

AI · TOOLING · Kieran Klaassen, Every

Rollup. Three months of trying to make agent swarms work, then the punchline: the folder is the agent. A project folder with a well-tuned CLAUDE.md plus skill files is the specialist. You don't need an orchestration layer.

- 44 folders-as-agents in Kieran's workflow, each with specialized context built over months.

- Two-command dispatch layer. No LangGraph, no CrewAI, no MCP orchestration service. Just context-pinned folders and one routing step on top.

- Manual routing replaced with a dispatcher that routes work into the right folder.

- Model is commodity; folder carries the expertise. Swap models and the specialization persists.

The juice. Maps onto how I'm using Claude Code in this repo. Kieran pitches folders-as-agents as a general principle, not just his workflow. I think he's right for solo and small-team work, less sure for teams that need explicit state machines or reliability guarantees. Either way, the broader signal I take from it: context engineering is doing more work than model choice in 2026, and a lot of "agent framework" shopping is solving a problem most people don't have yet.
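A rough sketch of what a routing step over context-pinned folders could look like. This is not Kieran's dispatcher; the folder names, keywords, and scoring rule are all invented for illustration. The only detail taken from the post is that each folder carries its own CLAUDE.md.

```python
from pathlib import Path

# Hypothetical folders and trigger keywords.
ROUTES = {
    "newsletter": ("issue", "newsletter", "subscriber"),
    "compliance": ("fedramp", "control", "audit"),
}
DEFAULT_FOLDER = "general"


def route(task: str) -> str:
    """Pick the folder whose keywords appear most often in the task text."""
    text = task.lower()
    scores = {
        folder: sum(keyword in text for keyword in keywords)
        for folder, keywords in ROUTES.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else DEFAULT_FOLDER


def dispatch(task: str, root: Path) -> dict:
    """Describe where to run the task; launching the agent there is a separate step."""
    folder = root / route(task)
    return {"cwd": folder, "context_file": folder / "CLAUDE.md", "prompt": task}


job = dispatch("Map this control to the FedRAMP baseline", Path("agents"))
print(job["cwd"], job["context_file"])
```

The routing logic is deliberately dumb. The specialization lives in the folder's context files, so the dispatcher only has to get the working directory right.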

OpenAI Frontier agents: product + platform deep dive

AI · INDUSTRY · The Batch, DeepLearning.AI

Rollup. OpenAI's enterprise move is managing agents, not only building more of them. Frontier is a platform for agent identity, data access, evaluation, and billing across whatever framework the agent was built in.

- HP, Intuit, Oracle, State Farm, Thermo Fisher, and Uber are listed by OpenAI as early adopters. BBVA, Cisco, and T-Mobile are named pilots.

- Per-agent identity, permissions, and guardrails. Administrators can scope which employees or groups can invoke a given agent.

- Shared context across agents: data, tools, applications, workflow definitions. That's the "enterprise memory" story OpenAI hasn't pitched openly before.

- Promptfoo acquisition would bring AI red-teaming directly into the Frontier plane, subject to the deal closing.

The juice. Bundling Promptfoo into Frontier doesn't prove agent red-teaming is becoming platform-layer. It proves OpenAI wants it to. Worth watching as a signal, not a conclusion. What I'd bet on: enterprise auditors start asking for audit trails, access scoping, and red-team evidence living inside the agent console. Frontier is already pointing in that direction.

Also on my desk

Claude Code + coding agents

- AI · TOOLING · Claude Code through Vercel AI Gateway. Route via ANTHROPIC_BASE_URL, and set CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS=1 or Bedrock and Vertex will reject the beta headers.

Cloudflare

(plus the cf CLI + Local Explorer writeup above)

- INFRA · DEV · Cloudflare Sandboxes are GA. Persistent isolated environments for agents with terminals, interpreters, live preview URLs, secure credentials, wake-on-demand, and snapshots.

- INFRA · NETWORK · Cloudflare Mesh. Same private network shape for laptops, servers, agents, and Workers. Cloudflare says Mesh is free for up to 50 nodes and 50 users.

Agent discourse

- AI · ESSAY · The Center Has a Bias by Armin Ronacher. The grounded critics have already used the tools for weeks. The "neutral center" hasn't.

Tweets

Companies already can't prioritize vulnerabilities effectively. Flooding the pipeline with thousands of AI discovered bugs and framing them all as weaponized exploits doesn't help.

Build skills first, fall back to tools. Make reusable agent behavior a skill first; reach for a dedicated tool when the skill shape breaks down.

Pi with zero extensions. Start vanilla. Add extensions only for recurring pain your workflow cannot absorb.

Cloudflare Sandboxes, secure credential injection: Workers act as a trusted proxy so agents can make authenticated calls without seeing raw credentials. Egress policies can be customized globally or per sandbox.

Agent harnesses aren't black magic. The framework layer is easier to overcomplicate than people admit.

Source: Theo (t3.gg) · @theo · Apr 13

Z/L Continuum at AI Engineer Europe: Ryan Lopopolo's "be token billionaires" versus Mario Zechner's "slow down and read the code."

Source: Alex Volkov · @altryne · Apr 13

Hugging Face Kernels on the Hub: precompiled GPU kernels for your GPU, PyTorch, and OS, with claimed 1.7x to 2.5x speedups over PyTorch baselines.

Interesting GitHub Repos

- 3b1b/manim. The math-animation engine behind 3Blue1Brown. If you've wanted to make a math explainer video, start here.

- NationalSecurityAgency/ghidra. NSA's open-source software reverse-engineering framework. Forked it this week because a FedRAMP thread touched firmware analysis.

- sxyazi/yazi. Blazingly-fast terminal file manager in Rust. Finally replaced ranger in my workflow.

- awslabs/agent-squad. Multi-agent orchestration from AWS Labs. Early, but interesting to compare against Anthropic's Agent SDK and OpenAI's Agents SDK now that all three majors have one.

- cisco-ai-defense/mcp-scanner. Scan MCP servers for threats and security findings. Wired it into the myctrl.tools build pipeline so trust isn't just vibes.

- OWASP/OpenCRE. Common Requirement Enumeration. The Rosetta Stone for mapping across security frameworks, and obviously adjacent to the scf-api work.

Upcoming Events & Talks

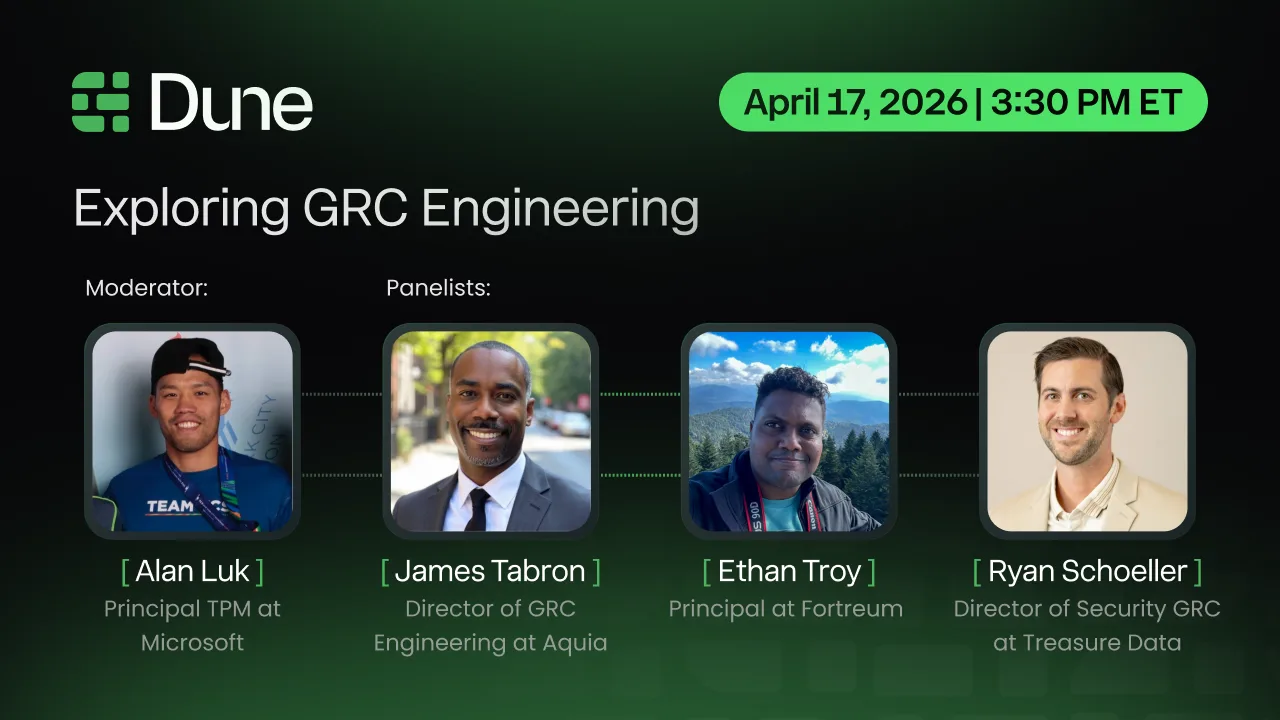

Fri Apr 17 · Exploring GRC Engineering

PANEL · DUNE SECURITY · Moderated by Alan Luk (Principal TPM, Microsoft)

Rollup. I'm on this virtual panel with James Tabron (Aquia) and Ryan Schoeller (Treasure Data). We're digging into what "GRC Engineering" actually means, and how it moves compliance programs past spreadsheets into automated, continuous risk management.

- Friday, April 17 · 3:30–4:30 PM ET

- Virtual. Free registration. Third of four webinars in the Dune series.

- Register here

The juice. GRC Engineering is the through-line for most of what I'm working on lately, and this panel pulls together people building that muscle from different angles. Come say hi.

- Issue #002 drops Tuesday, April 21. Same time, same format.

- Something you'd like me to cover? Reply to this email. It goes straight to my inbox.

Got forwarded this? Subscribe below so you don't miss the next one.

You're reading The Roll Up, a weekly Tuesday newsletter from hackIDLE. Forward freely.

Don't miss what's next. Subscribe to Ethan Troy:
