AI/TLDR Daily Digest

April 18, 2026


Claude Design by Anthropic Labs — AI-Powered Visual Creation in Research Preview

Claude Design lets you generate polished prototypes and presentations by describing what you need — no design skills required.

What is it?

Claude Design is a new Anthropic Labs product that turns text descriptions into visual designs: product wireframes, realistic prototypes, pitch decks, and marketing materials. It is currently in research preview for Claude Pro, Max, Team, and Enterprise subscribers. Finished designs can be handed off to Claude Code for development.

How does it work?

Claude Design is powered by Claude Opus 4.7. You describe what you want and Claude builds a first version, which you then refine through conversation, inline comments, and adjustment controls. For teams, Claude reads your codebase and design files to apply consistent branding automatically. Designs can be exported to Canva, PDF, PPTX, and HTML.

Why does it matter?

Design iteration is a significant time sink for product teams without dedicated designers. Early adopters report major efficiency gains: Brilliant reduced complex prototype iteration from 20+ prompts in other tools to just 2 prompts; Datadog compressed week-long design cycles into single conversations.

Who is it for?

Product teams, founders, and developers who need design assets without a dedicated designer.


Canva AI 2.0 — Agentic Design Platform with Prompt-to-Design, Memory, and Workflow Integrations

Canva's biggest redesign since 2013: describe a goal, get a fully editable on-brand design — no template needed.

What is it?

Canva AI 2.0 is the most significant redesign of Canva since the platform launched in 2013. Instead of starting with a blank canvas or picking a template, users describe what they want in conversation and the platform generates fully layered, editable designs with brand guidelines applied from the first output. It launched April 16 as a research preview for the first one million users who access Canva's homepage. The underlying engine is the Canva Design Model, described as the first foundation model built specifically to understand design structure, hierarchy, and component relationships.

How does it work?

Four architectural components drive the system. Conversational Design generates full designs from natural language descriptions, keeping every element individually editable throughout. Agentic Orchestration handles multi-step goals: describe a complete multichannel campaign and the platform selects the right design tools and coordinates them. Layered Object Intelligence ensures every generated element is a real, editable object rather than a flat image. Living Memory retains brand guidelines, style preferences, and project context across sessions. Beyond these four, app integrations with Slack, Notion, Zoom, Gmail, Google Drive, and Google Calendar support automated workflows such as turning meeting notes into newsletters, and Canva Code 2.0 now supports HTML importing.

Why does it matter?

Canva serves over 220 million users globally, most of whom are not professional designers. The shift from template selection to conversational generation means first drafts start on-brand and fully editable — a real change from existing template-based tools. For teams, Living Memory automates brand consistency across all outputs. Canva claims its internal AI models run 7x faster and 30x cheaper than comparable frontier alternatives, which matters for delivering this at 220M-user scale.

Who is it for?

Designers, marketers, and content teams who build visual assets daily.


HY-World 2.0 — Tencent's Open-Source Multi-Modal 3D World Generation and Reconstruction

Tencent's open-source world model turns text, images, and video into editable 3D scenes you can load into any game engine.

What is it?

HY-World 2.0 is Tencent's open-source multi-modal 3D world model. It accepts text descriptions, single images, multi-view photos, or video clips and outputs real 3D assets (polygon meshes and Gaussian splats) rather than video. The output imports directly into Blender, Unity, Unreal Engine, and NVIDIA Isaac Sim. The released WorldMirror 2.0 component handles 3D reconstruction from multi-view or video input in a single forward pass.

How does it work?

The full pipeline runs four stages: HY-Pano 2.0 generates a 360° panorama from the input; WorldNav plans a camera trajectory through the scene; WorldStereo 2.0 expands the panorama into a navigable 3D Gaussian Splat world; WorldMirror 2.0 then reconstructs depth, normals, camera parameters, point clouds, and 3DGS simultaneously. The released WorldMirror 2.0 (~1.2B parameters) runs on a single GPU and is accessible via CLI or a Gradio web demo.

Why does it matter?

Most existing world models output video — a flat representation that can't be edited, imported, or navigated. HY-World 2.0 outputs actual 3D geometry that plugs directly into the tools game developers and roboticists already use. Open weights lower the barrier for robotics simulation, game level generation, and 3D content creation.

Who is it for?

3D artists, robotics researchers, game developers, and VR/AR engineers needing open-source 3D world generation.


Hermes Agent v0.10.0 — Tool Gateway for Self-Growing Multi-Platform AI Agents

Self-growing agent framework that learns from interactions and runs on every platform from CLI to WhatsApp.

What is it?

Hermes Agent is an open-source autonomous agent framework from Nous Research that builds up a personalized skill tree from experience. It runs across Telegram, Discord, Slack, WhatsApp, Signal, and CLI, connecting to 200+ models via OpenRouter. Each session accumulates skills — after completing a complex task, the agent crystallizes the execution path into a reusable skill that can be invoked in future sessions.

How does it work?

The agent runs a continuous learning loop: it creates new skills after complex tasks, curates memories with periodic nudges, and enables skills to self-improve during use. The v0.10.0 Tool Gateway connects to Nous Portal's subscription infrastructure so web search, image generation, TTS, and browser automation work out of the box without separate API key management. The agent supports cron scheduling and runs in Docker, SSH, or cloud backends like Modal and Daytona.
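To make the "crystallize a skill, reuse it next session" idea concrete, here is a minimal sketch in Python. It is not Hermes Agent's actual code or API: the `SkillLibrary` class, its method names, the JSON file, and the three-step threshold for a "complex" task are all assumptions made for illustration.

```python
import json
import tempfile
from pathlib import Path


class SkillLibrary:
    """Toy store that turns completed multi-step tasks into reusable skills.

    Hypothetical illustration only; not the Hermes Agent implementation.
    """

    def __init__(self, path, min_steps=3):
        self.path = Path(path)
        self.min_steps = min_steps  # assumed threshold for a "complex" task
        # Load skills saved by earlier sessions, if any.
        self.skills = json.loads(self.path.read_text()) if self.path.exists() else {}

    def crystallize(self, name, steps):
        """Save the execution path as a skill if the task was complex enough."""
        if len(steps) < self.min_steps:
            return False
        self.skills[name] = list(steps)
        self.path.write_text(json.dumps(self.skills))
        return True

    def invoke(self, name):
        """Return the saved steps so a later session can replay them."""
        return list(self.skills[name])


store = Path(tempfile.mkdtemp()) / "skills.json"

# Session 1: a three-step task is saved, a one-step task is not.
session1 = SkillLibrary(store)
saved = session1.crystallize(
    "weekly_report", ["fetch metrics", "summarize", "post to Slack"]
)
skipped = session1.crystallize("say_hi", ["send greeting"])

# Session 2: a fresh object loads the skill from disk.
session2 = SkillLibrary(store)
replayed = session2.invoke("weekly_report")
print(saved, skipped, replayed)
```

The point of the sketch is only the persistence boundary: the second session never saw the original task, yet it can replay the saved steps.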

Why does it matter?

Most agent frameworks require managing a dozen separate API keys and integrations before you can do anything useful. Hermes Agent's subscription-based tool access, persistent cross-session memory, and broad platform support compress that setup into a single install. At 93K+ stars, the project has enough community adoption that the shared skill ecosystem is genuinely useful.

Who is it for?

Developers and power users who want a persistent personal agent that improves over time across multiple messaging platforms.


Cloudflare Agent Memory — Managed Persistent Memory Service for AI Agents (Private Beta)

Managed persistent memory for Cloudflare AI agents — agents can remember facts, events, instructions, and tasks across sessions without bloating the context window.

What is it?

Agent Memory is a Cloudflare-managed service that gives AI agents built on Cloudflare Workers a persistent memory layer that survives across sessions. Rather than stuffing everything into the context window, agents offload important information to Agent Memory and retrieve what's relevant on demand. It's currently in private beta as part of Cloudflare Agents Week.

How does it work?

Memories are classified into four types: Facts, Events, Instructions, and Tasks. Ingestion runs a multi-stage pipeline that extracts, verifies, classifies, and stores memories when the agent compacts its context. Retrieval runs five channels in parallel (full-text search, exact key lookup, raw message search, direct vector embeddings, and HyDE, short for Hypothetical Document Embeddings), then merges the results with Reciprocal Rank Fusion to surface the most relevant memories. The service is built on Durable Objects (isolation), Vectorize (semantic search), and Workers AI (model inference). Agents can also call memory tools directly: ingest, remember, recall, list, and forget.
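Reciprocal Rank Fusion itself is a small, well-known formula: each item scores the sum of 1 / (k + rank) across every ranked list it appears in. The Python sketch below shows the general technique, not Cloudflare's implementation. The channel names, the memory IDs, and the constant k = 60 (a commonly used default) are assumptions for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of memory IDs into one ranking.

    Each item scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is an assumed, commonly used default.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] += 1.0 / (k + rank)
    # Highest score first; ties broken by ID so the output is deterministic.
    return sorted(scores, key=lambda item: (-scores[item], item))


# Hypothetical results from five retrieval channels, best match first.
channels = {
    "full_text": ["m3", "m1", "m7"],
    "key_lookup": ["m1"],
    "raw_messages": ["m7", "m3"],
    "vector": ["m1", "m3", "m9"],
    "hyde": ["m3", "m9", "m1"],
}

fused = reciprocal_rank_fusion(list(channels.values()))
print(fused)  # ['m3', 'm1', 'm7', 'm9']
```

Because only ranks are used, the channels do not need comparable scores: a full-text relevance score and a vector similarity can be merged without normalizing either one.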

Why does it matter?

Context window degradation is one of the core unsolved problems in long-running AI agents: as the context fills up, important early information gets compressed away or lost. Agent Memory addresses this by letting agents externalize memory and retrieve it selectively. Fusing five retrieval channels with RRF is more robust than relying on a single vector store. Critically, it runs on the same Workers infrastructure as the agent, so there is no extra service to manage and no cross-region latency.

Who is it for?

Developers building long-running or multi-session AI agents on Cloudflare Workers.


NVIDIA Nemotron OCR v2 — Unified Multilingual OCR, 28x Faster Than PaddleOCR

A single unified OCR model from NVIDIA that reads six languages at 34.7 pages per second — no per-language models needed.

What is it?

Nemotron OCR v2 is NVIDIA's open-weights document OCR system, released April 17 on Hugging Face. It detects text regions, transcribes them, and reconstructs reading order, all in one pass. Unlike legacy OCR pipelines that require a separate model per language, a single Nemotron OCR v2 checkpoint handles English, Chinese (Simplified and Traditional), Japanese, Korean, and Russian. Two variants are available: v2_english (54M parameters, word-level output) and v2_multilingual (84M parameters, line-level output for mixed-language documents).

How does it work?

The architecture uses a shared RegNetX-8GF backbone (FOTS-based) whose computed features are reused by three components without reprocessing the image: a text detector (bounding boxes), a Transformer-based recognizer (transcription), and a relational model (reading order and layout structure). This shared-backbone design is the primary source of the 28x speed advantage over PaddleOCR v5. Training used 12.26M synthetic images generated from the mOSCAR multilingual corpus via a modified SynthDoG renderer. The model is available via pip install and through a free interactive Hugging Face Space.
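The shared-backbone idea can be shown with a toy in plain Python: run the expensive feature extractor once, then hand the same features to every head. Nothing here is NVIDIA's code. The class, the function names, and the "image as a grid of numbers" stand-in are hypothetical, and the real model's heads are far more involved.

```python
class SharedBackbonePipeline:
    """Run one backbone pass and reuse its features across several heads."""

    def __init__(self, backbone, heads):
        self.backbone = backbone
        self.heads = heads
        self.backbone_calls = 0  # counts how often the expensive step runs

    def run(self, image):
        self.backbone_calls += 1
        features = self.backbone(image)  # computed once per image
        # Every head reads the same features; the image is not reprocessed.
        return {name: head(features) for name, head in self.heads.items()}


def toy_backbone(image):
    # Stand-in for the feature extractor: one "ink" value per row.
    return [sum(row) for row in image]


def toy_detector(features):
    # "Detect" rows that contain any ink.
    return [i for i, value in enumerate(features) if value > 0]


def toy_recognizer(features):
    # "Transcribe" each inked row as a placeholder token.
    return [f"row{i}" for i, value in enumerate(features) if value > 0]


def toy_reading_order(features):
    # Order inked rows from most ink to least, as a fake layout model.
    inked = [i for i, value in enumerate(features) if value > 0]
    return sorted(inked, key=lambda i: -features[i])


pipeline = SharedBackbonePipeline(
    toy_backbone,
    {
        "detector": toy_detector,
        "recognizer": toy_recognizer,
        "reading_order": toy_reading_order,
    },
)

image = [[0, 1, 1], [0, 0, 0], [1, 1, 1]]
result = pipeline.run(image)
print(result, pipeline.backbone_calls)
```

Three separate models would each run their own feature extraction on the image. Here the counter stays at one per image no matter how many heads are attached.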

Why does it matter?

Production OCR pipelines typically require separate models per language, adding operational complexity and latency. A single unified checkpoint removes that overhead. At 34.7 pages/sec on an A100, real-time multilingual document processing is practical. The model ships under a permissive license with the full 12.26M-image synthetic training dataset (CC-BY-4.0) also released, which is useful for fine-tuning on domain-specific documents.

Who is it for?

Document processing engineers and ML practitioners handling multilingual text at scale.


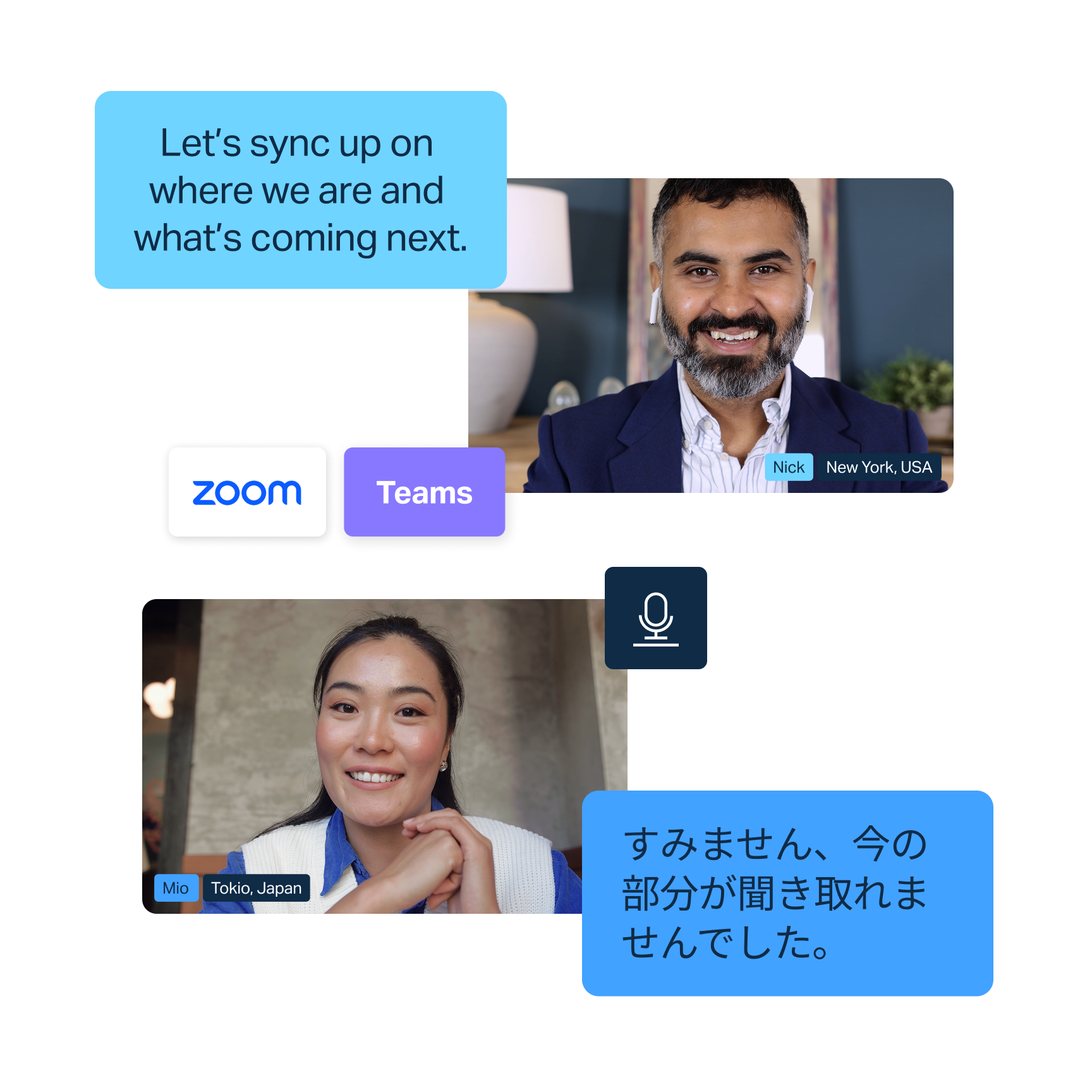

DeepL Voice-to-Voice — Real-Time Speech Translation Suite

DeepL extends its translation API to live voice — real-time speech translation for meetings, conversations, and custom apps across 40+ languages.

What is it?

DeepL Voice-to-Voice is a real-time spoken translation suite from DeepL, the company behind the translation API used by millions of developers. It launches with four products: Voice for Conversations (mobile and web, generally available now), Group Conversations (QR-code access for frontline teams, available April 30), Voice for Meetings (Zoom and Teams captions, early access in June), and a Voice-to-Voice API for embedding real-time speech translation in custom business apps. All 24 official EU languages are supported, plus Arabic, Thai, Hebrew, Bengali, Vietnamese, Tagalog, and Norwegian, for 40+ languages in total.

How does it work?

The pipeline converts speech to text, applies DeepL's proprietary translation models tuned specifically for spoken language, then synthesizes speech output. DeepL's in-house LLM handles the fragmentation and incomplete sentences typical in live conversation. A terminology customization hub lets teams add product names, company jargon, and technical vocabulary so domain-specific speech translates accurately. DeepL is developing a fully end-to-end voice model (bypassing the text step entirely) as an ongoing AI Labs research project.
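The three-step shape of that pipeline, and the role of a terminology glossary, can be sketched in a few lines of Python. This is a toy and not the DeepL API: every function name is hypothetical, the "audio" is just a dictionary holding a transcript, and translation is a word-by-word lookup in which glossary entries override the general dictionary.

```python
def speech_to_text(audio):
    # Stand-in for a real recognizer: the "audio" already carries its transcript.
    return audio["transcript"]


def translate(text, dictionary, glossary):
    # Word-by-word lookup. Glossary entries win over the general dictionary,
    # and unknown words pass through unchanged.
    words = []
    for word in text.lower().split():
        words.append(glossary.get(word, dictionary.get(word, word)))
    return " ".join(words)


def text_to_speech(text):
    # Stand-in for a real synthesizer: wrap the text as fake "audio".
    return {"transcript": text}


def voice_to_voice(audio, dictionary, glossary):
    # Chain the three stages: speech -> text -> translated text -> speech.
    return text_to_speech(translate(speech_to_text(audio), dictionary, glossary))


# Hypothetical English-to-Spanish data; "Gateway" is a product name to keep.
dictionary = {"restart": "reinicia", "gateway": "pasarela"}
glossary = {"gateway": "Gateway"}

spoken = {"transcript": "Restart gateway"}
with_glossary = voice_to_voice(spoken, dictionary, glossary)
without_glossary = voice_to_voice(spoken, dictionary, {})
print(with_glossary["transcript"])
print(without_glossary["transcript"])
```

With the glossary, the product name survives as "Gateway". Without it, the general dictionary translates the word like any other noun.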

Why does it matter?

DeepL has 200,000+ business customers who already trust its written translation API. The Voice-to-Voice API is the most developer-relevant part: embed live speech translation directly into contact center software, kiosk apps, or field applications without building a multi-step STT → translate → TTS pipeline yourself. An independent evaluation by Slator found 96% of professional linguists preferred DeepL Voice over native translation from Google, Microsoft, and Zoom.

Who is it for?

Developers building multilingual voice apps and enterprises running global customer-facing operations.


All releases at ai-tldr.dev

Simple explanations • No jargon • Updated daily
