Artanis #23: The Wrong Side of History

🙋 Ways you can help - PMs and CS teams managing prompts 🙋

We’d like to speak with people with the following profile:

1) They’re at a startup building an AI product

2) They’re a non-technical domain expert, like a Product Manager or Customer Service rep

3) They’re responsible for managing prompts or writing evals

Please get in touch if you know anyone who fits the bill!

🔬 A seamless way to write evals 🔬

We’ve come up with a new product! Now you can write systematic evals by just giving a thumbs up/down with a short reason. This hugely reduces the time and effort required compared to defining “correct” output from scratch. We’re very excited about this, because we think it gets to the core problem in AI development today - efficiently aligning output to human preferences.

📉 Progress in February 📈

Our metrics for February were:

Monthly revenue: $300 (unchanged)

Customers: 1 (unchanged)

Despite our work to reduce the engineering effort required to integrate Artanis, we missed our growth targets for the second month running. The main themes were:

1) Domain experts, such as PMs and CS teams, are becoming the frontline for dealing with AI output quality issues. They do this by changing the prompts, which doesn’t usually require code changes. The burden of dealing with AI quality issues is therefore shifting from engineers to domain experts. This shift is most obvious in the products that have the most traction.

2) Actioning human feedback isn’t a big timesink for most teams. Once an example has been flagged as a problem, it’s often relatively quick to find the root cause. The bigger issue is knowing which examples to look into, because AI teams often aren’t collecting the human feedback needed to flag them.

3) We met several teams for whom the problem resonated, but who had recently vibe-coded an internal tool to analyse their traces. It seemed to be working well enough for them, so they’d pretty much solved their own problem. This shift in “buy vs build” is a broader trend in SaaS that’s being talked about a lot - we got on the wrong end of it.

Ultimately, we felt we were on the wrong side of history, particularly on the first theme. Ownership of AI prompts will shift from engineers to domain experts as LLMs get better, because the main problem is becoming alignment rather than intelligence. This is already happening, and it’s most obvious in the companies with the most traction. While this is currently a niche, we believe that domain experts owning prompts will become the dominant paradigm. This is the market we want to build for.

💡 New persona, new problems 💡

Domain experts who own prompts currently suffer from the “whack-a-mole” problem. They make changes to prompts to fix problems, but this often causes regressions in previous behaviour or new problems to appear. This leads to a feeling of going in circles, and a lack of confidence that prompt changes will make the AI better overall.

The best way to stay on top of this is to have systematic evals. However, there’s currently a cultural issue where responsibility for evals falls into the gap between domain experts and engineering. Engineers don’t have the domain expertise to write the evals themselves, and domain experts can’t convert their intuitive notion of right/wrong into automated tests.
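To make the “whack-a-mole” problem concrete, here is a minimal sketch of what an eval suite buys you: run the same checks against output from the old and new prompt, then list what got fixed and what regressed. It is illustrative only. The `EvalCase` structure, the check functions and the example outputs are all made up for this sketch, and the outputs are hard-coded rather than coming from a real model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalCase:
    """One check on the AI's output (hypothetical structure for this sketch)."""
    name: str
    user_input: str
    check: Callable[[str], bool]  # returns True if the output is acceptable


def run_evals(cases: List[EvalCase], outputs: Dict[str, str]) -> Dict[str, bool]:
    """Apply every check to the output produced for its input."""
    return {case.name: case.check(outputs[case.user_input]) for case in cases}


def compare(before: Dict[str, bool], after: Dict[str, bool]) -> Dict[str, List[str]]:
    """List which checks a prompt change fixed and which it broke."""
    fixed = sorted(n for n in before if not before[n] and after[n])
    regressed = sorted(n for n in before if before[n] and not after[n])
    return {"fixed": fixed, "regressed": regressed}


# Made-up checks a domain expert might care about.
cases = [
    EvalCase("mentions_refund_window", "Can I get a refund?",
             lambda out: "30 days" in out),
    EvalCase("polite_greeting", "Hi",
             lambda out: out.lower().startswith("hello")),
]

# Made-up outputs standing in for real model responses under each prompt.
outputs_old_prompt = {
    "Can I get a refund?": "Yes, refunds are available.",
    "Hi": "Hello! How can I help?",
}
outputs_new_prompt = {
    "Can I get a refund?": "Yes, within 30 days of purchase.",
    "Hi": "What do you need?",
}

report = compare(run_evals(cases, outputs_old_prompt),
                 run_evals(cases, outputs_new_prompt))
print(report)
# {'fixed': ['mentions_refund_window'], 'regressed': ['polite_greeting']}
```

In this toy example the prompt change fixes the refund answer but quietly breaks the greeting. That's exactly the kind of regression that goes unnoticed without evals.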

Until recently, we’d been quite pessimistic about solving this with software. We felt the main problem with evals was cultural, not technical. It’s currently laborious for domain experts to write good evals. While they can quickly spot when something looks wrong, it’s hard to articulate upfront what “good” output looks like, particularly as AI products often do many things at once. We felt the only route through was to more heavily incentivise domain experts to write evals.

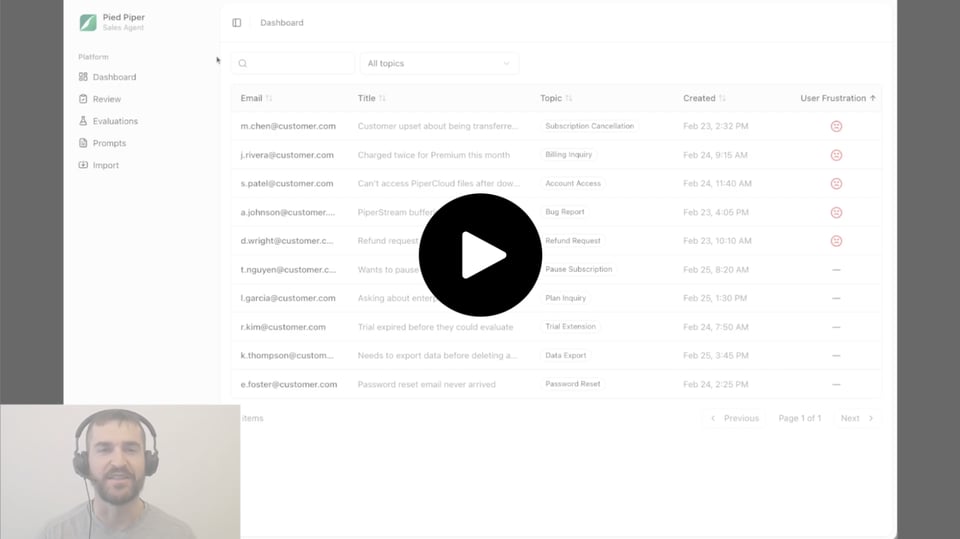

We can now make it seamless for domain experts to write evals, which may reduce the incentives needed. Curious about how? Watch our demo above!

🏹 Goal for March: zero-to-one on the new product 🏹

We’ve got a new product, so we need to pick up our first customer for it! This will either be an existing customer of one of our earlier products, or someone new.

🙏 Shout-outs 🙏

Special thanks for February go to:

Cait C - for going the extra mile

Henry M - for the intro to Danai

George T - for being very generous about our work with Convergence

Michael F - for connecting us to Beam

Polina M - for direct and clear feedback

Asita R - for the kind words and speaking opportunity

Ibrahim J - for being super responsive on intros

Kieran G - for being proactive with thinking of Manos

Simon and Dylan - for insights into the growing role of CS in AI development

Namid S - for bringing your whole team to a demo!

En Taro Tassadar,

Artanis Team