
BENCHMARK

MAJOR

2026-05-01

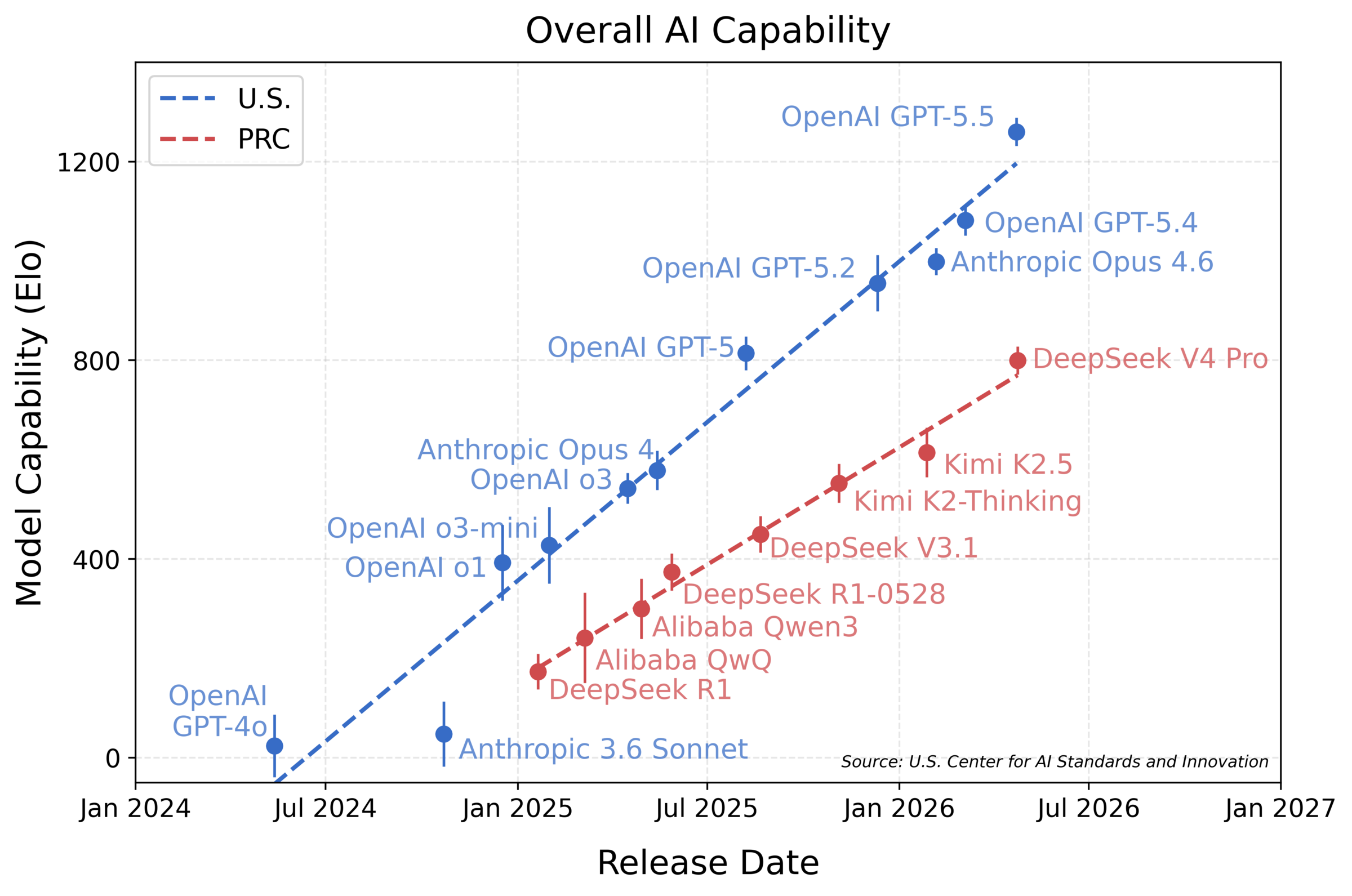

NIST CAISI Evaluation: DeepSeek V4 Pro Lags U.S. Frontier by ~8 Months Across Five Domains

NIST's CAISI publishes its first independent technical evaluation of DeepSeek V4 Pro across five capability domains.

What is it?

CAISI is the Center for AI Standards and Innovation, the AI-evaluation arm of NIST stood up under the 2025 America's AI Action Plan. It tests V4 Pro on nine benchmarks spanning cybersecurity, software engineering, natural sciences, abstract reasoning, and mathematics.

How does it work?

Two of the nine benchmarks are held out from public release to detect benchmark gaming: ARC-AGI-2's semi-private split and PortBench, a software-engineering benchmark CAISI built internally. The aggregate finding: V4 Pro performs similarly to GPT-5, which shipped about eight months earlier.
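Why held-out sets help can be shown with a toy heuristic. The function below is hypothetical and is not CAISI's published method. It compares how a model stands against a reference model on public benchmarks versus held-out ones. If a model matches the reference publicly but trails it on held-out items, its public scores may be inflated.

```python
def relative_gap(model_public: float, ref_public: float,
                 model_held_out: float, ref_held_out: float) -> float:
    """Hypothetical gaming signal: how much better a model looks against a
    reference on public benchmarks than on held-out ones.

    ~0   -> the model's standing is consistent across both kinds of benchmark
    > 0  -> public scores may be inflated (e.g. by training on leaked test items)
    """
    if ref_public <= 0 or ref_held_out <= 0:
        raise ValueError("reference scores must be positive")
    # Ratio to the reference on each kind of benchmark, then the difference.
    return model_public / ref_public - model_held_out / ref_held_out
```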

Why does it matter?

CAISI's independent numbers give policymakers and enterprise buyers a reference point that doesn't rely on vendor self-reporting, and document that V4 Pro is more cost-efficient than GPT-5.4 mini on five of seven benchmarks.

Who is it for?

AI policy analysts, enterprise procurement teams comparing US vs Chinese frontier models, infosec leads weighing open-weight Chinese models.


TOOL

MAJOR

2026-05-01

Nebius Acquires Eigen AI for $643M — Bringing MIT HAN Lab Inference Stack to Token Factory

Nebius pays $643M for Eigen AI, the MIT HAN Lab alumni behind AWQ and SpAtten, and bolts their inference stack onto Token Factory.

What is it?

Nebius Group, the Amsterdam-headquartered AI cloud spun out of Yandex, is acquiring Eigen AI, a year-old US inference-optimization startup founded by MIT HAN Lab alumni. The all-in price is roughly $643M, paid as ~$98M cash plus 3.8M Nebius Class A shares.
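The stated split implies a value for the share component. This is derived arithmetic, not a figure Nebius reported, and it assumes the ~$643M headline is simply cash plus stock valued at a single per-share price:

```latex
\underbrace{\$643\text{M}}_{\text{total}} - \underbrace{\$98\text{M}}_{\text{cash}} \approx \$545\text{M in stock},
\qquad
\frac{\$545\text{M}}{3.8\text{M shares}} \approx \$143\ \text{per share}
```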

How does it work?

Eigen AI's stack replaces the default kernels used to serve open models like Llama, Qwen, and DeepSeek with hand-tuned CUDA and Triton implementations, compresses weights with AWQ-lineage quantization, and reworks the KV cache for long-context throughput. Nebius will fold all of that into Token Factory, its managed inference service.
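For readers new to AWQ-lineage quantization, here is a simplified pure-Python sketch of the core idea. It is not Eigen AI's implementation and leaves out much of AWQ itself: it uses a fixed exponent `alpha` instead of AWQ's per-layer search, and one scale per output row instead of group-wise scales. Input channels with large average activations get their weights scaled up before 4-bit rounding, and the inverse scale is applied to the activations. The product is unchanged in exact arithmetic, but the salient weights lose less precision. The names `awq_style_quantize` and `quantized_matvec` are illustrative.

```python
def awq_style_quantize(weights, act_mean_abs, alpha=0.5, bits=4):
    """weights: rows of a (out_features x in_features) matrix.
    act_mean_abs: per-input-channel mean |activation| from calibration data.
    Returns (integer rows, per-row step sizes, per-channel scales s)."""
    qmax = 2 ** (bits - 1) - 1          # 7 for signed 4-bit
    qmin = -qmax - 1                    # -8 for signed 4-bit
    # Activation-aware scale: channels with larger activations get larger s.
    s = [max(a, 1e-8) ** alpha for a in act_mean_abs]
    q_rows, row_steps = [], []
    for row in weights:
        scaled = [w * sj for w, sj in zip(row, s)]
        # Symmetric round-to-nearest with one step size per output row.
        step = max(abs(v) for v in scaled) / qmax or 1.0
        q_rows.append([min(qmax, max(qmin, round(v / step))) for v in scaled])
        row_steps.append(step)
    return q_rows, row_steps, s


def quantized_matvec(q_rows, row_steps, s, x):
    """Compute W @ x from the quantized weights: (W * s) @ (x / s) == W @ x."""
    x_scaled = [xi / sj for xi, sj in zip(x, s)]
    return [
        step * sum(q * xi for q, xi in zip(q_row, x_scaled))
        for q_row, step in zip(q_rows, row_steps)
    ]


W = [[0.5, -1.0, 0.25], [2.0, 0.1, -0.3]]
act = [1.0, 4.0, 0.5]  # toy calibration statistics
x = [1.0, 2.0, -1.0]
q, steps, s = awq_style_quantize(W, act)
approx = quantized_matvec(q, steps, s, x)
exact = [sum(w * xi for w, xi in zip(row, x)) for row in W]
print(approx, exact)
```

Real AWQ also folds `1 / s` into the preceding layer so no extra division runs at inference time.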

Why does it matter?

Inference economics are now the bottleneck: the market for frontier-grade serving is more contested than the model race itself. Nebius is buying a team whose research underpins much of today's production inference, adding pressure on Together, Fireworks, and Groq.

Who is it for?

AI infrastructure teams shopping for managed inference; finance watchers tracking consolidation in the inference layer.


ECOSYSTEM

MAJOR

2026-05-01

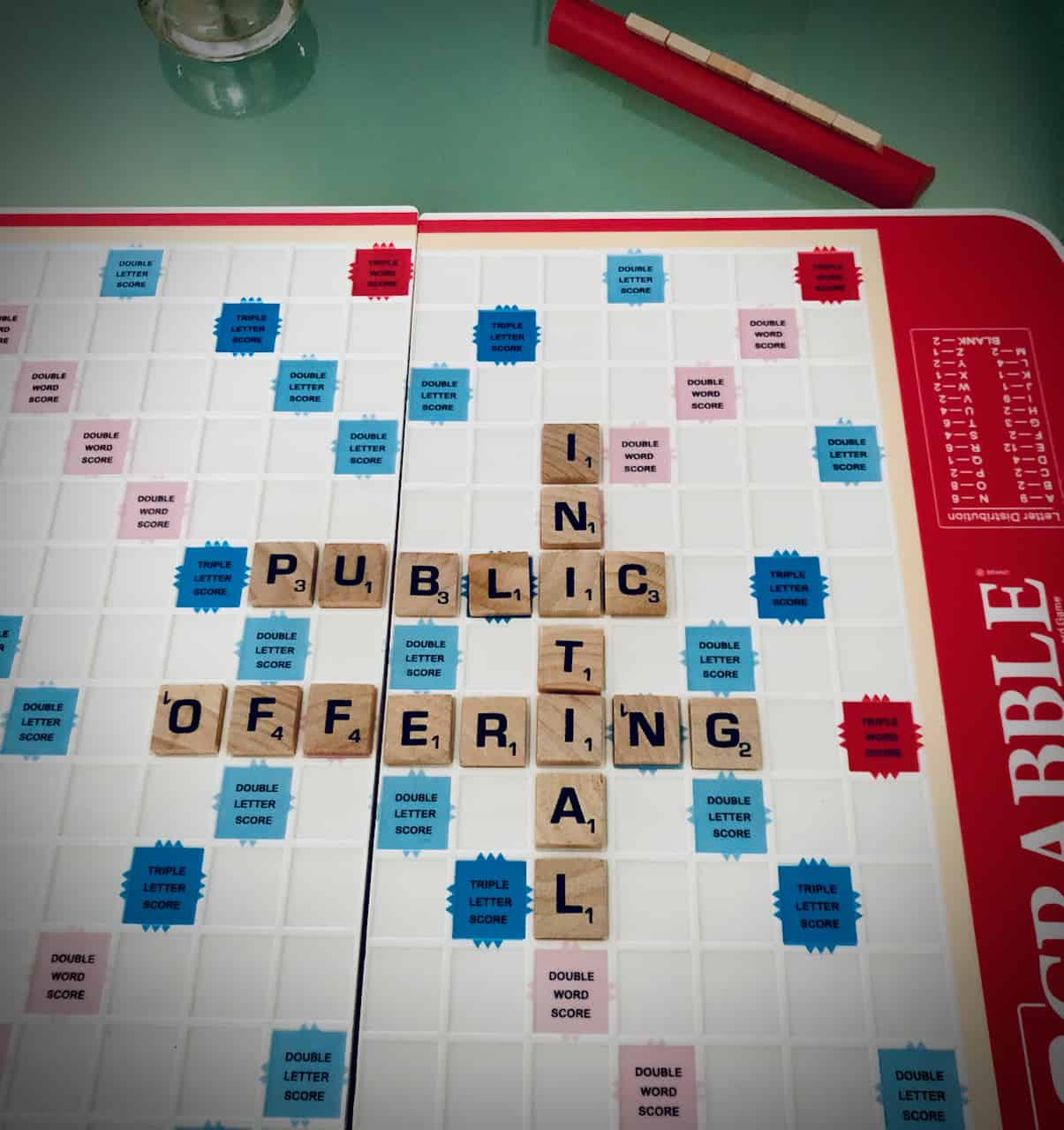

OpenAI's CFO Pushes IPO to 2027 — $2B/Month Revenue Against $1.15T in Compute Commitments

Sarah Friar quietly tells the board OpenAI is not ready for the public markets it had been rushing toward.

What is it?

A Wall Street Journal exclusive: CFO Sarah Friar has privately recommended postponing OpenAI's IPO from Q4 2026 to mid-to-late 2027, arguing the company is not yet ready to meet the reporting and growth standards public markets demand.

How does it work?

Friar's case rests on the gap between OpenAI's roughly $2B in monthly revenue and the ~$1.15T in fixed infrastructure commitments it has signed with Oracle, Microsoft, AWS, Google, and Nvidia. Sam Altman publicly co-signed a denial of the reported internal friction.
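To put the two figures on one scale, annualize the revenue. This assumes the ~$2B/month run rate holds flat; the commitments are spread over many years, so the ratio is a size comparison, not a payback period:

```latex
12 \times \$2\text{B} = \$24\text{B per year},
\qquad
\frac{\$1.15\text{T}}{\$24\text{B/yr}} = \frac{1150}{24}\ \text{yr} \approx 48\ \text{years of current revenue}
```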

Why does it matter?

An OpenAI IPO has been one of the loudest expected catalysts for the AI sector. A one-year slip keeps the late-stage AI cohort, including Anthropic's next round at $850–900B, reliant on private money for longer.

Who is it for?

AI sector watchers, late-stage investors, and OpenAI customers tracking the company's trajectory.


SECURITY

MAJOR

2026-05-01

CISA and Five-Eyes Allies Publish Joint Guidance on Securely Deploying Agentic AI

Five governments tell their critical-infrastructure operators to treat AI agents like zero-trust endpoints, not pet projects.

What is it?

A coordinated joint publication from cybersecurity agencies of the US (CISA, NSA), Australia, Canada, New Zealand, and the UK on how organisations should risk-assess and govern autonomous AI agents in critical infrastructure.

How does it work?

The guide defines five risk classes (privilege, design, behavior, structural, and accountability) and calls for defense in depth: cryptographically secured agent identities, short-lived credentials, encrypted communications, human-in-the-loop approval for high-impact actions, and an explicit prompt-injection threat model.
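Here is a minimal sketch of two of those controls, short-lived credentials and human approval for high-impact actions, written as an authorization gate in front of an agent's tool calls. Everything here is hypothetical: the action names, the 15-minute lifetime, and the `authorize` function are illustrative assumptions, not text from the guidance. Cryptographic identity and encrypted transport are out of scope.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum

# Hypothetical actions an operator has classified as high-impact.
HIGH_IMPACT_ACTIONS = frozenset({"delete_records", "modify_firewall", "push_to_production"})


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN_APPROVAL = "needs_human_approval"
    DENY_EXPIRED_CREDENTIAL = "deny_expired_credential"


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str
    issued_at: datetime
    ttl: timedelta = timedelta(minutes=15)  # short-lived by design; lifetime is an assumption

    def is_valid(self, now: datetime) -> bool:
        return self.issued_at <= now < self.issued_at + self.ttl


def authorize(cred: AgentCredential, action: str, now: datetime,
              human_approved: bool = False) -> Decision:
    # Expired credentials are rejected first; the agent must obtain a fresh one.
    if not cred.is_valid(now):
        return Decision.DENY_EXPIRED_CREDENTIAL
    # High-impact actions pause until a human signs off.
    if action in HIGH_IMPACT_ACTIONS and not human_approved:
        return Decision.NEEDS_HUMAN_APPROVAL
    return Decision.ALLOW


now = datetime.now(timezone.utc)
cred = AgentCredential("agent-42", issued_at=now)
print(authorize(cred, "push_to_production", now))  # → Decision.NEEDS_HUMAN_APPROVAL
```

Checking expiry before the action class means a stale credential never even reaches the approval queue.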

Why does it matter?

This is the first multi-government baseline for agentic AI deployment in critical infrastructure. Vendors selling agents into regulated buyers will be measured against it, and procurement teams now have a concrete checklist of controls to require contractually.

Who is it for?

CISOs and security leadership, AI agent vendors, and regulated-industry buyers evaluating agentic deployments.


SECURITY

SECURITY

2026-04-30

Gemini CLI Headless-Mode RCE (CVSS 10) — Workspace Auto-Trust Lets Untrusted PRs Pop CI Hosts, Patched in 0.39.1

A 'just trust the workspace' default in CI mode let attackers run code on the host before the agent sandbox even started.

What is it?

A maximum-severity (CVSS 10.0) remote code execution flaw in @google/gemini-cli and in the google-github-actions/run-gemini-cli GitHub Action that wraps it. Novee Security reported the flaw; fixes shipped in versions 0.39.1 and 0.40.0-preview.3.

How does it work?

In headless/CI mode, Gemini CLI automatically trusted the workspace folder and loaded configuration from the .gemini/ directory inside it. An attacker could add a malicious .gemini/ directory to a pull request and trigger code execution on the CI host before the agent sandbox initialized.
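Upgrading is the fix. As an extra, hypothetical guard for pipelines that review external PRs, a workflow could refuse to launch the agent when a pull request touches any `.gemini/` directory. The helper below assumes the changed-file list comes from something like `git diff --name-only` between the base and head commits. The function name is illustrative.

```python
from pathlib import PurePosixPath


def gemini_config_changes(changed_paths):
    """Return the changed paths that sit inside a .gemini/ directory at any depth."""
    flagged = []
    for raw in changed_paths:
        parts = PurePosixPath(raw).parts
        # parts[:-1] are the parent directories; the last part is the file itself.
        if ".gemini" in parts[:-1]:
            flagged.append(raw)
    return flagged
```

A CI step could feed this function the output of `git diff --name-only <base>...<head>` and fail the job when the result is non-empty. `<base>` and `<head>` are placeholders for the pipeline's own refs.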

Why does it matter?

Anyone running Gemini CLI in CI to review external PRs exposed the runner's secrets and credentials to any outsider able to open a pull request. It also previews the broader CI/CD attack surface that coding agents introduce as workspace-trust assumptions break down in automated pipelines.

Who is it for?

Teams running Gemini CLI in CI / GitHub Actions on pull-request workflows: update to 0.39.1 or later and bump the action to v0.1.22 immediately.
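Here is a conservative version check a pipeline could run before invoking the CLI. It encodes only the two fixed releases named above and assumes versions look like `0.39.1` or `0.40.0-preview.3`. It treats any later stable release as patched, and treats other preview builds and unrecognized strings as unpatched. `is_patched` is a hypothetical helper, not part of Gemini CLI.

```python
import re

# Matches "X.Y.Z" or "X.Y.Z-preview.N" (assumed version format).
_VERSION = re.compile(r"^(\d+)\.(\d+)\.(\d+)(?:-preview\.(\d+))?$")


def is_patched(version: str) -> bool:
    """True for stable >= 0.39.1 or 0.40.0-preview.3+; False otherwise (conservative)."""
    m = _VERSION.match(version.strip().lstrip("v"))
    if not m:
        return False  # unknown format: treat as unpatched
    major, minor, patch, preview = m.groups()
    core = (int(major), int(minor), int(patch))
    if preview is None:
        return core >= (0, 39, 1)
    # Only the 0.40.0 preview line from preview.3 onward is named as fixed.
    return core == (0, 40, 0) and int(preview) >= 3
```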


TOOL

MAJOR

2026-04-30

Cloudflare + Stripe Projects — AI Agents Can Open Accounts, Buy Domains, and Deploy Without a Human

An open protocol that lets AI agents discover, sign up for, and pay for real cloud services on a user's behalf.

What is it?

Cloudflare and Stripe co-designed a three-step open protocol that lets AI coding agents provision Cloudflare accounts, register domains, start paid subscriptions, and deploy Workers apps with no manual human steps beyond accepting terms of service.

How does it work?

Three components: discovery (the agent queries a service catalog), authorization (Stripe attests identity and Cloudflare creates or links an account via OAuth), and payment (Stripe tokenizes the card with a default $100/month spending cap per provider, so card numbers never pass through the agent).
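The payment step's spending cap works like a per-provider monthly ledger. The sketch below is hypothetical: in the announced design Stripe enforces the cap on the tokenized payment method, and `SpendingLimiter` and `try_charge` are illustrative names, not part of either company's API. It shows only the accounting rule. Charges accumulate per provider and month, and any charge that would push the total past $100 is refused.

```python
from collections import defaultdict
from decimal import Decimal

# Default per-provider monthly cap described in the announcement.
DEFAULT_MONTHLY_CAP = Decimal("100.00")


class SpendingLimiter:
    """Hypothetical ledger enforcing a monthly spending cap per provider."""

    def __init__(self, cap: Decimal = DEFAULT_MONTHLY_CAP) -> None:
        self.cap = cap
        # (provider, "YYYY-MM") -> amount already charged that month
        self.spent = defaultdict(Decimal)

    def try_charge(self, provider: str, month: str, amount: Decimal) -> bool:
        """Record the charge and return True, or return False if it would exceed the cap."""
        if amount <= 0:
            raise ValueError("charge amount must be positive")
        key = (provider, month)
        if self.spent[key] + amount > self.cap:
            return False
        self.spent[key] += amount
        return True


limiter = SpendingLimiter()
print(limiter.try_charge("cloudflare", "2026-05", Decimal("60")))  # → True
print(limiter.try_charge("cloudflare", "2026-05", Decimal("50")))  # → False (would reach $110)
```

`Decimal` avoids binary floating-point rounding in money arithmetic. Keying the ledger by provider keeps each provider's $100 budget independent.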

Why does it matter?

This removes the manual-signup wall that blocked coding-agent harnesses: an agent can now spin up a working production stack end-to-end. Launch integrations include Vercel, Supabase, Clerk, PostHog, Sentry, PlanetScale, and Inngest.

Who is it for?

Builders of autonomous coding agents and agent frameworks who want agents to provision real infrastructure end-to-end.


ECOSYSTEM

MAJOR

2026-05-01

Oscars Bar AI Actors and Writers — 99th Academy Awards Require 'Demonstrably Performed by Humans'

The Academy formally rules synthetic actors and AI-written screenplays ineligible for Oscar nominations starting at the 99th ceremony.

What is it?

A rule update from AMPAS codifying how generative AI interacts with Oscar eligibility: acting nominations require performances "demonstrably performed by humans with their consent," and screenplay nominations require human authorship, backed by an Affidavit of Human Origin.

How does it work?

Rules apply to the 99th Academy Awards (March 2027). The Academy reserves the right to request documentation about AI use. Technical categories — VFX, sound, editing — remain eligible for AI-assisted work, since those tools are treated as standard craft.

Why does it matter?

This is the first concrete eligibility line drawn by a major creative-industry body against generative AI in performance and authorship. It gives agents, guilds, and producers a clear bar to plan around while studios experiment with synthetic performers.

Who is it for?

Studio executives, screenwriters, actors, and producers navigating AI use in film production and awards strategy.

Academy of Motion Picture Arts and Sciences



All releases at ai-tldr.dev

Simple explanations • No jargon • Updated daily
