A screenwriter defends their use of AI

But if the only way a screenwriter can manage their time is by using AI, is it really a profession worth pursuing?

“The Comeback” premiered on HBO in 2005 as a Hollywood satire about the travails of a middling sitcom actress named Valerie Cherish (Lisa Kudrow). She’s been out of work for a few years, but when she’s asked to audition for a new series, it comes with an awkward stipulation: If she’s cast, a reality TV crew will follow her around during the process to capture her “comeback.”

Desperate for validation, she is ridiculous and forever failing to read the room, but she also has an admirable and old school idea of professionalism — which means she’s usually some combination of wrong and right in any given moment. It’s horrifying! But also very, very funny!

The second season premiered 10 years later. Valerie’s former showrunner, an odious guy who made her life miserable while shooting the aforementioned sitcom, turned that experience into a prestige dramedy and Valerie, reluctant to turn down an opportunity no matter how humiliating, agreed to play this semi-fictionalized version of herself.

A decade-plus later, “The Comeback” has returned once again — rejoice! — premiering last week with a season focused on AI.

A TV network looking to revive the multi-camera comedies of old hires Valerie to star in her own sitcom. Even though two showrunners have their names on the scripts, the episodes are actually written by AI. Chaos and absurdity ensue.

So far, “The Comeback” is the only show to tackle AI with any kind of gusto. The first season of “The Studio,” another skewering of Hollywood which premiered last year on Apple (and won a boatload of awards), pointedly did not, and it’s one of the reasons I found it so toothless. You can’t satirize Hollywood by ignoring one of the biggest threats to the industry.

There is no consensus about AI, but I think the issues are clear.

Many who work in TV and film feel otherwise, including Dara Resnik, a longtime screenwriter whose credits include “Castle,” “Jane the Virgin” and the Netflix version of the “Daredevil” TV series.

She recently posted some thoughts about AI in a newsletter titled “Everyone is Lying” and acknowledges that it’s often a contentious conversation:

So when the subject comes up now, I parse words. I speak carefully. I match the room.

But no more:

I’m done doing that.

Here’s her background:

I’ve been a WGA member for 20 years. I’ve run two shows. I’m currently in active development on pilots. I’m contextualizing because what I’m about to say is going to irritate some people, and you should know I have skin in this game.

Fair enough. So let’s dig in.

Her point of view

The uncomfortable reality is that usage is pervasive and most people know it and are scared to name it.

That’s really interesting. I also think many viewers already suspect AI is being used by screenwriters.

Resnik brings this up because the WGA (the Writers Guild of America, the union for screenwriters) will soon begin negotiating its next contract with the studios.

Where does Resnik stand on AI? “I don’t let AI write for me,” she says.

But she does use it.

Let’s go through her arguments:

I brain-dump everything — ideas often in no particular order, half-formed research, fragments — and instead of waiting until I have the energy to sit alone and organize it into coherence, AI does a lot of that work for me.

“I brain-dump everything into AI” is a direct contradiction of “I don’t let AI write for me” because writing is fundamentally thinking.

I want to emphasize this. All the thinking that happens before you begin writing a draft — organizing your thoughts into coherent themes and ideas — is, in fact, writing.

Here’s Resnik describing one example of how she used AI when writing a pitch for a bioterrorism thriller:

I had a comprehensive pitch, but I couldn’t “solve” the medical problem in the third act of the season — I knew where I wanted to end medically, but I couldn’t figure out how to treat the fictional pathogen. And while I have several talented doctor friends at my disposal, there’s a difference between actual medicine and TV medicine. I gave Claude the setup, the medical parameters, told it how I wanted the season to end, and let it do the heavy lifting on the theoretical TV medicine.

The heavy lifting? Figuring out how to solve a fictional problem? This is writing.

We’re not having an honest conversation unless we acknowledge this.

Here’s more:

Sometimes [the AI] makes connections I wouldn’t have made. Sometimes I have to throw the whole thing out. But I don’t have to wait until after I finish the parent-teacher conference, advise my latest mentee, or do the dishes anymore.

Talking through ideas with other people can be beneficial and in some cases, that conversation might result in making connections the writer otherwise wouldn’t have made on their own. So I’m interpreting this as Resnik saying: It’s faster and more convenient to do that with AI.

But whatever connections AI is making will never be intentional. Or based on an understanding of the human experience. Part of the storytelling process has become randomized.

Will that ultimately feel hollow to audiences? I don’t know.

I’m a single mom, a showrunner, a professor, a mentor. Sometimes the only free time I have is at 1am. Percolating on 10 ideas while someone else makes sure my house is running is a luxury I don’t have, and frankly, it’s a luxury most women don’t have. AI gives it back to me. That feels like democratization in a world that has always asked women to work harder for less.

Does that mean AI use is only legitimate for women who are single parents who also have at least one other job in addition to being a writer?

Because framing this as a social justice issue — “democratizing a world that has always asked women to work harder for less” — stands out as the most disingenuous part of her argument.

There are numerous reasons AI use should concern us. They are ethical and practical.

Let’s go through some of them:

Human rights abuses

According to reporting from Reuters:

Researchers from the Distributed AI Research Institute identified instances of labor abuse for those that work as data labelers in the development of the large language models necessary to the development of AI systems. Data labelers often are paid low wages to perform repetitive labeling tasks, and much of this work is performed in regions outside the U.S.

Whatever time crunch issues Resnik describes, does that justify the use of AI when labor abuses are the material reality for people who make AI functional?

There is no such thing as moral purity when you exist in a society run by corrupt entities. Sometimes you have to buy products from companies you find reprehensible. Sometimes you’re employed by a company you find reprehensible. Everyone makes compromises they can live with. This can feel bad enough already. Why add to that?

Theft

AI exists by virtue of scraping the internet to steal the work of actual human beings. That means authors, journalists, researchers, academics and, yes, screenwriters — some of whom might also be single parents juggling multiple jobs.

Do their livelihoods and efforts not matter if the tradeoff means a screenwriter with a busy schedule can “percolate on 10 ideas” more efficiently?

Environmental harms

AI data centers require a disproportionate amount of water and electricity, and that’s bad for everyone considering the state of the climate crisis. On a more day-to-day basis, it means higher rates passed on to consumers. And, in some cases, resources being diverted away from communities to service those data centers.

Guess who this affects the hardest? Black people.

Here’s a story from Essence:

AI is infrastructure. And like other forms of infrastructure in this country — highways, power plants, industrial corridors – has often been placed where political resistance is weakest. Too often, that has meant Black communities absorbing the costs while others reap the benefits.

And another from Capital B:

In more rural communities, residents near data centers are reporting that the water in their taps is brown and murky or does not drip out at all. The centers are also leading to at least 200 new power plants being built to meet the new energy demands of AI, according to an analysis of permit applications. Studies show that power plants are most likely to be constructed in Black neighborhoods and worsen the risks of cancer and respiratory disease.

How are these realities, without which AI would not exist, “democratizing,” unless you discount the humanity, experiences and well-being of Black people?

The U.S. has a long history of doing just that in the name of capitalism.

It’s a choice to reframe AI use as feminism, because that very obviously excludes Black women, who the world has always expected to work harder — and for much, much less.

Resnik’s inability (uninterest?) to seriously contend with that is as concerning as it is unpersuasive.

Do the ends justify the means?

In my opinion, no. The means are shitty, but so are the ends.

A journalist could offer a similar argument to Resnik’s: Why shouldn’t they just “dump” all the info they’ve gathered into AI and let it sort, organize and “write” the story? Why wait for a human to synthesize all that information themselves?

In fact, that’s what the Cleveland Plain Dealer is doing right now.

Jackson McCoy is a student journalist at Ohio University and this is what he wrote about the Plain Dealer’s choice:

Generative AI is easily ideologically swayed, racist and environmentally devastating. These are well-documented facts across research institutions and media outlets. It regurgitates other people’s words to create unoriginal, formulaic and weak writing.

To embrace generative AI is to embrace these faults; to not acknowledge these faults is to enshrine them in workplace practices.

These kinds of clear-headed ideals give me so much faith in the generation that’s coming up! It’s a rare sign of optimism that there are young people who understand and are pushing back against the problems inherent in AI.

Because reporting a story is never just spitting out information. It’s a constant expression of decision-making: What information goes up top? Why is it going up top? How do you get across tone? Is there room for wit or humor? Are there sources whose statements are disingenuous, contradictory, or leave more questions than answers? How do you want to handle their quotes in order to give context to that? All of these issues need to be handled by a human being.

What’s fair?

One of Resnik’s arguments boils down to: If you can’t beat ‘em, join ‘em. That sounds like capitulation. Perhaps a more diplomatic word would be exhaustion.

Well, who isn’t exhausted right now?

But it’s a mistake to accept that AI is a fait accompli.

This week OpenAI announced it is shutting down its Sora AI video app mere months after it was launched. According to the Hollywood Reporter:

Disney is also exiting the deal it signed with OpenAI last year, in which it pledged to invest $1 billion in the company and agreed to license some of its characters for use in Sora.

Here’s another announcement from the same company this week:

OpenAI has shelved plans to release an erotic chatbot “indefinitely” as it refocuses on its core products, following concerns from staff and investors about the effect of sexualized AI content on society.

Here’s how AI skeptic David Gerard frames this idea of so-called inevitability in a post titled: ‘AI is here to stay’ — is it, though? What do you mean ‘stay’?:

“you have to admit, AI is here to stay.”

Well, no, I don’t. Not unless you say what you actually mean. What’s the claim you’re making? Herpes is here to stay too, but you probably wouldn’t brag about it.

What they’re really saying is “give in and do what I tell you.”

The shortcuts and tradeoffs

Resnik is not wrong to point out the real stressors of everyday life. Women tend to shoulder a heavier load — mental and logistical — when juggling both professional and domestic obligations.

As an industry, Hollywood increasingly expects an unsustainable amount of unpaid work from screenwriters, with no promise that their efforts will see the light of day, let alone any kind of compensation.

But does the remedy lie in the exploitation of others?

“My use of AI hasn’t taken anything from anyone,” Resnik says. But that’s not true, and her definition of “anyone” is extremely narrow if it doesn’t account for the many people who are, indeed, adversely affected by AI. Are they merely abstractions, rather than people she knows personally, like her assistant?

Here’s another way to put it: If the only way a screenwriter can manage their time is by using AI, is it really a profession worth pursuing?

Because I don’t think audiences will follow screenwriters down this road, not in the long run.

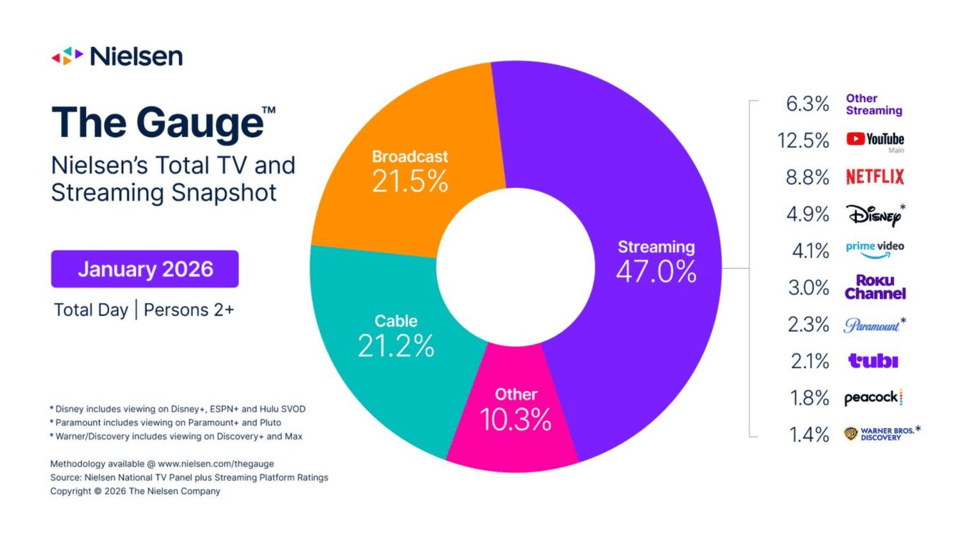

YouTube consistently outdraws all the other streaming platforms, even though it’s not really a platform focused on scripted comedy and drama.

Fewer films and TV shows are being made these days and that was inevitable — media companies were over-saturating our screens and overspending to do it — but we’re in an especially lackluster moment for television, where the frequent joke you hear is that a show might as well have been written by AI.

Maybe the dirty secret is that many already are, at least at some stage of the game. This isn’t how you keep current audiences engaged, or attract younger ones.

For executives, the long-term health of the TV and film industry doesn’t appear to be a focus or concern. Nor is cultivating and nurturing new talent. Lock in a few super-producers and bold-face names, ask them to churn out an assembly line of content, most of it based on IP, and you’ve just described much of the current landscape. This is a model that effectively cedes all effort to AI.

Choices

Pursuing a career in Hollywood is notoriously challenging. That’s always been the case. Very few people actually make it.

For a while, a decent chunk could at least get by. But the middle class of the entertainment industry is shrinking.

Even among the rarified upper levels of the business, I suspect those numbers will get smaller as time goes on. Everything is in free fall.

But I struggle to see how screenwriters can effectively argue for better pay when some are willing to offload any part of their workload to AI.

Here’s Resnik in her newsletter:

We need to start talking about how we use it, why we use it, and how to use it ethically. Or I promise you, the streamers, studios, and corporations are going to dictate that conversation for us.

I appreciate that she wants to have the conversation, because I think she’s right: The studios would prefer to be in charge of these outcomes.

The industry is using it. The question is whether writers are the only ones who opt out on principle while everyone above us in the food chain doesn’t. I’d rather eat than be eaten.

Is it eat or be eaten … or willingly giving yourself a flesh-eating disease? Or herpes, to use David Gerard’s metaphor. Because that’s what this kind of self-sabotage sounds like.

I’m not downplaying the challenges. It’s scary to contemplate how you’re going to pay your bills when your profession is predicated on continual uncertainty.

That’s true of many jobs right now, even outside of Hollywood. (The favored snarky insult to laid-off reporters — “learn to code” — is an even sicker joke now, considering how many engineers have been laid off because of the supposed wonders of AI.)

Principles are hard to hold onto when the going gets tough. But here’s another way of looking at it: Using AI to formulate ideas is just admitting that your skills and talents can be replaced by AI.

How much should a show creator actually be paid if a portion of the work was done by AI? Will studios start asking the WGA to agree to contracts that allow for rebates depending on how much AI was used? Should awards bodies start scrutinizing for AI usage when determining nominee eligibility? How much would be allowable?

Cognitive surrender

A study was published this week with the title: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender.

Cognitive surrender. Wow. That phrase should chill anyone, but especially writers.

Here’s what the study found: People confused AI’s reasoning for their own.

But we already know that regularly using AI is leading to a kind of intellectual atrophy. And we know this because other recent studies have borne it out, as well, drawing a “likely connection between large language models (LLMs) — colloquially grouped under the banner of AI — and a direct cognitive cost, particularly when it comes to our ability to think critically.”

Even anecdotally, you can see how critical thinking has eroded in tandem with the rise of AI.

It’s hard to take a writer seriously when they won’t take the power of their own imagination seriously — the thing that makes you you, and not just some amalgamation of hundreds of thousands of other ideas spit out by a computer.

We should want individuality from people who write TV and film.

Writers should want it from themselves.